Featured article of the week: July 17–23:

"Effective information extraction framework for heterogeneous clinical reports using online machine learning and controlled vocabularies"

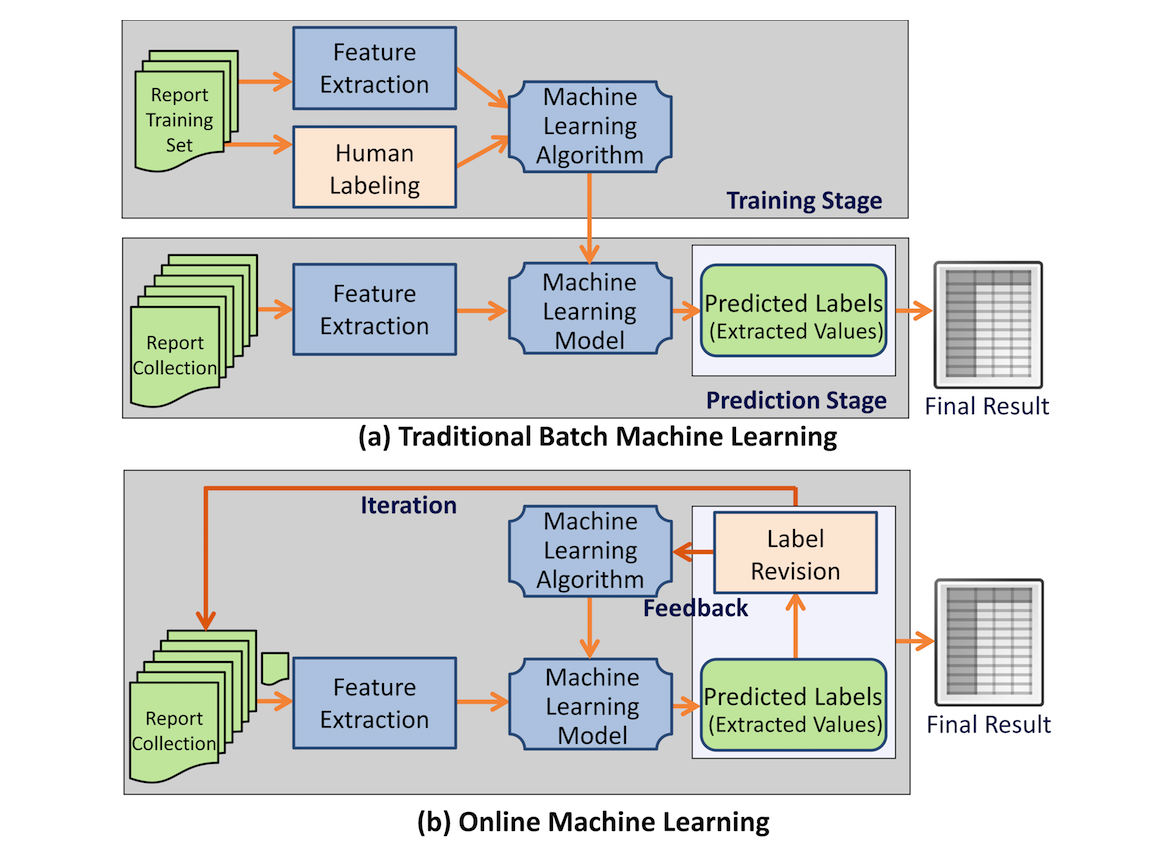

Extracting structured data from narrated medical reports is challenged by the complexity of heterogeneous structures and vocabularies and often requires significant manual effort. Traditional machine-based approaches lack the capability to take user feedback for improving the extraction algorithm in real time.

Our goal was to provide a generic information extraction framework that can support diverse clinical reports and enables a dynamic interaction between a human and a machine that produces highly accurate results.

A clinical information extraction system IDEAL-X has been built on top of online machine learning. It processes one document at a time, and user interactions are recorded as feedback to update the learning model in real time. The updated model is used to predict values for extraction in subsequent documents. Once prediction accuracy reaches a user-acceptable threshold, the remaining documents may be batch processed. A customizable controlled vocabulary may be used to support extraction. (Full article...)
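
The interactive loop described above (predict, let the user confirm or correct, update the model immediately, then switch to batch processing once accuracy is acceptable) can be sketched in a few lines. This is a minimal illustration, not IDEAL-X's actual learner: `OnlineExtractor`, `process`, the `ask_user` callback, and the rolling-window accuracy rule are all hypothetical, and the "model" is just a count of confirmed controlled-vocabulary terms.

```python
from collections import Counter, defaultdict


class OnlineExtractor:
    """Hypothetical online learner: remembers which vocabulary terms users confirmed."""

    def __init__(self, vocabulary):
        self.vocabulary = vocabulary        # field -> set of allowed terms (controlled vocabulary)
        self.counts = defaultdict(Counter)  # field -> how often each value was confirmed

    def predict(self, field, text):
        candidates = [t for t in text.lower().split() if t in self.vocabulary[field]]
        if not candidates:
            return None
        # Prefer the candidate confirmed most often in earlier documents.
        return max(candidates, key=lambda t: self.counts[field][t])

    def feedback(self, field, value):
        self.counts[field][value] += 1      # real-time model update after each document


def process(documents, extractor, field, ask_user, threshold=0.8, window=5):
    """Interactive review until recent accuracy reaches the threshold, then batch mode."""
    hits, results = [], []
    for doc in documents:
        prediction = extractor.predict(field, doc)
        if len(hits) >= window and sum(hits[-window:]) / window >= threshold:
            results.append(prediction)      # batch mode: accept predictions without review
            continue
        value = ask_user(doc, prediction)   # user confirms or corrects the prediction
        hits.append(prediction == value)
        extractor.feedback(field, value)
        results.append(value)
    return results
```

The threshold and window size are arbitrary here; in practice they would be whatever the user considers acceptable.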

Featured article of the week: July 10–16:

"Selecting a laboratory information management system for biorepositories in low- and middle-income countries: The H3Africa experience and lessons learned"

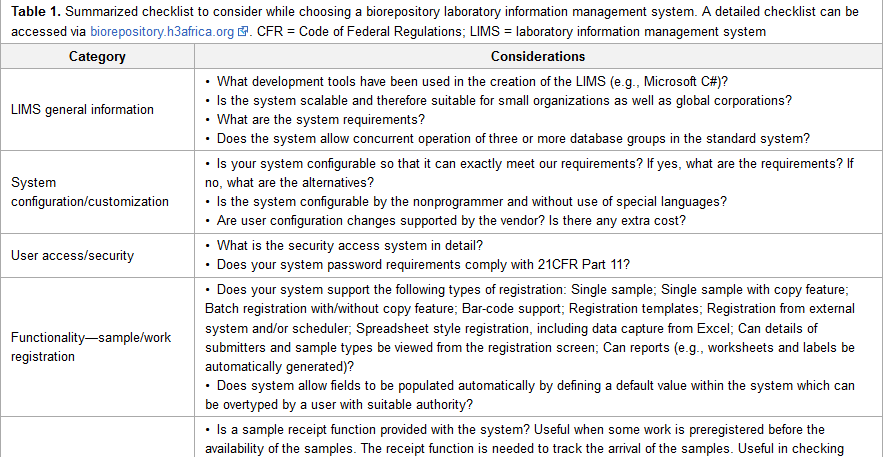

Biorepositories in Africa need significant infrastructural support to meet International Society for Biological and Environmental Repositories (ISBER) Best Practices to support population-based genomics research. ISBER recommends a biorepository information management system that can manage workflows from biospecimen receipt to distribution. The H3Africa Initiative set out to develop regional African biorepositories, and sites in Uganda, Nigeria, and South Africa were awarded grants to develop state-of-the-art biorepositories. The biorepositories carried out an elaborate process to evaluate and choose a laboratory information management system (LIMS) with the aim of integrating the three geographically distinct sites. In this article, we review the processes, African experience, and lessons learned, and we make recommendations for choosing a biorepository LIMS in the African context. (Full article...)

Featured article of the week: July 3–9:

"Baobab Laboratory Information Management System: Development of an open-source laboratory information management system for biobanking"

A laboratory information management system (LIMS) is central to the informatics infrastructure that underlies biobanking activities. To date, a wide range of commercial and open-source LIMSs are available, and the decision to opt for one LIMS over another is often influenced by the needs of the biobank clients and researchers, as well as available financial resources. The Baobab LIMS was developed by customizing the Bika LIMS software to meet the requirements of biobanking best practices. The need to implement biobank standard operating procedures, as well as to stimulate the use of standards for biobank data representation, motivated the implementation of Baobab LIMS, an open-source LIMS for biobanking. Baobab LIMS comprises modules for biospecimen kit assembly, shipping of biospecimen kits, storage management, analysis requests, reporting, and invoicing. The Baobab LIMS is based on the Plone web content management framework. All the system requirements for Plone are applicable to Baobab LIMS, including the need for a server with at least 8 GB RAM and 120 GB hard disk space. Baobab LIMS is a client-server system: end users access it securely over the internet through a standard web browser, which eliminates the need for standalone installations on every machine. (Full article...)

Featured article of the week: June 19–25:

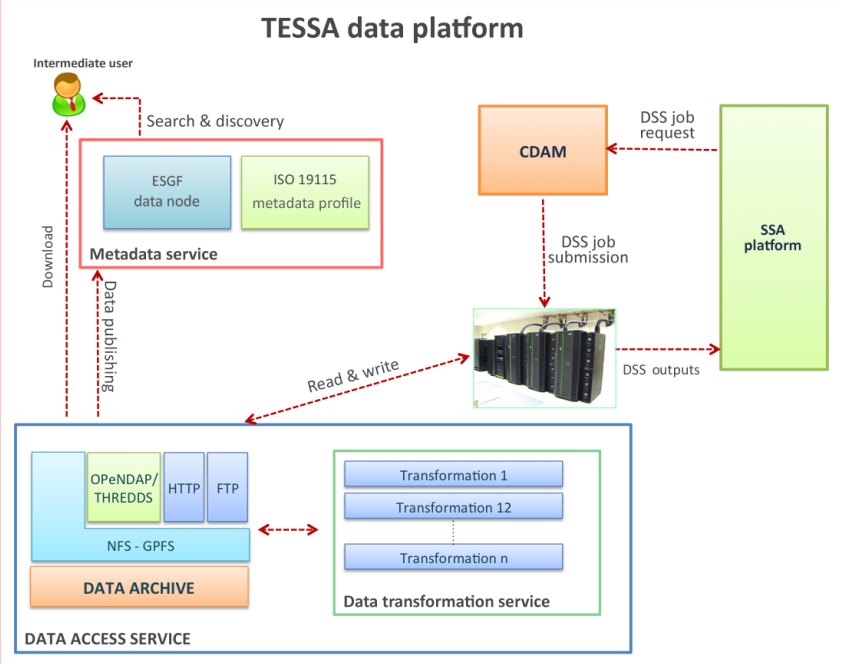

"A multi-service data management platform for scientific oceanographic products"

An efficient, secure, and interoperable data platform solution has been developed in the TESSA project to provide fast navigation of and access to the data stored in the data archive, as well as standards-based metadata management support. The platform mainly targets scientific users and situational sea awareness high-level services such as decision support systems (DSS). The archived datasets are accessible through three main components: the Data Access Service (DAS), the Metadata Service, and the Complex Data Analysis Module (CDAM). The DAS provides access to data stored in the archive through interfaces for different protocols and services for downloading, variable selection, data subsetting, and map generation. The Metadata Service is the heart of the information system for TESSA products and completes the overall infrastructure for data and metadata management. This component enables data search and discovery and addresses interoperability by exploiting widely adopted standards for geospatial data. Finally, the CDAM is the back end of the TESSA DSS, performing complex data analysis tasks on demand. (Full article...)
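
Variable selection and spatial subsetting, two of the DAS operations listed above, amount to picking named variables and the grid indices that fall inside a bounding box. The sketch below assumes a hypothetical in-memory `Grid` on a regular latitude/longitude mesh; it is not the DAS interface, which the abstract says is exposed through standard protocols.

```python
from dataclasses import dataclass


@dataclass
class Grid:
    lats: list[float]                          # latitudes (degrees)
    lons: list[float]                          # longitudes (degrees)
    variables: dict[str, list[list[float]]]    # name -> values indexed [lat][lon]


def subset(grid: Grid, names: list[str], lat_range, lon_range) -> Grid:
    """Keep only the requested variables and the points inside the bounding box."""
    lat_idx = [i for i, v in enumerate(grid.lats) if lat_range[0] <= v <= lat_range[1]]
    lon_idx = [j for j, v in enumerate(grid.lons) if lon_range[0] <= v <= lon_range[1]]
    return Grid(
        lats=[grid.lats[i] for i in lat_idx],
        lons=[grid.lons[j] for j in lon_idx],
        variables={n: [[grid.variables[n][i][j] for j in lon_idx] for i in lat_idx]
                   for n in names},
    )
```

Subsetting on the server side like this means clients download only the region and variables they asked for.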

Featured article of the week: June 26–July 2:

"The FAIR Guiding Principles for scientific data management and stewardship"

There is an urgent need to improve the infrastructure supporting the reuse of scholarly data. A diverse set of stakeholders — representing academia, industry, funding agencies, and scholarly publishers — have come together to design and jointly endorse a concise and measurable set of principles that we refer to as the FAIR Data Principles. The intent is that these may act as a guideline for those wishing to enhance the reusability of their data holdings. Distinct from peer initiatives that focus on the human scholar, the FAIR Principles put specific emphasis on enhancing the ability of machines to automatically find and use the data, in addition to supporting its reuse by individuals. This comment article represents the first formal publication of the FAIR Principles, and it includes the rationale behind them as well as some exemplar implementations in the community. (Full article...)
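
Because the FAIR Principles emphasize machine actionability, a repository might run automated checks over its metadata records. The function below is a crude, hypothetical illustration loosely organized around the four facets; the record keys, the DOI pattern, and the list of "open" formats are assumptions, not an official FAIR metric.

```python
import re

DOI_PATTERN = re.compile(r"^10\.\d{4,9}/\S+$")
OPEN_FORMATS = {"text/csv", "application/json", "application/x-netcdf"}


def fair_checks(record: dict) -> dict:
    """Crude, hypothetical machine checks loosely inspired by the four FAIR facets."""
    return {
        # Findable: a persistent identifier plus some descriptive keywords.
        "findable": bool(DOI_PATTERN.match(record.get("identifier", "")))
                    and bool(record.get("keywords")),
        # Accessible: retrievable over a standard, open protocol.
        "accessible": record.get("access_url", "").startswith("https://"),
        # Interoperable: an open, widely used format.
        "interoperable": record.get("format") in OPEN_FORMATS,
        # Reusable: an explicit license.
        "reusable": bool(record.get("license")),
    }
```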


Featured article of the week: June 12–18:

"MASTR-MS: A web-based collaborative laboratory information management system (LIMS) for metabolomics"

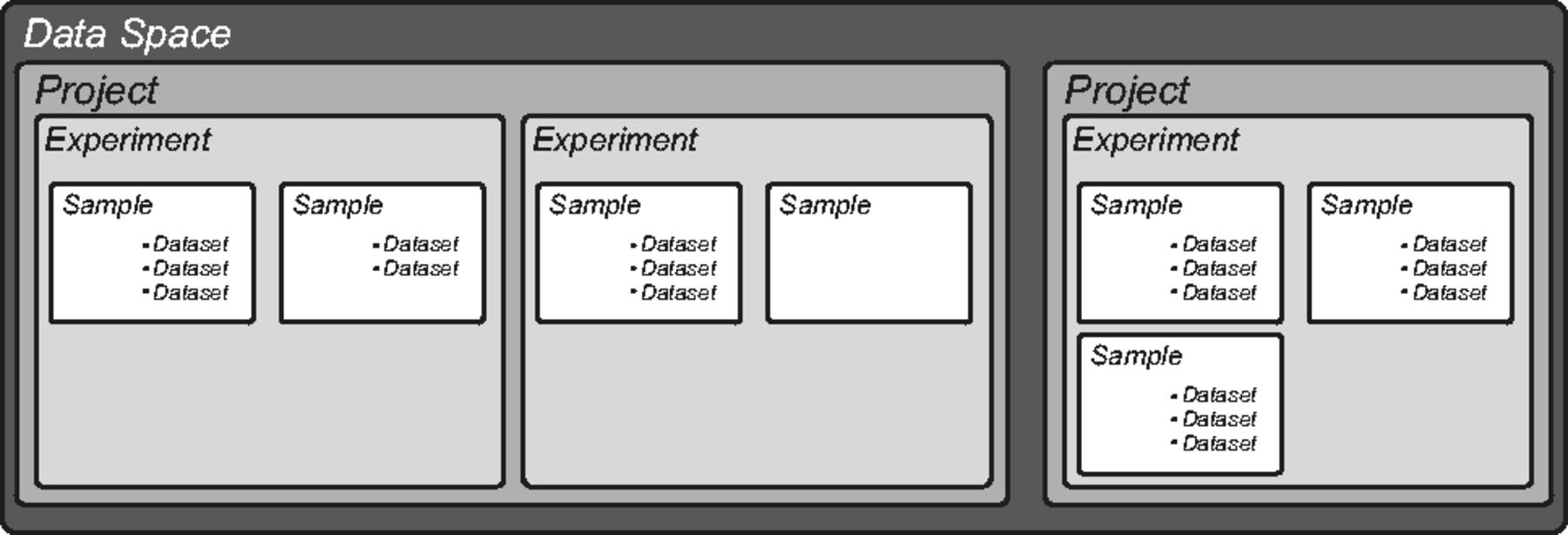

An increasing number of research laboratories and core analytical facilities around the world are developing high throughput metabolomic analytical and data processing pipelines that are capable of handling hundreds to thousands of individual samples per year, often over multiple projects, collaborations and sample types. At present, there are no laboratory information management systems (LIMS) that are specifically tailored for metabolomics laboratories that are capable of tracking samples and associated metadata from the beginning to the end of an experiment, including data processing and archiving, and which are also suitable for use in large institutional core facilities or multi-laboratory consortia as well as single laboratory environments.

Here we present MASTR-MS, a downloadable and installable LIMS solution that can be deployed either within a single laboratory or used to link workflows across a multisite network. (Full article...)

Featured article of the week: June 5–11:

Featured article of the week: May 29–June 4:

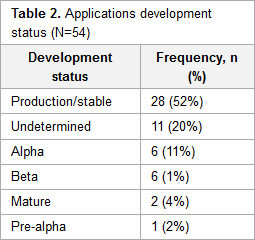

"The state of open-source electronic health record projects: A software anthropology study"

Electronic health records (EHR) are a key tool in managing and storing patients’ information. Currently, there are over 50 open-source EHR systems available. Functionality and usability are important factors for determining the success of any system. These factors are often a direct reflection of the domain knowledge and developers’ motivations. However, few published studies have focused on the characteristics of free and open-source software (F/OSS) EHR systems, and none to date have discussed the motivation, knowledge background, and demographic characteristics of the developers involved in open-source EHR projects.

This study analyzed the characteristics of prevailing F/OSS EHR systems and aimed to provide an understanding of the motivation, knowledge background, and characteristics of the developers. (Full article...)

Featured article of the week: May 22–28:

"PCM-SABRE: A platform for benchmarking and comparing outcome prediction methods in precision cancer medicine"

Numerous publications attempt to predict cancer survival outcome from gene expression data using machine-learning methods. A direct comparison of these works is challenging for the following reasons: (1) inconsistent measures used to evaluate the performance of different models, and (2) incomplete specification of critical stages in the process of knowledge discovery. There is a need for a platform that would allow researchers to replicate previous works and to test the impact of changes in the knowledge discovery process on the accuracy of the induced models.
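
One way to avoid the "inconsistent measures" problem described above is to score every survival model with the same, precisely defined metric. A common choice for survival outcome prediction is Harrell's concordance index, sketched below in plain Python. This is not PCM-SABRE's implementation (the platform was built in KNIME), and this simple variant ignores pairs with tied survival times.

```python
def concordance_index(times, events, risk_scores):
    """Harrell's C-index: share of comparable pairs whose risk order matches survival.

    A pair (i, j) is comparable when subject i had an observed event (events[i] truthy)
    before subject j's time. A higher risk score should mean shorter survival;
    tied risk scores count as half-concordant. Pairs with tied times are skipped.
    """
    concordant, comparable = 0.0, 0
    n = len(times)
    for i in range(n):
        for j in range(n):
            if events[i] and times[i] < times[j]:
                comparable += 1
                if risk_scores[i] > risk_scores[j]:
                    concordant += 1
                elif risk_scores[i] == risk_scores[j]:
                    concordant += 0.5
    if comparable == 0:
        raise ValueError("no comparable pairs")
    return concordant / comparable
```

A value of 1.0 means perfect ranking, and 0.5 is what random risk scores would achieve on average.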

We developed the PCM-SABRE platform, which supports the entire knowledge discovery process for cancer outcome analysis. PCM-SABRE was developed using KNIME. By using PCM-SABRE to reproduce the results of previously published works on breast cancer survival, we define a baseline for evaluating future attempts to predict cancer outcome with machine learning. (Full article...)

Featured article of the week: May 15–21:

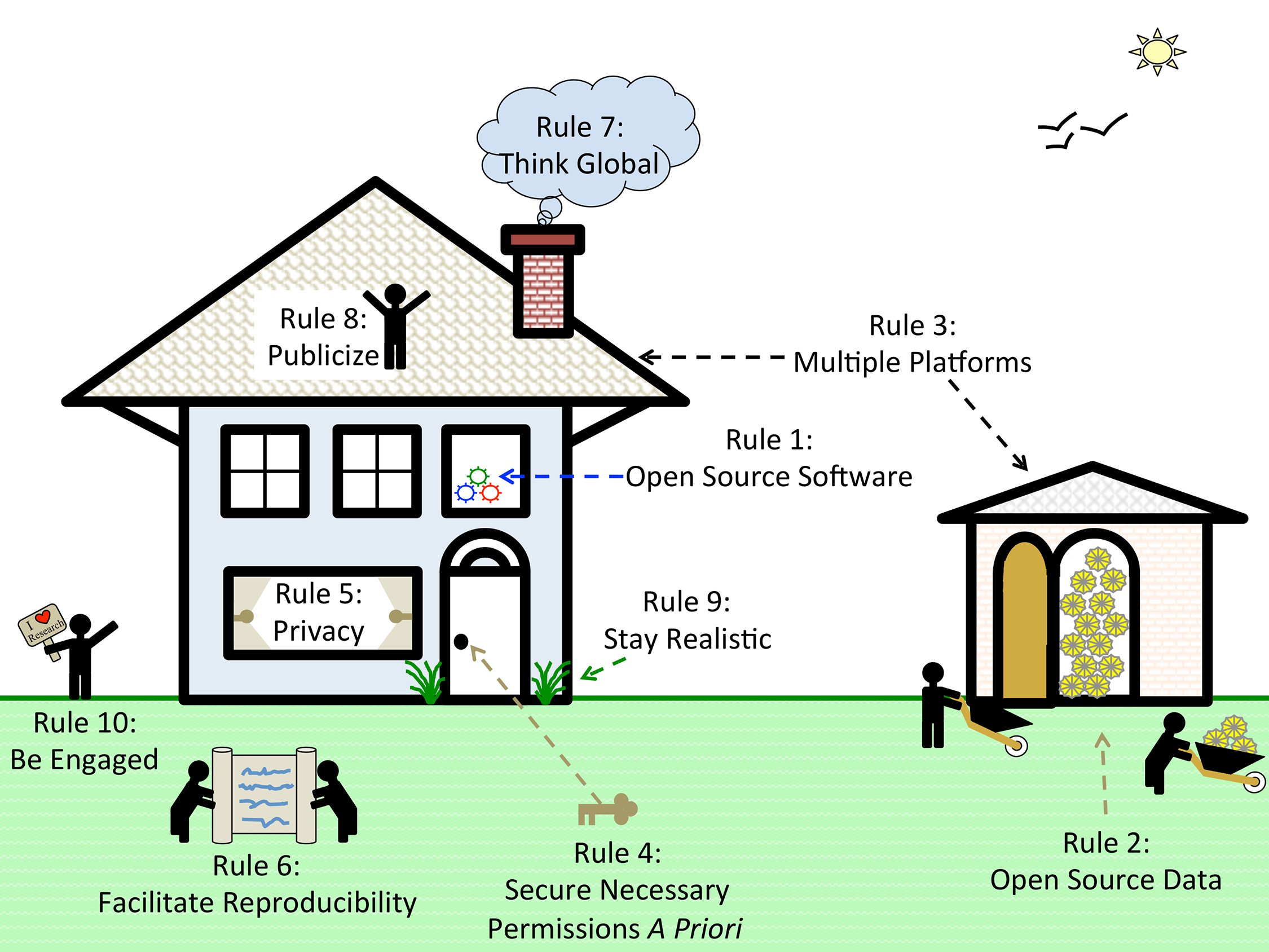

"Ten simple rules for cultivating open science and collaborative R&D"

How can we address the complexity and cost of applying science to societal challenges?

Open science and collaborative R&D may help. Open science has been described as "a research accelerator." Open science implies open access but goes beyond it: "Imagine a connected online web of scientific knowledge that integrates and connects data, computer code, chains of scientific reasoning, descriptions of open problems, and beyond ... tightly integrated with a scientific social web that directs scientists' attention where it is most valuable, releasing enormous collaborative potential."

Open science and collaborative approaches are often described as open-source, by analogy with open-source software such as the operating system Linux, which powers Google and Amazon: collaboratively created software that is free to use and adapt, and popular for internet infrastructure and scientific research. (Full article...)

Featured article of the week: May 8–14:

"Ten simple rules to enable multi-site collaborations through data sharing"

Open access, open data, and software are critical for advancing science and enabling collaboration across multiple institutions and throughout the world. Despite near-universal recognition of their importance, major barriers still exist to sharing raw data, software, and research products throughout the scientific community. Many of these barriers vary by specialty, making it more difficult for interdisciplinary and/or translational researchers to engage in collaborative research. Multi-site collaborations are vital for increasing both the impact and the generalizability of research results. However, they often present unique data sharing challenges. We discuss enabling multi-site collaborations through enhanced data sharing in this set of Ten Simple Rules. (Full article...)

Featured article of the week: May 1–7:

"Ten simple rules for developing usable software in computational biology"

The rise of high-throughput technologies in molecular biology has led to a massive amount of publicly available data. While computational method development has been a cornerstone of biomedical research for decades, the rapid technological progress in the wet laboratory makes it difficult for software development to keep pace. Wet lab scientists rely heavily on computational methods, especially since more research is now performed in silico. However, suitable tools do not always exist, and not everyone has the skills to write complex software. Computational biologists are required to close this gap, but they often lack formal training in software engineering. To alleviate this, several related challenges have been previously addressed in the Ten Simple Rules series, including reproducibility, effectiveness, and open-source development of software. (Full article...)

Featured article of the week: April 24–30:

"The effect of the General Data Protection Regulation on medical research"

The enactment of the General Data Protection Regulation (GDPR) will impact on European data science. Particular concerns relating to consent requirements that would severely restrict medical data research have been raised. Our objective is to explain the changes in data protection laws that apply to medical research and to discuss their potential impact ... The GDPR makes the classification of pseudonymised data as personal data clearer, although it has not been entirely resolved. Biomedical research on personal data where consent has not been obtained must be of substantial public interest. [We conclude] [t]he GDPR introduces protections for data subjects that aim for consistency across the E.U. The proposed changes will make little impact on biomedical data research. (Full article...)
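
Pseudonymisation, whose classification as personal data the abstract discusses, typically replaces direct identifiers with values that cannot be linked back to a person without separately held information. A common technique is a keyed hash, sketched below with Python's standard `hmac` module. The identifier format and key are hypothetical, and the sketch illustrates why such data stay personal data: whoever holds the key can recompute pseudonyms for known identifiers and re-link the records.

```python
import hashlib
import hmac


def pseudonymise(identifier: str, secret_key: bytes) -> str:
    """Replace a direct identifier with a keyed hash (HMAC-SHA256).

    The same identifier always maps to the same pseudonym, so records can still be
    linked across datasets. Anyone holding secret_key can recompute pseudonyms for
    known identifiers, so the output is pseudonymised rather than anonymous.
    """
    return hmac.new(secret_key, identifier.encode("utf-8"), hashlib.sha256).hexdigest()
```

Keeping the key separate from the research dataset is what limits re-identification to the key holder.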

Featured article of the week: April 17–23:

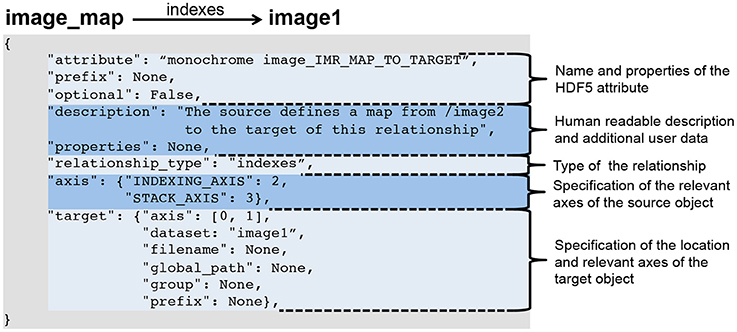

"Methods for specifying scientific data standards and modeling relationships with applications to neuroscience"

Neuroscience continues to experience tremendous growth in data: in the volume and variety of data, the velocity at which data is acquired, and, in turn, the veracity of data. These challenges are a serious impediment to sharing of data, analyses, and tools within and across labs. Here, we introduce BRAINformat, a novel data standardization framework for the design and management of scientific data formats. The BRAINformat library defines application-independent design concepts and modules that together create a general framework for standardization of scientific data. We describe the formal specification of scientific data standards, which facilitates sharing and verification of data and formats. We introduce the concept of "managed objects," enabling semantic components of data formats to be specified as self-contained units, supporting modular and reusable design of data format components and file storage. We also introduce the novel concept of "relationship attributes" for modeling and use of semantic relationships between data objects. Based on these concepts, we demonstrate the application of our framework to design and implement a standard format for electrophysiology data and show how data standardization and relationship modeling facilitate data analysis and sharing. (Full article...)

Featured article of the week: April 10–16:

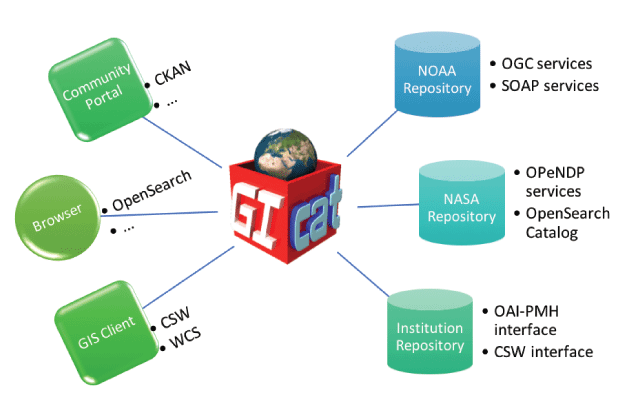

"Data and metadata brokering – Theory and practice from the BCube Project"

EarthCube is a U.S. National Science Foundation initiative that aims to create a cyberinfrastructure (CI) for all the geosciences. An initial set of "building blocks" was funded to develop potential components of that CI. The Brokering Building Block (BCube) created a brokering framework to demonstrate cross-disciplinary data access based on a set of use cases developed by scientists from the domains of hydrology, oceanography, polar science and climate/weather. While some successes were achieved, considerable challenges were encountered. We present a synopsis of the processes and outcomes of the BCube experiment. (Full article...)

Featured article of the week: April 3–9:

"A metadata-driven approach to data repository design"

The design and use of a metadata-driven data repository for research data management is described. Metadata is collected automatically during the submission process whenever possible and is registered with DataCite in accordance with their current metadata schema, in exchange for a persistent digital object identifier. Two examples of data preview are illustrated, including the demonstration of a method for integration with commercial software that confers rich domain-specific data analytics without introducing customization into the repository itself. (Full article...)
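
Collecting metadata automatically at submission and registering it with DataCite implies a mapping step from whatever the submission form captures onto the schema's mandatory properties (Identifier, Creator, Title, Publisher, PublicationYear, ResourceType). The sketch below shows such a mapping with a completeness check before registration. The submission keys are hypothetical, and the output keys are a simplified, DataCite-style dictionary rather than the exact XML or REST API payload.

```python
MANDATORY = ("identifier", "creators", "titles", "publisher",
             "publicationYear", "resourceTypeGeneral")


def build_datacite_record(submission: dict, doi: str) -> dict:
    """Map metadata captured at submission onto DataCite-style mandatory properties."""
    submitted = submission.get("submitted", "")        # ISO date, e.g. "2016-05-01"
    record = {
        "identifier": doi,                             # a DOI in DataCite's case
        "creators": [{"name": name} for name in submission.get("authors", [])],
        "titles": [{"title": submission["title"]}] if submission.get("title") else [],
        "publisher": submission.get("institution", ""),
        "publicationYear": submitted[:4],
        "resourceTypeGeneral": submission.get("resource_type", "Dataset"),
    }
    # Refuse to register records that would fail the schema's mandatory checks.
    missing = [key for key in MANDATORY if not record[key]]
    if missing:
        raise ValueError(f"cannot register, missing: {missing}")
    return record
```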

Featured article of the week: March 27–April 2:

"DGW: An exploratory data analysis tool for clustering and visualisation of epigenomic marks"

Functional genomic and epigenomic research relies fundamentally on sequencing-based methods like ChIP-seq for the detection of DNA-protein interactions. These techniques return large, high-dimensional data sets with visually complex structures, such as multi-modal peaks extended over large genomic regions. Current tools for visualisation and data exploration represent and leverage these complex features only to a limited extent.

We present DGW (Dynamic Gene Warping), an open-source software package for simultaneous alignment and clustering of multiple epigenomic marks. DGW uses dynamic time warping to adaptively rescale and align genomic distances, which makes it possible to group regions of interest with similar shapes, thereby capturing the structure of epigenomic marks. We demonstrate the effectiveness of the approach in a simulation study and on a real epigenomic data set from the ENCODE project. (Full article...)
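
The core of dynamic time warping is a dynamic-programming recurrence: each cell holds the cheapest cost of aligning the two prefixes, allowing either profile to "stretch" by reusing a point. The sketch below is the textbook one-dimensional version applied to two read-density profiles; it is not DGW's code, which additionally handles multiple marks and clustering.

```python
import math


def dtw_distance(a, b):
    """Classic dynamic time warping distance between two 1-D profiles."""
    n, m = len(a), len(b)
    # cost[i][j] = cheapest alignment of a[:i] with b[:j]
    cost = [[math.inf] * (m + 1) for _ in range(n + 1)]
    cost[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            d = abs(a[i - 1] - b[j - 1])
            cost[i][j] = d + min(cost[i - 1][j],      # b[j-1] reused for another a point
                                 cost[i][j - 1],      # a[i-1] reused for another b point
                                 cost[i - 1][j - 1])  # both advance
    return cost[n][m]
```

For example, a peak `[0, 1, 2, 1, 0]` and the same peak shifted and widened, `[0, 0, 1, 2, 1, 0]`, have a DTW distance of 0, even though a point-by-point comparison is not even defined for profiles of different lengths. A matrix of such pairwise distances could then feed a standard clustering method.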

Featured article of the week: March 20–26:

"SCIFIO: An extensible framework to support scientific image formats"

No gold standard exists in the world of scientific image acquisition; a proliferation of instruments each with its own proprietary data format has made out-of-the-box sharing of that data nearly impossible. In the field of light microscopy, the Bio-Formats library was designed to translate such proprietary data formats to a common, open-source schema, enabling sharing and reproduction of scientific results. While Bio-Formats has proved successful for microscopy images, the greater scientific community was lacking a domain-independent framework for format translation.

SCIFIO (SCientific Image Format Input and Output) is presented as a freely available, open-source library unifying the mechanisms of reading and writing image data. The core of SCIFIO is its modular definition of formats, the design of which clearly outlines the components of image I/O to encourage extensibility, facilitated by the dynamic discovery of the SciJava plugin framework. SCIFIO is structured to support coexistence of multiple domain-specific open exchange formats, such as Bio-Formats’ OME-TIFF, within a unified environment. (Full article...)
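
The idea of a modular format definition plus dynamic discovery can be illustrated with a small registry: each format bundles its own "checker" and "reader" components, and the library dispatches to the first format that claims a file. Everything below (`Format`, `register`, `open_image`, the magic-byte test) is a hypothetical Python sketch of the pattern; SCIFIO itself is a Java library built on the SciJava plugin framework and has its own API.

```python
from typing import Callable


class Format:
    """Hypothetical format definition with separate checker and reader components."""

    def __init__(self, name: str, suffixes: tuple[str, ...], magic: bytes,
                 reader: Callable[[bytes], dict]):
        self.name = name
        self.suffixes = suffixes      # file extensions this format claims
        self.magic = magic            # non-empty leading bytes that identify the format
        self.reader = reader          # turns raw bytes into image data plus metadata

    def can_read(self, filename: str, header: bytes) -> bool:
        # "Checker" role: a cheap test before any real parsing happens.
        return filename.lower().endswith(self.suffixes) or header.startswith(self.magic)


REGISTRY: list[Format] = []


def register(fmt: Format) -> Format:
    """Stand-in for plugin discovery: each format module registers itself."""
    REGISTRY.append(fmt)
    return fmt


def open_image(filename: str, data: bytes) -> dict:
    """Dispatch to the first registered format whose checker accepts the file."""
    for fmt in REGISTRY:
        if fmt.can_read(filename, data[:16]):
            return fmt.reader(data)
    raise ValueError(f"no registered format can read {filename!r}")
```

Adding support for a new domain-specific format then means registering one more `Format` rather than changing `open_image`.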

Featured article of the week: March 13–19:

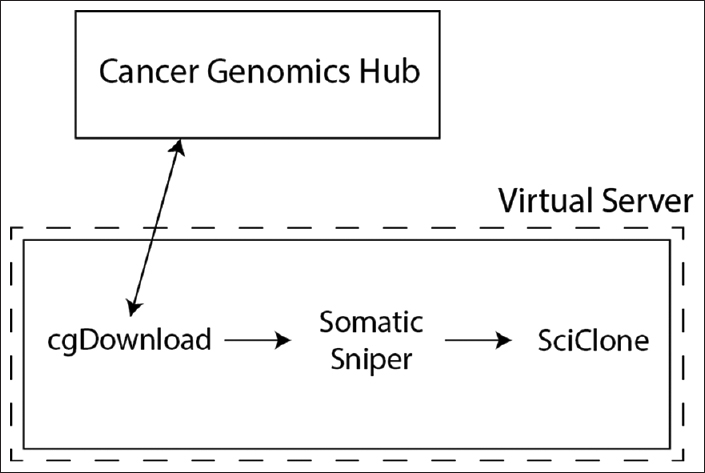

"Use of application containers and workflows for genomic data analysis"

The rapid acquisition of biological data and development of computationally intensive analyses has led to a need for novel approaches to software deployment. In particular, the complexity of common analytic tools for genomics makes them difficult to deploy and decreases the reproducibility of computational experiments. Recent technologies that allow for application virtualization, such as Docker, allow developers and bioinformaticians to isolate these applications and deploy secure, scalable platforms that have the potential to dramatically increase the efficiency of big data processing. While limitations exist, this study demonstrates a successful implementation of a pipeline with several discrete software applications for the analysis of next-generation sequencing (NGS) data. (Full article...)

Featured article of the week: March 6–12:

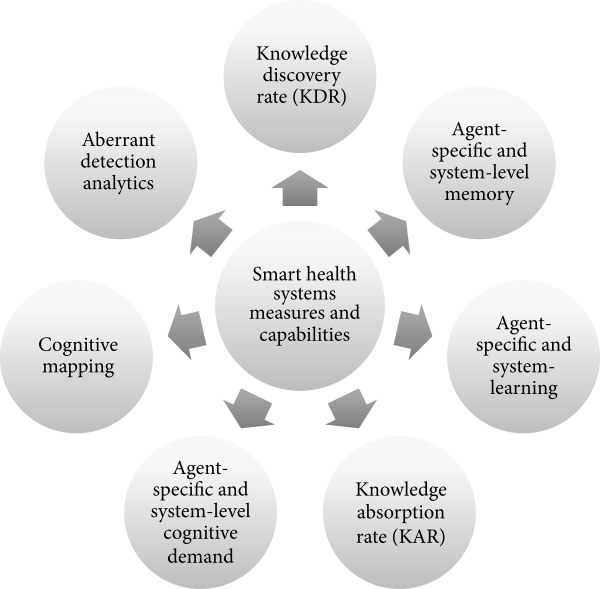

"Informatics metrics and measures for a smart public health systems approach: Information science perspective"

Public health informatics is an evolving domain in which practices constantly change to meet the demands of a highly complex public health and healthcare delivery system. Given the emergence of various concepts, such as learning health systems, smart health systems, and adaptive complex health systems, health informatics professionals would benefit from a common set of measures and capabilities to inform our modeling, measuring, and managing of health system “smartness.” Here, we introduce the concepts of organizational complexity, problem/issue complexity, and situational awareness as three codependent drivers of smart public health systems characteristics. We also propose seven smart public health systems measures and capabilities that are important in a public health informatics professional’s toolkit. (Full article...)

Featured article of the week: February 27–March 5:

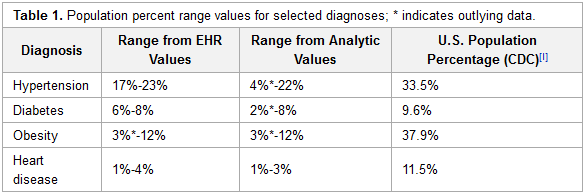

"Deployment of analytics into the healthcare safety net: Lessons learned"

As payment reforms shift healthcare reimbursement toward value-based payment programs, providers need the capability to work with data of greater complexity, scope and scale. This will in many instances necessitate a change in understanding of the value of data and the types of data needed for analysis to support operations and clinical practice. It will also require the deployment of different infrastructure and analytic tools. Community health centers (CHCs), which serve more than 25 million people and together form the nation’s largest single source of primary care for medically underserved communities and populations, are expanding and will need to optimize their capacity to leverage data as new payer and organizational models emerge.

To better understand existing capacity and help organizations plan for the strategic and expanded uses of data, a project was initiated that deployed contemporary, Hadoop-based, analytic technology into several multi-site CHCs and a primary care association (PCA) with an affiliated data warehouse supporting health centers across the state. (Full article...)

Featured article of the week: February 20–26:

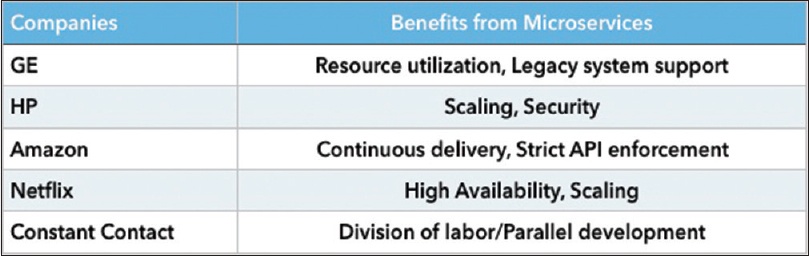

"The growing need for microservices in bioinformatics"

Within the information technology (IT) industry, best practices and standards are constantly evolving and being refined. In contrast, computer technology utilized within the healthcare industry often evolves at a glacial pace, with reduced opportunities for justified innovation. Although the use of timely technology refreshes within an enterprise's overall technology stack can be costly, thoughtful adoption of select technologies with a demonstrated return on investment can be very effective in increasing productivity and at the same time, reducing the burden of maintenance often associated with older and legacy systems.

In this brief technical communication, we introduce the concept of microservices as applied to the ecosystem of data analysis pipelines. Microservice architecture is a framework for dividing complex systems into easily managed parts. Each individual service is limited in functional scope, thereby conferring a higher measure of functional isolation and reliability to the collective solution. Moreover, maintenance challenges are greatly simplified by virtue of the reduced architectural complexity of each constituent module. Nevertheless, overall solutions built with a microservices-based approach provide equal or greater levels of functionality compared with conventional programming approaches. Bioinformatics, with its ever-increasing demand for performance and new testing algorithms, is the perfect use case for such a solution. (Full article...)

Featured article of the week: February 13–19:

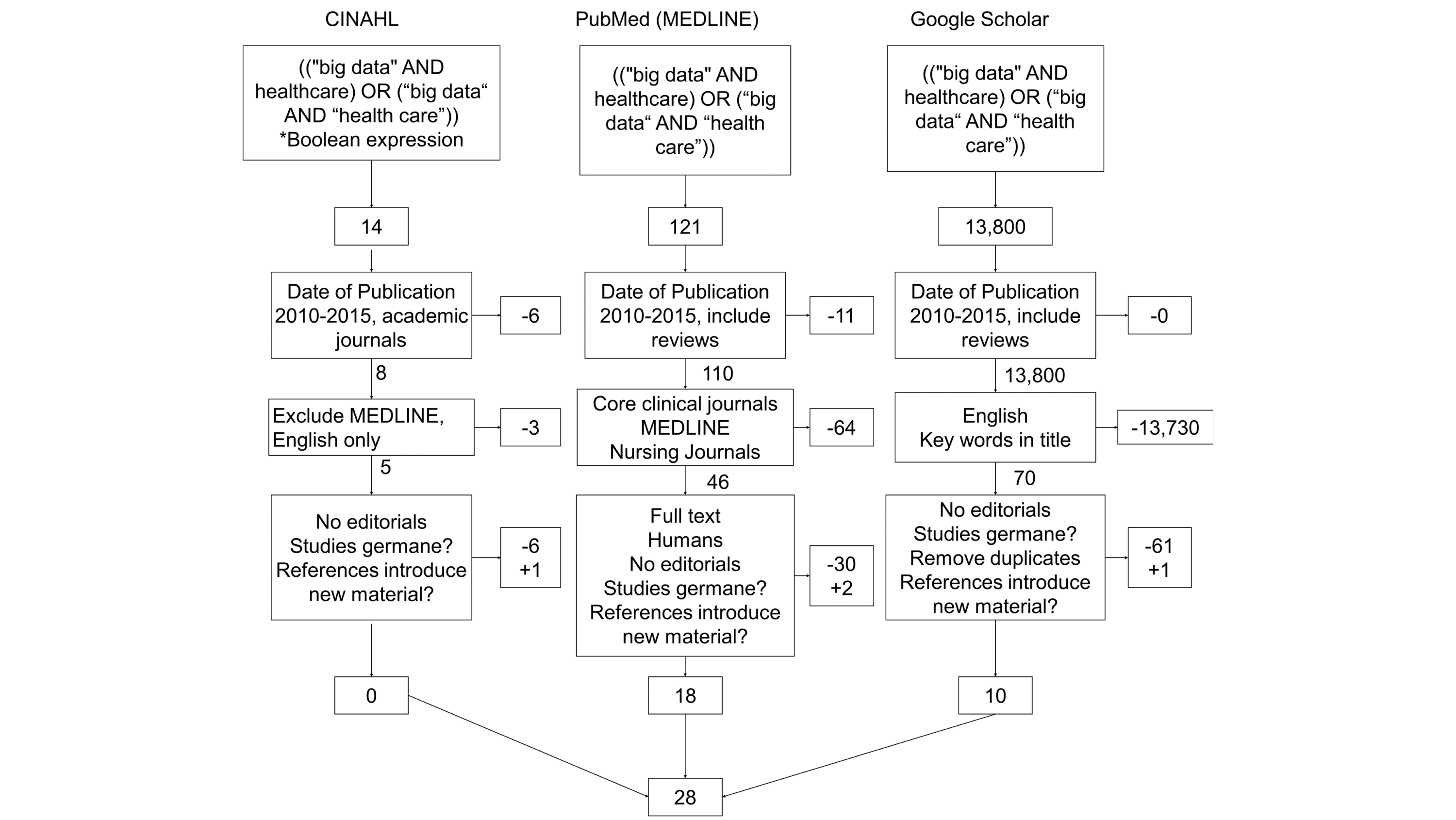

"Challenges and opportunities of big data in health care: A systematic review"

Big data analytics offers promise in many business sectors, and health care is looking at big data to provide answers to many age-related issues, particularly dementia and chronic disease management. The purpose of this review was to summarize the challenges faced by big data analytics and the opportunities that big data opens in health care. A total of three searches were performed for publications between January 1, 2010 and January 1, 2016 (PubMed/MEDLINE, CINAHL, and Google Scholar), and an assessment was made on content germane to big data in health care. From the results of the searches in research databases and Google Scholar (N=28), the authors summarized content and identified nine and 14 themes under the categories "Challenges" and "Opportunities," respectively. We rank-ordered and analyzed the themes based on the frequency of occurrence. (Full article...)

Featured article of the week: February 6–12:

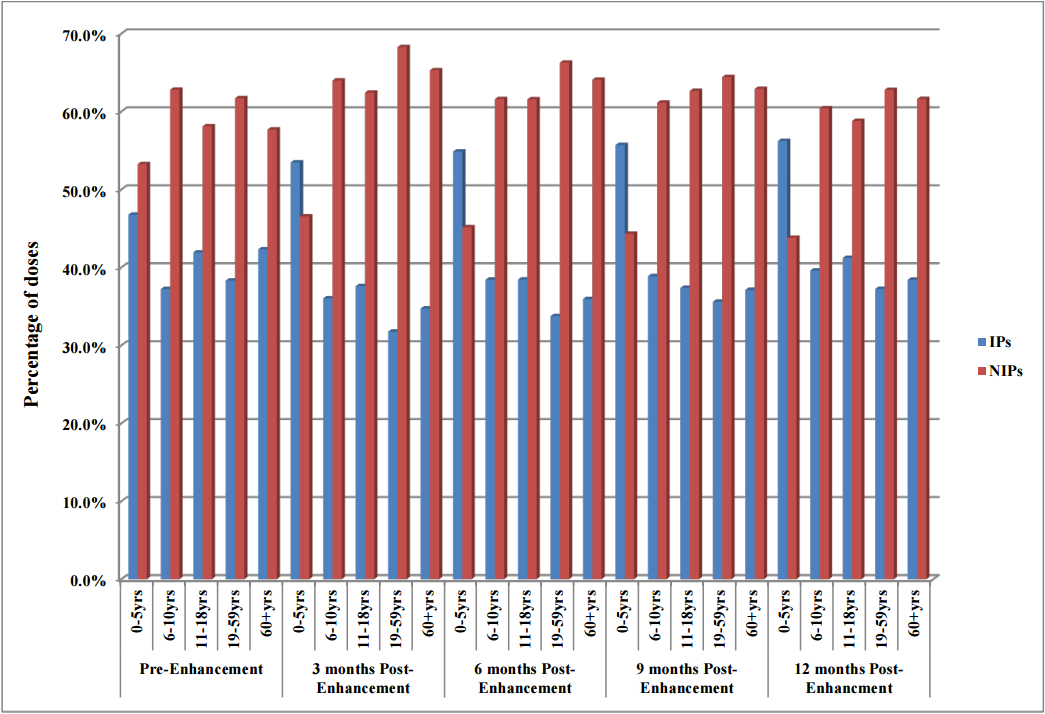

"The impact of electronic health record (EHR) interoperability on immunization information system (IIS) data quality"

Objectives: To evaluate the impact of electronic health record (EHR) interoperability on the quality of immunization data in the North Dakota Immunization Information System (NDIIS).

NDIIS "doses administered" data were evaluated for completeness of the patient- and dose-level core data elements for records belonging to interoperable and non-interoperable providers. Data from three months before the EHR interoperability enhancement were compared with data from three, six, nine, and 12 months after the interoperability go-live date. Doses administered per month and by age group, timeliness of vaccine entry, and the number of duplicate clients added to the NDIIS were also compared, in addition to immunization rates for children 19–35 months of age and adolescents 11–18 years of age. (Full article...)
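
The completeness measure described above can be computed as the share of dose records that carry a non-empty value for each core data element, evaluated separately for interoperable and non-interoperable providers. The element names below are hypothetical placeholders; the abstract does not list NDIIS's actual core data elements.

```python
# Hypothetical core data elements; the real NDIIS list is not given in the abstract.
CORE_ELEMENTS = ("first_name", "last_name", "birth_date", "vaccine_code", "administration_date")


def completeness(records: list[dict], elements=CORE_ELEMENTS) -> dict[str, float]:
    """Percentage of dose records with a non-empty value for each core data element."""
    if not records:
        raise ValueError("no records to evaluate")
    return {
        element: 100.0 * sum(1 for r in records if r.get(element) not in (None, "")) / len(records)
        for element in elements
    }
```

Running it on each provider group at each time point (before go-live, then three, six, nine, and 12 months after) yields the comparison the study describes.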

Featured article of the week: January 30–February 5:

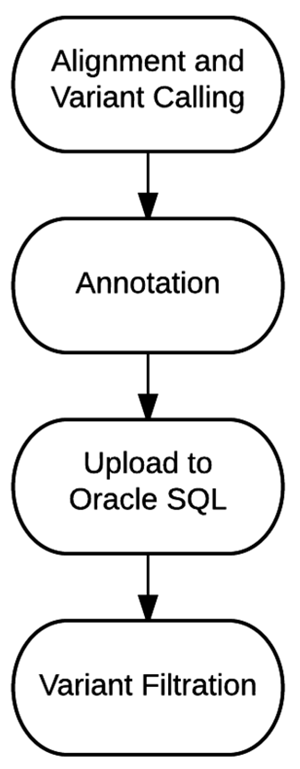

"Bioinformatics workflow for clinical whole genome sequencing at Partners HealthCare Personalized Medicine"

Effective implementation of precision medicine will be enhanced by a thorough understanding of each patient’s genetic composition to better treat his or her presenting symptoms or mitigate the onset of disease. This ideally includes the sequence information of a complete genome for each individual. At Partners HealthCare Personalized Medicine, we have developed a clinical process for whole genome sequencing (WGS) with application in both healthy individuals and those with disease. In this manuscript, we will describe our bioinformatics strategy to efficiently process and deliver genomic data to geneticists for clinical interpretation. We describe the handling of data from FASTQ to the final variant list for clinical review for the final report. We will also discuss our methodology for validating this workflow and the cost implications of running WGS. (Full article...)
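
The workflow above starts from FASTQ files, in which each read is stored as four lines: an `@` header, the base sequence, a `+` separator, and a per-base quality string. The parser below is a minimal sketch of that first step, assuming Phred+33 quality encoding and unwrapped sequences; it is not the Partners HealthCare pipeline, which the abstract does not detail.

```python
def read_fastq(text: str):
    """Yield (read_id, sequence, phred_scores) from four-line FASTQ records.

    Assumes Phred+33 quality encoding and single-line (unwrapped) sequences.
    """
    lines = [line for line in text.splitlines() if line.strip()]
    if len(lines) % 4:
        raise ValueError("truncated FASTQ: line count is not a multiple of 4")
    for k in range(0, len(lines), 4):
        header, seq, plus, qual = lines[k:k + 4]
        if (not header.startswith("@") or len(header) < 2
                or not plus.startswith("+") or len(seq) != len(qual)):
            raise ValueError(f"malformed record number {k // 4 + 1}")
        read_id = header[1:].split()[0]          # drop the '@' and any description
        yield read_id, seq, [ord(c) - 33 for c in qual]
```

For example, a quality character `I` decodes to Phred 40 and `#` to Phred 2. Production pipelines would normally rely on established, validated tools for this step.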

Featured article of the week: January 23–29:

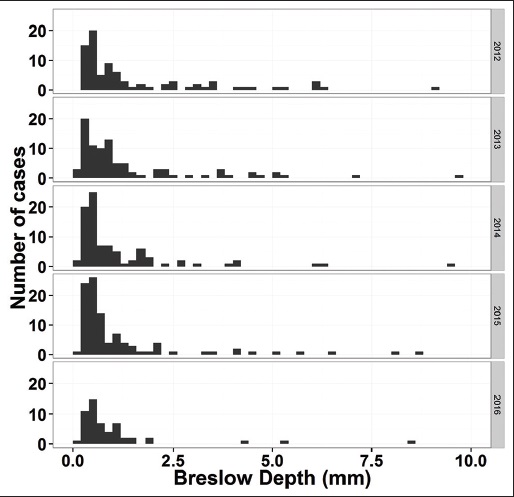

"Pathology report data extraction from relational database using R, with extraction from reports on melanoma of skin as an example"

Featured article of the week: January 16–22:

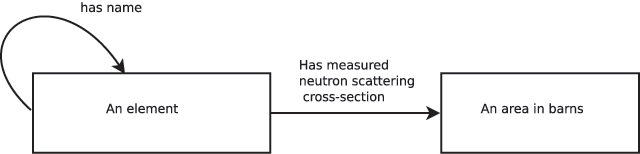

"A robust, format-agnostic scientific data transfer framework"

The olog approach of Spivak and Kent is applied to the practical development of data transfer frameworks, yielding simple rules for construction and assessment of data transfer standards. The simplicity, extensibility and modularity of such descriptions allows discipline experts unfamiliar with complex ontological constructs or toolsets to synthesize multiple pre-existing standards, potentially including a variety of file formats, into a single overarching ontology. These ontologies nevertheless capture all scientifically-relevant prior knowledge, and when expressed in machine-readable form are sufficiently expressive to mediate translation between legacy and modern data formats. A format-independent programming interface informed by this ontology consists of six functions, of which only two handle data. Demonstration software implementing this interface is used to translate between two common diffraction image formats using such an ontology in place of an intermediate format. (Full article...)

Featured article of the week: January 9–15:

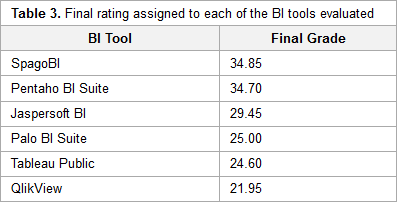

"A benchmarking analysis of open-source business intelligence tools in healthcare environments"

In recent years, a wide range of business intelligence (BI) technologies have been applied to different areas in order to support the decision-making process. BI enables the extraction of knowledge from the data stored. The healthcare industry is no exception, and so BI applications have been under investigation across multiple units of different institutions. Thus, in this article, we intend to analyze some open-source/free BI tools on the market and their applicability in the clinical sphere, taking into consideration the general characteristics of the clinical environment. For this purpose, six BI tools were selected, analyzed, and tested in a practical environment. Then, a comparison metric and a ranking were defined for the tested applications in order to choose the one that best applies to the extraction of useful knowledge and clinical data in a healthcare environment. Finally, a pervasive BI platform was developed using a real case in order to prove the tool's viability. (Full article...)
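
A comparison metric and ranking of the kind described above is often a weighted mean of per-criterion scores. The sketch below assumes that approach; the criteria, weights, and tool names in any usage are hypothetical, and the article's own metric may be defined differently.

```python
def rank_tools(scores: dict[str, dict[str, float]], weights: dict[str, float]):
    """Rank tools by the weighted mean of their per-criterion scores (higher is better)."""
    total_weight = sum(weights.values())
    totals = {
        tool: sum(weights[c] * per_criterion[c] for c in weights) / total_weight
        for tool, per_criterion in scores.items()
    }
    return sorted(totals.items(), key=lambda item: item[1], reverse=True)
```

For instance, with hypothetical weights `{"usability": 2, "cost": 1}`, a tool scoring 4 on usability and 5 on cost (weighted mean of about 4.33) ranks above one scoring 5 and 2 (weighted mean 4.0). Making the weights explicit documents which clinical priorities drove the ranking.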

Featured article of the week: January 2–8:
