Difference between revisions of "Journal:Expert search strategies: The information retrieval practices of healthcare information professionals"

Shawndouglas (talk | contribs) (Saving and adding more.) |


===Evaluation of PubMed===

An evaluation of the PubMed search system was performed using online documentation<ref name="PMTutorial">{{cite web |url=https://www.nlm.nih.gov/bsd/disted/pubmedtutorial/cover.html |title=PubMed Tutorial |publisher=U.S. National Library of Medicine |accessdate=05 March 2017}}</ref>, best practice advice<ref name="ChapmanAdvanced09">{{cite journal |title=Advanced search features of PubMed |journal=Journal of the Canadian Academy of Child and Adolescent Psychiatry |author=Chapman, D. |volume=18 |issue=1 |pages=58–9 |year=2009 |pmid=19270851 |pmc=PMC2651214}}</ref>, and direct testing of the interface using Boolean commands. In addition to the search portal, users can register to My NCBI, which provides additional functionality for saving search queries, managing results sets, and customizing filters, so this was included in the comparison. The mobile version of PubMed, PubMed Mobile<ref name="PMMobile">{{cite web |url=https://www.ncbi.nlm.nih.gov/m/pubmed/ |title=Welcome to PubMed Mobile |publisher=U.S. National Library of Medicine |accessdate=05 March 2017}}</ref>, does not offer extended functionality, so it was not considered in the evaluation. Although beyond the scope of this study, information seeking by healthcare practitioners on hand-held devices has been shown to save time and improve the early learning of new developments.<ref name="MickanEvidence13">{{cite journal |title=Evidence of effectiveness of health care professionals using handheld computers: A scoping review of systematic reviews |journal=Journal of Medical Internet Research |author=Mickan, S.; Tilson, J.K.; Atherton, H. et al. |volume=15 |issue=10 |pages=e212 |year=2013 |doi=10.2196/jmir.2530 |pmid=24165786 |pmc=PMC3841346}}</ref>

==Results== | |||

===Demographics=== | |||

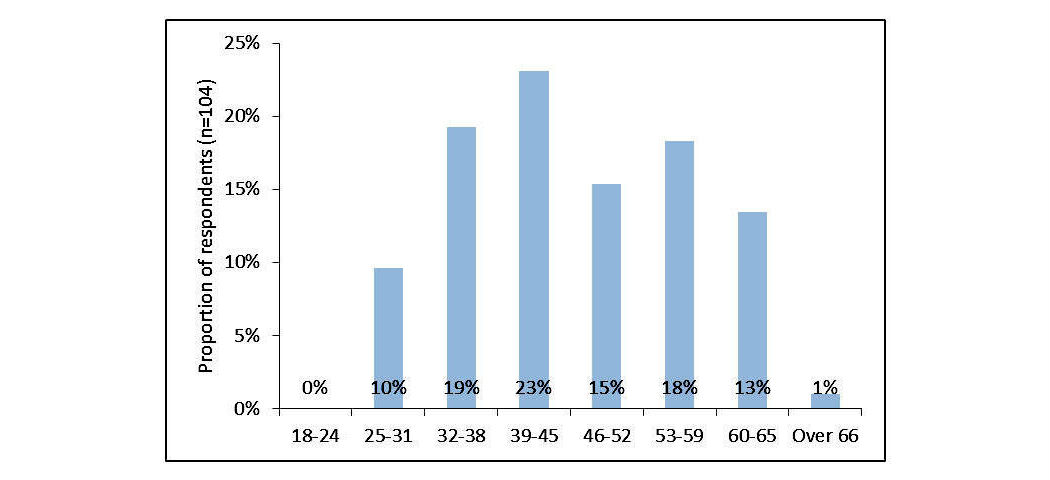

Of the respondents, 89.3% (92/103) were female. Their ages were distributed bi-modally, with peaks at 39 to 45 and 53 to 59, with a conflated average age of 46.0 (SD 10.9, N=104) (Figure 1). | |||

[[File:Fig1 Russell-Rose JMIRMedInfo2017 5-4.jpg|800px]] | |||

{{clear}} | |||

{| | |||

| STYLE="vertical-align:top;"| | |||

{| border="0" cellpadding="5" cellspacing="0" width="800px" | |||

|- | |||

| style="background-color:white; padding-left:10px; padding-right:10px;"| <blockquote>'''Fig. 1''' Age of respondents</blockquote> | |||

|- | |||

|} | |||

|} | |||

The mean time for respondents' experience in their profession was 16.6 years (SD 10.0), greater than their 12.0 (SD 9.0) years of experience in the review of scientific literature (N=107, ''P''<.01, paired ''t'' test). Most respondents worked full time (78.5%, 84/107), and the commissioning agents for their searches were predominantly internal (i.e., within the same organization [72.9%, 78/107]).

The majority of respondents were either based in the U.K. (51.4%, 55/107), the U.S. (27.1%, 29/107), or Canada (7.5%, 8/107). The remaining respondents were from Australia (2.8%, 3/107), Netherlands, Norway, and Germany (1.9% each, 2/107), as well as Denmark, Singapore, Uruguay, South Africa, Belgium, and Ireland (0.9% each, 1/107). All (100.0%, 107/107) respondents stated that the language they used most frequently for searching was English; however, 6.5% (7/107) stated that they did not use English most frequently for communication in their workplace.

The majority of respondents (81.3%, 87/107) worked in organizations that provide systematic reviews. These organizations also provided other services including reference management (72.0%, 77/107), rapid evidence reviews (63.6%, 68/107), background reviews (60.7%, 65/107), and critical appraisals (52.3%, 56/107).

===Search tasks===

We considered a search task in this context to be the creation of one or more strategy lines to search a specific collection of documents or databases, with task completion resulting in a set of search results that will be subject to further analysis. The output of this process is the search strategy, which is often published as part of the search documentation. This rationalization is in line with healthcare information professionals’ understanding, but the complexity of search tasks in this domain is discussed in more detail later.

The time respondents spent formulating search strategies, the time spent completing search tasks, and the number of strategy lines they used are shown in Table 1. Respondents were asked to estimate a minimum, average, and maximum for each of these measures, and the values reported here are the medians of each, with the interquartile range (IQR) shown in brackets (in the form Q1 to Q3). The final row shows the minimum, average, and maximum answers to the question: “What would you consider to be the ideal number of results returned for a typical search task?” On average, it takes 60 minutes to formulate a search strategy for a document collection, with the search task taking four hours to complete, and the final strategy consisting of 15 lines.

{|
| STYLE="vertical-align:top;"|
{| class="wikitable" border="1" cellpadding="5" cellspacing="0" width="80%"
|-
| style="background-color:white; padding-left:10px; padding-right:10px;" colspan="4"|'''Table 1.''' Effort to complete search tasks and evaluate results<br /><sup>a</sup>IQR: interquartile range
|-
! style="padding-left:10px; padding-right:10px;"|Task
! style="padding-left:10px; padding-right:10px;"|Minimum (IQR<sup>a</sup>)
! style="padding-left:10px; padding-right:10px;"|Average (IQR)
! style="padding-left:10px; padding-right:10px;"|Maximum (IQR)
|-
| style="background-color:white; padding-left:10px; padding-right:10px;"|Search time per document collection/database, minutes
| style="background-color:white; padding-left:10px; padding-right:10px;"|20 (10-30)
| style="background-color:white; padding-left:10px; padding-right:10px;"|60 (27.5-150)
| style="background-color:white; padding-left:10px; padding-right:10px;"|228 (86-480)
|-
| style="background-color:white; padding-left:10px; padding-right:10px;"|Search task completion time, hours
| style="background-color:white; padding-left:10px; padding-right:10px;"|1 (0.5-2)
| style="background-color:white; padding-left:10px; padding-right:10px;"|4 (2-6.5)
| style="background-color:white; padding-left:10px; padding-right:10px;"|14 (7-30)
|-
| style="background-color:white; padding-left:10px; padding-right:10px;"|Strategy lines per search task, n
| style="background-color:white; padding-left:10px; padding-right:10px;"|5 (2.8-10)
| style="background-color:white; padding-left:10px; padding-right:10px;"|15 (9.1-30)
| style="background-color:white; padding-left:10px; padding-right:10px;"|59 (30-105)
|-
| style="background-color:white; padding-left:10px; padding-right:10px;"|Results examined from a search task, n
| style="background-color:white; padding-left:10px; padding-right:10px;"|10 (5-32)
| style="background-color:white; padding-left:10px; padding-right:10px;"|175 (75-500)
| style="background-color:white; padding-left:10px; padding-right:10px;"|850 (400-5250)
|-
| style="background-color:white; padding-left:10px; padding-right:10px;"|Time to assess relevance of a single result/document, minutes
| style="background-color:white; padding-left:10px; padding-right:10px;"|1 (0.5-2)
| style="background-color:white; padding-left:10px; padding-right:10px;"|3 (1-5)
| style="background-color:white; padding-left:10px; padding-right:10px;"|10 (5-25)
|-
| style="background-color:white; padding-left:10px; padding-right:10px;"|Ideal number of search results per search task, n
| style="background-color:white; padding-left:10px; padding-right:10px;"|0
| style="background-color:white; padding-left:10px; padding-right:10px;"|100
| style="background-color:white; padding-left:10px; padding-right:10px;"|10,000
|-
|}
|}

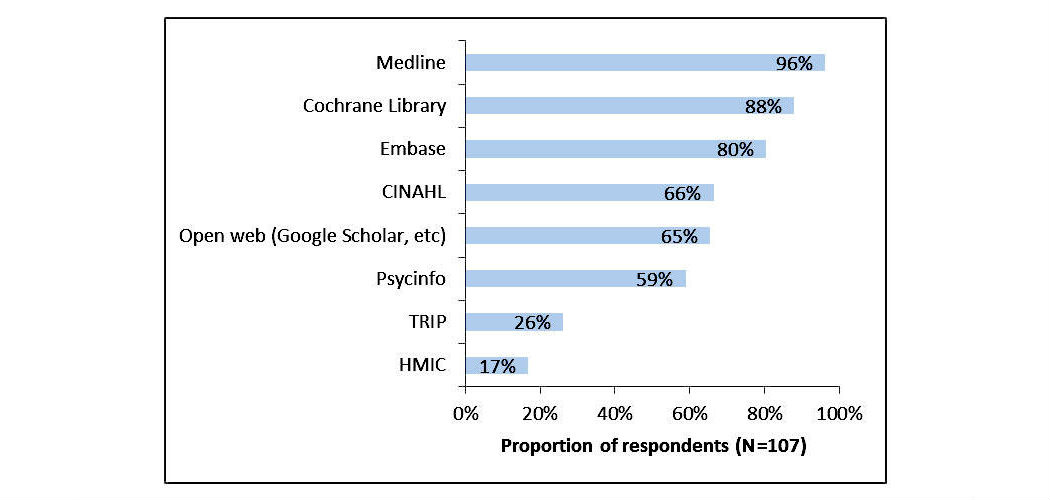

The data sources most frequently searched were MEDLINE (96.3%, 103/107), the Cochrane Library (87.9%, 94/107), and Embase (80.4%, 86/107) (Figure 2). | |||

[[File:Fig2 Russell-Rose JMIRMedInfo2017 5-4.jpg|800px]] | |||

{{clear}} | |||

{| | |||

| STYLE="vertical-align:top;"| | |||

{| border="0" cellpadding="5" cellspacing="0" width="800px" | |||

|- | |||

| style="background-color:white; padding-left:10px; padding-right:10px;"| <blockquote>'''Fig. 2''' Data sources most frequently searched</blockquote> | |||

|- | |||

|} | |||

|} | |||

The majority of respondents (86.9%, 93/107) used previous search strategies or templates at least sometimes, suggesting that the value embodied in them is recognized and should be re-used wherever possible. In addition, most respondents (89.7%, 96/107) routinely share their search strategies in some form, either with colleagues in their workgroup, more broadly within their organization, or in some other capacity (e.g., with clients or as part of a published review). | |||

===Query formulation=== | |||

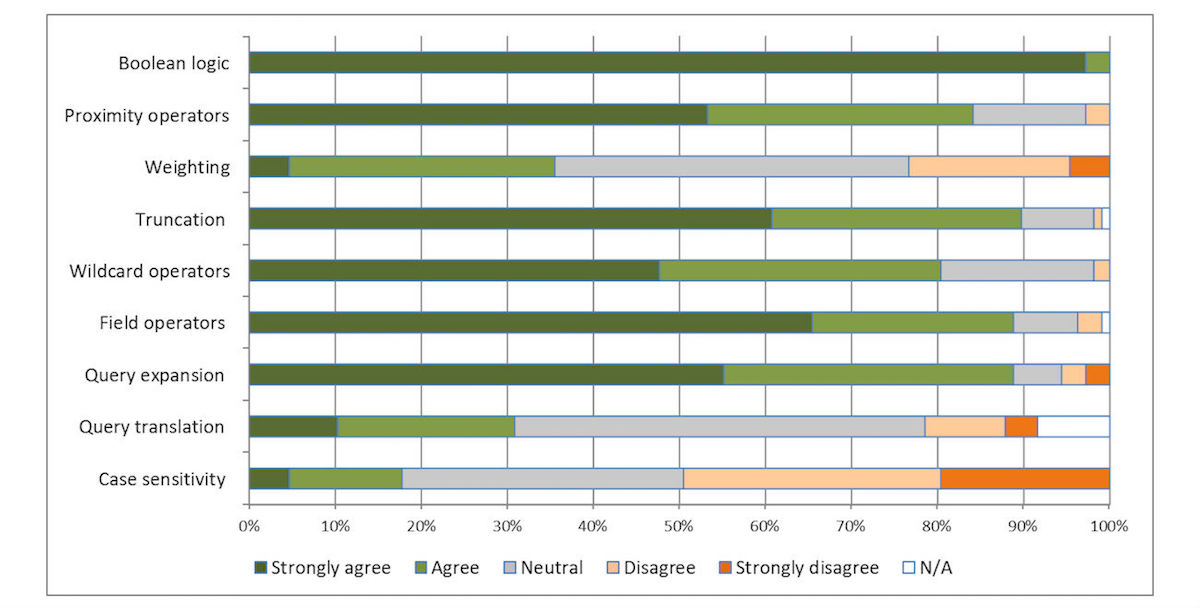

We examined the mechanics of the query formulation process by asking respondents to indicate a level of agreement to statements using a five-point Likert scale ranging from 1 (strong disagreement) to 5 (strong agreement). The results are shown in Figure 3. | |||

[[File:Fig3 Russell-Rose JMIRMedInfo2017 5-4.jpg|800px]] | |||

{{clear}} | |||

{| | |||

| STYLE="vertical-align:top;"| | |||

{| border="0" cellpadding="5" cellspacing="0" width="800px" | |||

|- | |||

| style="background-color:white; padding-left:10px; padding-right:10px;"| <blockquote>'''Fig. 3''' Importance of query formulation functionality</blockquote> | |||

|- | |||

|} | |||

|} | |||

When asked which taxonomies are regularly used, 74.8% (80/107) of respondents indicated they used MeSH, 45.8% (49/107) Emtree, and 18.7% (20/107) CINAHL headings.

When asked which combination of techniques they used to create their search strategies, 44.9% (48/107) stated they used a form-based query builder, 41.1% (44/107) did so manually on paper, and 40.2% (43/107) used a text editor. Only 9.3% (10/107) used some form of visual query builder.

===Evaluating search results===

Respondents indicated that the ideal number of results returned for a search task would be 100 documents, yet in practice they evaluate more than this (a median of 175 documents; Table 1). The ideal number of results and the actual number of results evaluated are strongly correlated (N=66, ρ=.661 [Spearman rank correlation]). The average time to assess relevance of a single document was three minutes. | |||

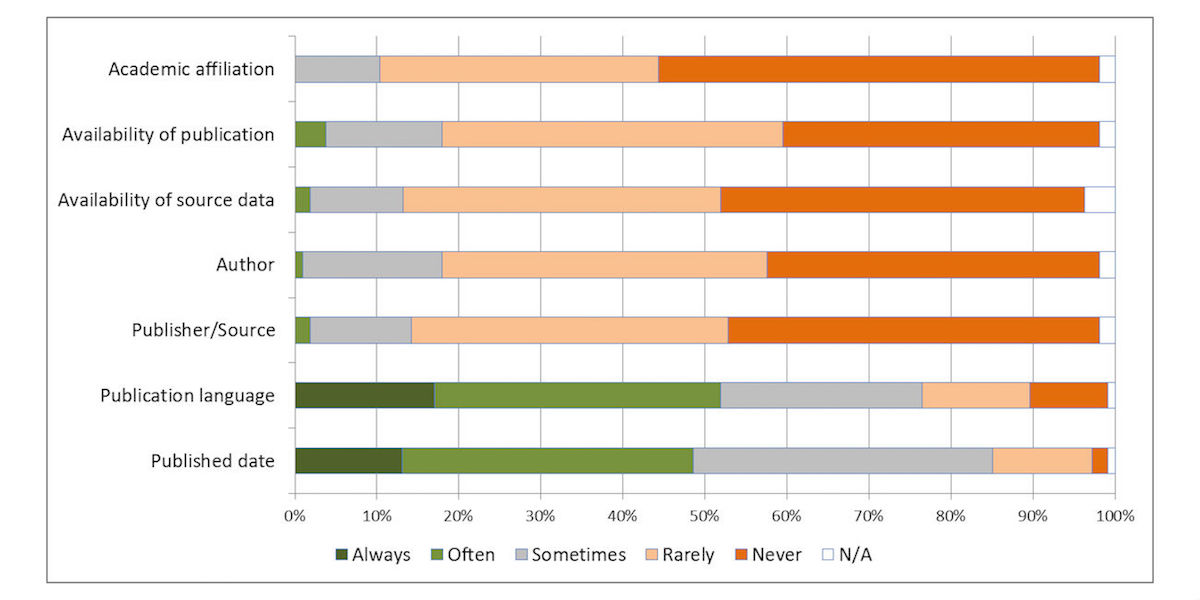

Respondents were asked to indicate on a five-point Likert scale how frequently they use search limits and restriction criteria to narrow down results. The results are shown in Figure 4. | |||

[[File:Fig4 Russell-Rose JMIRMedInfo2017 5-4.jpg|800px]] | |||

{{clear}} | |||

{| | |||

| STYLE="vertical-align:top;"| | |||

{| border="0" cellpadding="5" cellspacing="0" width="800px" | |||

|- | |||

| style="background-color:white; padding-left:10px; padding-right:10px;"| <blockquote>'''Fig. 4''' Usage of restriction criteria</blockquote> | |||

|- | |||

|} | |||

|} | |||

We also examined respondents’ strategies for examining the search results. The most popular approaches were to “start with the result that looked most relevant” (54.2%, 58/107) or simply “select the first result” (23.4%, 25/107). No respondent suggested selecting the “most trustworthy source.” | |||

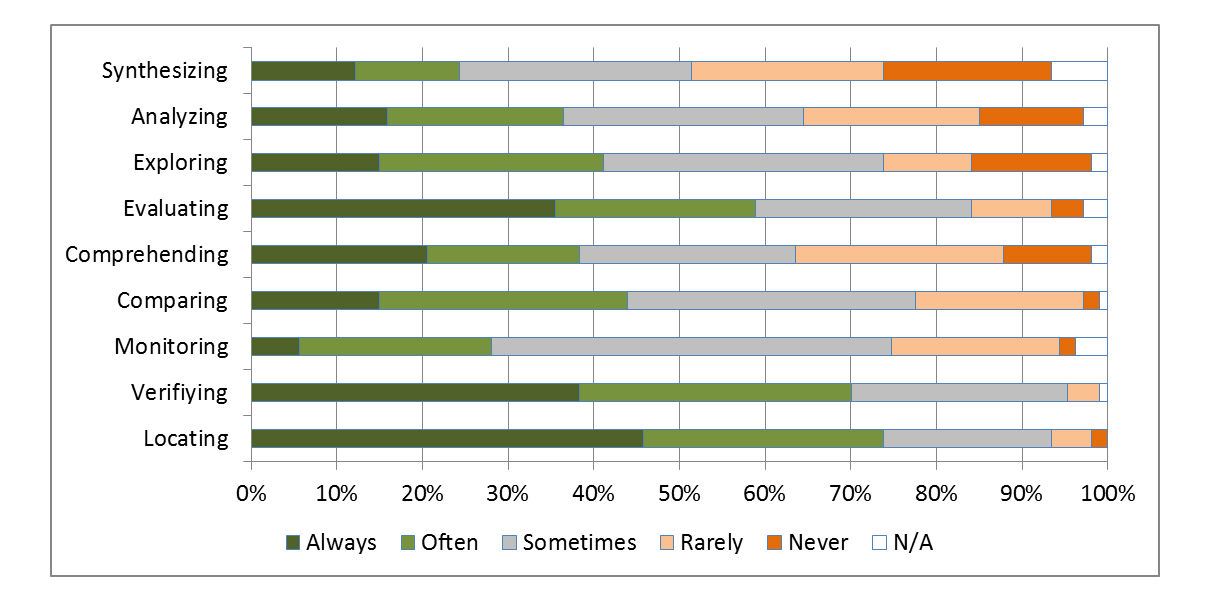

Respondents were asked what types of activities<ref name="Russell-RoseATax11">{{cite journal |title=A taxonomy of enterprise search discovery |journal=Proceedings of HCIR 2011 |author=Russell-Rose, T.; Lamantia, J.; Burrell, M. |volume=2011 |year=2011 |url=https://isquared.wordpress.com/2011/11/02/a-taxonomy-of-enterprise-search-and-discovery/}}</ref> they typically engaged in whilst completing their search task (Figure 5). “Locating, verifying, and evaluating results” were the most common activities (see Multimedia Appendix 1 for the full description of each activity, as provided to the respondents). | |||

[[File:Fig5 Russell-Rose JMIRMedInfo2017 5-4.jpg|800px]] | |||

{{clear}} | |||

{| | |||

| STYLE="vertical-align:top;"| | |||

{| border="0" cellpadding="5" cellspacing="0" width="800px" | |||

|- | |||

| style="background-color:white; padding-left:10px; padding-right:10px;"| <blockquote>'''Fig. 5''' Activities that respondents engage in when completing a search task</blockquote> | |||

|- | |||

|} | |||

|} | |||

===Ideal functionality for searching databases=== | |||

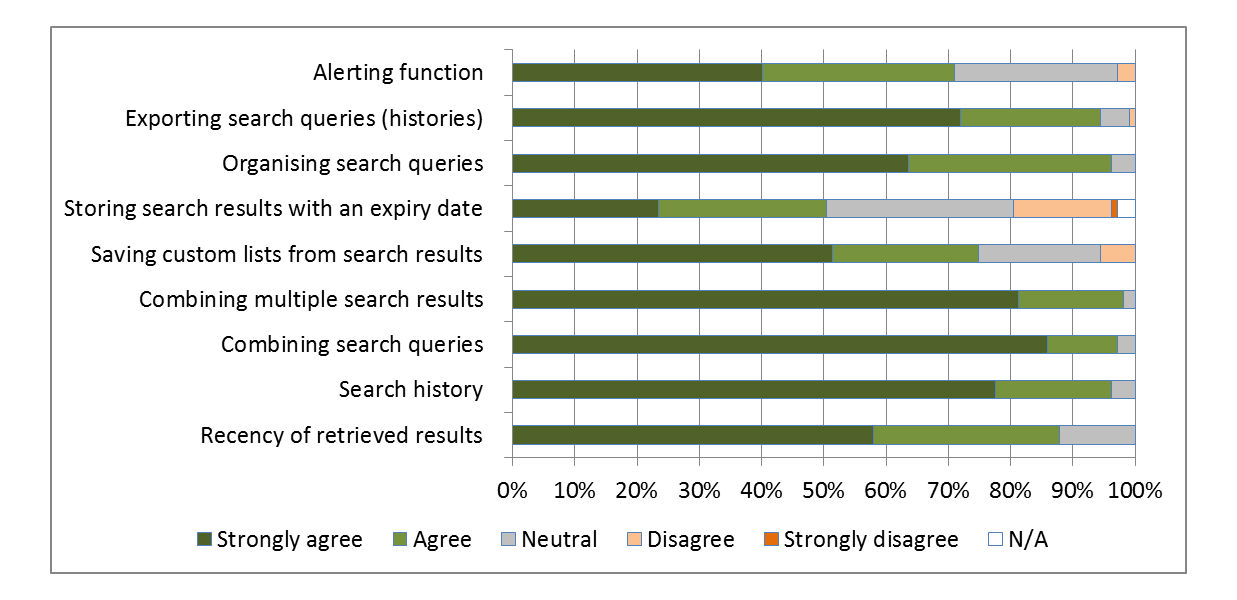

We also examined other features related to search management, organization, and history that respondents value when performing search tasks. Respondents were asked to indicate a level of agreement to a statement using a five-point Likert scale ranging from 1 (strong disagreement) to 5 (strong agreement). The results are shown in Figure 6. | |||

[[File:Fig6 Russell-Rose JMIRMedInfo2017 5-4.jpg|800px]] | |||

{{clear}} | |||

{| | |||

| STYLE="vertical-align:top;"| | |||

{| border="0" cellpadding="5" cellspacing="0" width="800px" | |||

|- | |||

| style="background-color:white; padding-left:10px; padding-right:10px;"| <blockquote>'''Fig. 6''' Ideal features of a literature search system</blockquote> | |||

|- | |||

|} | |||

|} | |||

==References==

Revision as of 18:42, 7 November 2017

| Full article title | Expert search strategies: The information retrieval practices of healthcare information professionals |

|---|---|

| Journal | JMIR Medical Informatics |

| Author(s) | Russell-Rose, Tony; Chamberlain, Jon |

| Author affiliation(s) | UXLabs Ltd., University of Essex |

| Primary contact | Email: tgr at uxlabs dot co dot uk |

| Editors | Eysenbach, G. |

| Year published | 2017 |

| Volume and issue | 5 (4) |

| Page(s) | e33 |

| DOI | 10.2196/medinform.7680 |

| ISSN | 2291-9694 |

| Distribution license | Creative Commons Attribution 4.0 International |

| Website | http://medinform.jmir.org/2017/4/e33/ |

| Download | http://medinform.jmir.org/2017/4/e33/pdf (PDF) |

This article should not be considered complete until this message box has been removed. This is a work in progress.

Abstract

Background: Healthcare information professionals play a key role in closing the knowledge gap between medical research and clinical practice. Their work involves meticulous searching of literature databases using complex search strategies that can consist of hundreds of keywords, operators, and ontology terms. This process is prone to error and can lead to inefficiency and bias if performed incorrectly.

Objective: The aim of this study was to investigate the search behavior of healthcare information professionals, uncovering their needs, goals, and requirements for information retrieval systems.

Methods: A survey was distributed to healthcare information professionals via professional association email discussion lists. It investigated the search tasks they undertake, their techniques for search strategy formulation, their approaches to evaluating search results, and their preferred functionality for searching library-style databases. The popular literature search system PubMed was then evaluated to determine the extent to which their needs were met.

Results: The 107 respondents indicated that their information retrieval process relied on the use of complex, repeatable, and transparent search strategies. On average it took 60 minutes to formulate a search strategy, with a search task taking four hours and consisting of 15 strategy lines. Respondents reviewed a median of 175 results per search task, far more than they would ideally like (100). The most desired features of a search system were merging search queries and combining search results.

Conclusions: Healthcare information professionals routinely address some of the most challenging information retrieval problems of any profession. However, their needs are not fully supported by current literature search systems, and there is demand for improved functionality, in particular regarding the development and management of search strategies.

Keywords: review, surveys and questionnaires, search engine, information management, information systems

Introduction

Background

Medical knowledge is growing so rapidly that it is difficult for healthcare professionals to keep up. As the volume of published studies increases each year[1], the gap between research knowledge and professional practice grows.[2] Frontline healthcare providers (such as general practitioners [GPs]) responding to the immediate needs of patients may employ a web-style search for diagnostic purposes, with Google being reported to be a useful diagnostic tool[3]; however, the credibility of results depends on the domain.[4] Medical staff may also perform more in-depth searches, such as rapid evidence reviews, where a concise summary of what is known about a topic or intervention is required.[5]

Healthcare information professionals play the primary role in closing the gap between published research and medical practice, by synthesizing the complex, incomplete, and at times conflicting findings of biomedical research into a form that can readily inform healthcare decision making.[6] The systematic literature review process relies on the painstaking and meticulous searching of multiple databases using complex Boolean search strategies that often consist of hundreds of keywords, operators, and ontology terms[7] (Textbox 1).

| |||||||

Performing a systematic review is a resource-intensive and time consuming undertaking, sometimes taking years to complete.[8] It involves a lengthy content production process whose output relies heavily on the quality of the initial search strategy, particularly in ensuring that the scope is sufficiently exhaustive and that the review is not biased by easily accessible studies.[9]

Numerous studies have been performed to investigate the healthcare information retrieval process and to better understand the challenges involved in strategy development, as it has been noted that online health resources are not created by healthcare professionals.[10] For example, Grant[11] used a combination of a semi-structured questionnaire and interviews to study researchers’ experiences of searching the literature, with particular reference to the use of optimal search strategies. McGowan et al.[12] used a combination of a web-based survey and peer review forums to investigate what elements of the search process have the most impact on the overall quality of the resulting evidence base. Similarly, Gillies et al.[13] used an online survey to investigate the review, with a view to identifying problems and barriers for authors of Cochrane reviews. Ciapponi and Glujovsky[14] also used an online survey to study the early stages of systematic review.

No single database can cover all the medical literature required for a systematic review, although some are considered to be a core element of any healthcare search strategy, such as MEDLINE[15], Embase[16], and the Cochrane Library.[17] Consequently, healthcare information professionals may consult these sources along with a number of other, more specialized databases to fit the precise scope area.[18]

A survey[1] of online tools for searching literature databases using PubMed[19], the online literature search service primarily for MEDLINE, showed that most tools were developed for managing search results (such as ranking, clustering into topics and enriching with semantics). Very few tools improved on the standard PubMed search interface or offered advanced Boolean string editing methods in order to support complex literature searching.

Objective

To improve the accuracy and efficiency of the literature search process, it is essential that information retrieval applications (in this case, databases of medical literature and the interfaces through which they are accessed) are designed to support the tasks, needs, and expectations of their users. To do so they should consider the layers of context that influence the search task[20] and how this affects the various phases in the search process. This study was designed to fill gaps in this knowledge by investigating the information retrieval practices of healthcare information professionals and contrasting their requirements to the level of support offered by a widely used literature search tool (PubMed).

The specific research questions addressed by this study were (1) How long do search tasks take when performed by healthcare information professionals? (2) How do they formulate search strategies and what kind of search functionality do they use? (3) How are search results evaluated? (4) What functionality do they value in a literature search system? (5) To what extent are their requirements and aspirations met by the PubMed literature search system?

In answering these research questions we hope to provide direct comparisons with other professions (e.g., in terms of the structure, complexity, and duration of their search tasks).

Methods

Online survey

The survey instrument consisted of an online questionnaire of 58 questions divided into five sections. It was designed to align with the structure and content of Joho et al.’s[21] survey of patent searchers and wherever possible also with Geschwandtner et al.’s[22] survey of medical professionals to facilitate comparisons with other professions. The following were the five sections: (1) Demographics, the background and professional experience of the respondents; (2) Search tasks, the tasks that respondents perform when searching literature databases; (3) Query formulation, the techniques respondents used to formulate search strategies; (4) Evaluating search results, how respondents evaluate the results of their search tasks; and (5) Ideal functionality for searching databases, any other features that respondents value when searching literature databases.

The survey was designed to be completed in approximately 15 minutes and was pre-tested for face validity by two health sciences librarians.

Survey respondents were recruited by sending an email invitation with a link to the survey to five healthcare professional association mailing lists that deal with systematic reviews and medical librarianship: LIS-MEDICAL[23], CLIN-LIB[24], EVIDENCE-BASED-HEALTH[25], expertsearching[26], and the Cochrane Information Retrieval Methods Group (IRMG).[27] It was also sent directly to the members of the Chartered Institute of Library and Information Professionals (CILIP) Healthcare Libraries special interest group.[28] The recruitment message and start page of the survey described the eligibility criteria for survey participants, expected time to complete the survey, its purpose, and funding source.

The survey (Multimedia Appendix 1) was conducted using SurveyMonkey, a web-based software application.[29] Data were collected from July to September 2015. A total of 218 responses were received, of which 107 (49.1%, 107/218) were complete (meaning all pages of the survey had been viewed and all compulsory questions responded to). Only complete surveys were examined. Since the number of unique individuals reached by the mailing list announcements is unknown, the participation rate cannot be determined.

Responses to numeric questions were not constrained to integers, as a pilot survey had shown that respondents preferred to put in approximate and/or expressive values. Text responses corresponding to numerical questions (questions 14 to 22 and 32 to 38; 16 in total) were normalized as follows: (1) when the respondent specified a range (e.g., 10 to 20 hours), the midpoint was entered (e.g., 15 hours); (2) when the respondent indicated a minimum (e.g., 10 years and greater), the minimum was entered (e.g., 10 years); and (3) when the respondent entered an approximate number (e.g., about 20), that number was entered (e.g., 20).

After normalizing, 8.29% (142/1712) of responses contained no numerical data and 21.61% (370/1712) of responses had been normalized.
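The three normalization rules can be illustrated with a minimal Python sketch. This is not the authors' procedure as implemented; the function name `normalize_response` and the regular expressions are assumptions made for illustration, and real survey answers would likely need more patterns than are handled here.

```python
import re
from typing import Optional

# Hypothetical pattern for a plain integer or decimal number.
_NUMBER = r"\d+(?:\.\d+)?"


def normalize_response(text: str) -> Optional[float]:
    """Map a free-text numeric answer to one number, or None if it has no number."""
    cleaned = text.strip().lower().replace(",", "")

    # Rule 1: a range such as "10 to 20 hours" becomes its midpoint.
    range_match = re.search(rf"({_NUMBER})\s*(?:to|-|–)\s*({_NUMBER})", cleaned)
    if range_match:
        low = float(range_match.group(1))
        high = float(range_match.group(2))
        return (low + high) / 2

    # Rules 2 and 3: a stated minimum ("10 years and greater") or an
    # approximation ("about 20") both reduce to the number that was given.
    single_match = re.search(_NUMBER, cleaned)
    if single_match:
        return float(single_match.group(0))

    # No numerical data in the answer.
    return None
```

Answers that return `None` correspond to the responses counted above as containing no numerical data.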

Evaluation of PubMed

An evaluation of the PubMed search system was performed using online documentation[30], best practice advice[31], and direct testing of the interface using Boolean commands. In addition to the search portal, users can register to My NCBI, which provides additional functionality for saving search queries, managing results sets, and customizing filters, so this was included in the comparison. The mobile version of PubMed, PubMed Mobile[32], does not offer extended functionality, so it was not considered in the evaluation. Although beyond the scope of this study, information seeking by healthcare practitioners on hand-held devices has been shown to save time and improve the early learning of new developments.[33]

Results

Demographics

Of the respondents, 89.3% (92/103) were female. Their ages were distributed bi-modally, with peaks at 39 to 45 and 53 to 59, with a conflated average age of 46.0 (SD 10.9, N=104) (Figure 1).

Fig. 1 Age of respondents

The mean time for respondents' experience in their profession was 16.6 years (SD 10.0), greater than their 12.0 (SD 9.0) years of experience in the review of scientific literature (N=107, P<.01, paired t test). Most respondents worked full time (78.5%, 84/107), and the commissioning agents for their searches were predominantly internal (i.e., within the same organization [72.9%, 78/107]).
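For readers unfamiliar with the paired t test used in this comparison, the statistic can be sketched in a few lines of Python. The function name `paired_t_statistic` and the sample numbers in the usage note are hypothetical and are not the survey data; converting the statistic to a P value additionally requires the t distribution, which is left out of this sketch.

```python
from math import sqrt
from statistics import mean, stdev


def paired_t_statistic(first, second):
    """Return (t, degrees of freedom) for two paired samples of equal length."""
    # Work on the per-respondent differences, e.g. years in the profession
    # minus years of experience in reviewing literature.
    diffs = [a - b for a, b in zip(first, second)]
    n = len(diffs)
    # t is the mean difference divided by its standard error.
    t = mean(diffs) / (stdev(diffs) / sqrt(n))
    return t, n - 1
```

With the made-up pairs `[10, 12, 9, 15]` and `[8, 9, 9, 11]`, the function returns a t value of roughly 2.63 with 3 degrees of freedom.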

The majority of respondents were either based in the U.K. (51.4%, 55/107), the U.S. (27.1%, 29/107), or Canada (7.5%, 8/107). The remaining respondents were from Australia (2.8%, 3/107), Netherlands, Norway, and Germany (1.9% each, 2/107), as well as Denmark, Singapore, Uruguay, South Africa, Belgium, and Ireland (0.9% each, 1/107). All (100.0%, 107/107) respondents stated that the language they used most frequently for searching was English; however, 6.5% (7/107) stated that they did not use English most frequently for communication in their workplace.

The majority of respondents (81.3%, 87/107) worked in organizations that provide systematic reviews. These organizations also provided other services including reference management (72.0%, 77/107), rapid evidence reviews (63.6%, 68/107), background reviews (60.7%, 65/107), and critical appraisals (52.3%, 56/107).

Search tasks

We considered a search task in this context to be the creation of one or more strategy lines to search a specific collection of documents or databases, with task completion resulting in a set of search results that will be subject to further analysis. The output of this process is the search strategy, which is often published as part of the search documentation. This rationalization is in line with healthcare information professionals’ understanding, but the complexity of search tasks in this domain is discussed in more detail later.

The time respondents spent formulating search strategies, the time spent completing search tasks, and the number of strategy lines they used are shown in Table 1. Respondents were asked to estimate a minimum, average, and maximum for each of these measures, and the values reported here are the medians of each, with the interquartile range (IQR) shown in brackets (in the form Q1 to Q3). The final row shows the minimum, average, and maximum answers to the question: “What would you consider to be the ideal number of results returned for a typical search task?” On average, it takes 60 minutes to formulate a search strategy for a document collection, with the search task taking four hours to complete, and the final strategy consisting of 15 lines.

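Table 1. Effort to complete search tasks and evaluate results (IQR: interquartile range)

| Task | Minimum (IQR) | Average (IQR) | Maximum (IQR) |
|---|---|---|---|
| Search time per document collection/database, minutes | 20 (10-30) | 60 (27.5-150) | 228 (86-480) |
| Search task completion time, hours | 1 (0.5-2) | 4 (2-6.5) | 14 (7-30) |
| Strategy lines per search task, n | 5 (2.8-10) | 15 (9.1-30) | 59 (30-105) |
| Results examined from a search task, n | 10 (5-32) | 175 (75-500) | 850 (400-5250) |
| Time to assess relevance of a single result/document, minutes | 1 (0.5-2) | 3 (1-5) | 10 (5-25) |
| Ideal number of search results per search task, n | 0 | 100 | 10,000 |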
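As an illustration of how a "median (Q1 to Q3)" entry in Table 1 is formed, the following Python sketch summarizes a list of estimates. The helper name `median_with_iqr` and the sample values are hypothetical, and the choice of the "inclusive" quartile method is an assumption, since the article does not state which quartile convention was used; other conventions can give slightly different Q1 and Q3 values.

```python
from statistics import median, quantiles


def median_with_iqr(values):
    """Return (median, Q1, Q3) for a list of numeric estimates."""
    # quantiles(..., n=4) returns the three quartile cut points; the middle
    # one is discarded here because median() is reported separately.
    q1, _, q3 = quantiles(values, n=4, method="inclusive")
    return median(values), q1, q3


# Made-up "average search time" estimates in minutes (not the survey data).
estimates = [20, 30, 45, 60, 60, 90, 120, 180, 240]
mid, q1, q3 = median_with_iqr(estimates)
print(f"{mid} ({q1}-{q3})")
```

Reporting the median with Q1 and Q3 rather than the mean with a standard deviation keeps a few very large estimates from dominating the summary.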

The data sources most frequently searched were MEDLINE (96.3%, 103/107), the Cochrane Library (87.9%, 94/107), and Embase (80.4%, 86/107) (Figure 2).

Fig. 2 Data sources most frequently searched

The majority of respondents (86.9%, 93/107) used previous search strategies or templates at least sometimes, suggesting that the value embodied in them is recognized and should be re-used wherever possible. In addition, most respondents (89.7%, 96/107) routinely share their search strategies in some form, either with colleagues in their workgroup, more broadly within their organization, or in some other capacity (e.g., with clients or as part of a published review).

Query formulation

We examined the mechanics of the query formulation process by asking respondents to indicate a level of agreement to statements using a five-point Likert scale ranging from 1 (strong disagreement) to 5 (strong agreement). The results are shown in Figure 3.

Fig. 3 Importance of query formulation functionality

When asked which taxonomies are regularly used, 74.8% (80/107) of respondents indicated they used MeSH, 45.8% (49/107) Emtree, and 18.7% (20/107) CINAHL headings.

When asked which combination of techniques they used to create their search strategies, 44.9% (48/107) stated they used a form-based query builder, 41.1% (44/107) did so manually on paper, and 40.2% (43/107) used a text editor. Only 9.3% (10/107) used some form of visual query builder.

Evaluating search results

Respondents indicated that the ideal number of results returned for a search task would be 100 documents, yet in practice they evaluate more than this (a median of 175 documents; Table 1). The ideal number of results and the actual number of results evaluated are strongly correlated (N=66, ρ=.661 [Spearman rank correlation]). The average time to assess relevance of a single document was three minutes.
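The Spearman rank correlation reported here is the Pearson correlation computed on ranks rather than on raw values. The sketch below shows that calculation in plain Python; the function names are assumptions for illustration, tied values receive the average of their rank positions, and nothing here reproduces the study's actual data or software.

```python
def average_ranks(values):
    """Rank values from 1 to n, giving tied values the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        # Extend the run while the next value ties with the current one.
        end = start
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        shared_rank = (start + end) / 2 + 1
        for position in range(start, end + 1):
            ranks[order[position]] = shared_rank
        start = end + 1
    return ranks


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5


def spearman_rho(xs, ys):
    """Spearman rank correlation: Pearson correlation of the ranks."""
    return pearson(average_ranks(xs), average_ranks(ys))
```

Because only ranks are used, the coefficient is insensitive to the very large "ideal" and "actual" result counts that some respondents reported.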

Respondents were asked to indicate on a five-point Likert scale how frequently they use search limits and restriction criteria to narrow down results. The results are shown in Figure 4.

We also examined respondents’ strategies for examining the search results. The most popular approaches were to “start with the result that looked most relevant” (54.2%, 58/107) or simply “select the first result” (23.4%, 25/107). No respondent suggested selecting the “most trustworthy source.”

Respondents were asked what types of activities[34] they typically engaged in whilst completing their search task (Figure 5). “Locating, verifying, and evaluating results” were the most common activities (see Multimedia Appendix 1 for the full description of each activity, as provided to the respondents).

Ideal functionality for searching databases

We also examined other features related to search management, organization, and history that respondents value when performing search tasks. Respondents were asked to indicate their level of agreement with a series of statements using a five-point Likert scale ranging from 1 (strong disagreement) to 5 (strong agreement). The results are shown in Figure 6.

References

- ↑ 1.0 1.1 Lu, Z. (2011). "PubMed and beyond: A survey of web tools for searching biomedical literature". Database 2011: baq036. doi:10.1093/database/baq036. PMC PMC3025693. PMID 21245076. https://www.ncbi.nlm.nih.gov/pmc/articles/PMC3025693.

- ↑ Bastian, H.; Glasziou, P.; Chalmers, I. (2010). "Seventy-five trials and eleven systematic reviews a day: How will we ever keep up?". PLoS Medicine 7 (9): e1000326. doi:10.1371/journal.pmed.1000326. PMC PMC2943439. PMID 20877712. https://www.ncbi.nlm.nih.gov/pmc/articles/PMC2943439.

- ↑ Tang, H.; Ng, J.H. (2006). "Googling for a diagnosis--Use of Google as a diagnostic aid: Internet based study". BMJ 333 (7579): 1143–5. doi:10.1136/bmj.39003.640567.AE. PMC PMC1676146. PMID 17098763. https://www.ncbi.nlm.nih.gov/pmc/articles/PMC1676146.

- ↑ Kitchens, B.; Harle, C.A.; Li, S. (2014). "Quality of health-related online search results". Decision Support Systems 57: 454–462. doi:10.1016/j.dss.2012.10.050.

- ↑ Hemingway, P.; Brereton, N. (April 2009). "What Is A Systematic Review?" (PDF). Heyward Medical Communications. http://www.bandolier.org.uk/painres/download/whatis/Syst-review.pdf. Retrieved 05 March 2017.

- ↑ Elliott, J.H.; Turner, T.; Clavisi, O. et al. (2014). "Living systematic reviews: An emerging opportunity to narrow the evidence-practice gap". PLoS Medicine 11 (2): e1001603. doi:10.1371/journal.pmed.1001603.

- ↑ Karimi, S.; Pohl, S.; Scholer, F. et al. (2010). "Boolean versus ranked querying for biomedical systematic reviews". BMC Medical Informatics and Decision Making 10: 58. doi:10.1186/1472-6947-10-58. PMC PMC2966450. PMID 20937152. https://www.ncbi.nlm.nih.gov/pmc/articles/PMC2966450.

- ↑ Higgins, J.P.T.; Green, S., ed. (2011). "Cochrane Handbook for Systematic Reviews of Interventions, Version 5.1.0". The Cochrane Collaboration. http://training.cochrane.org/handbook. Retrieved 05 March 2017.

- ↑ Tsafnat, G.; Glasziou, P.; Choong, M.K. et al. (2014). "Systematic review automation technologies". Systematic Reviews 3: 74. doi:10.1186/2046-4053-3-74. PMC PMC4100748. PMID 25005128. https://www.ncbi.nlm.nih.gov/pmc/articles/PMC4100748.

- ↑ Potts, H.W. (2006). "Is e-health progressing faster than e-health researchers?". Journal of Medical Internet Research 8 (3): e24. doi:10.2196/jmir.8.3.e24. PMC PMC2018835. PMID 17032640. https://www.ncbi.nlm.nih.gov/pmc/articles/PMC2018835.

- ↑ Grant, M.J. (2004). "How does your searching grow? A survey of search preferences and the use of optimal search strategies in the identification of qualitative research". Health Information and Libraries Journal 21 (1): 21–32. doi:10.1111/j.1471-1842.2004.00483.x. PMID 15023206.

- ↑ McGowan, J.; Sampson, M.; Salzwedel, D.M. et al. (2016). "PRESS Peer Review of Electronic Search Strategies: 2015 Guideline Statement". Journal of Clinical Epidemiology 75: 40–6. doi:10.1016/j.jclinepi.2016.01.021. PMID 27005575.

- ↑ Gillies, D.; Maxwell, H.; New, K. et al. (2008). "A collaboration-wide survey of Cochrane authors". Evidence in the Era of Globalisation: Abstracts of the 16th Cochrane Colloquium 2008: 04–33.

- ↑ Ciapponi, A.; Glujovsky, D. (2012). "Survey among Cochrane authors about early stages of systematic reviews". 20th Cochrane Colloquium 2012. http://2012.colloquium.cochrane.org/abstracts/survey-among-cochrane-authors-about-early-stages-systematic-reviews.html.

- ↑ "MEDLINE/PubMed Resources Guide". U.S. National Library of Medicine. https://www.nlm.nih.gov/bsd/pmresources.html. Retrieved 05 March 2017.

- ↑ "Embase". Elsevier. https://www.elsevier.com/solutions/embase-biomedical-research. Retrieved 31 August 2017.

- ↑ "Cochrane Library". John Wiley & Sons, Inc. http://www.cochranelibrary.com/. Retrieved 05 March 2017.

- ↑ Clarke, J.; Wentz, R. (2000). "Pragmatic approach is effective in evidence based health care". BMJ 321 (7260): 566–7. PMC PMC1118450. PMID 10968827. https://www.ncbi.nlm.nih.gov/pmc/articles/PMC1118450.

- ↑ "PubMed". U.S. National Library of Medicine. https://www.ncbi.nlm.nih.gov/pubmed. Retrieved 05 March 2017.

- ↑ Järvelin, K.; Ingwersen, P. (2004). "Information seeking research needs extension towards tasks and technology" (PDF). Information Research 10 (1). http://files.eric.ed.gov/fulltext/EJ1082037.pdf.

- ↑ Joho, H.; Azzopardi, L.A.; Vanderbauwhede, W. (2010). "A survey of patent users: An analysis of tasks, behavior, search functionality and system requirements". Proceedings of the Third Symposium on Information Interaction in Context 2010: 13–24. doi:10.1145/1840784.1840789.

- ↑ Gschwandtner, M.; Kritz, M.; Boyer, C. (29 August 2011). "D8.1.2: Requirements of the health professional search" (PDF). KHRESMOI. http://www.khresmoi.eu/assets/Deliverables/WP8/KhresmoiD812.pdf. Retrieved 05 March 2017.

- ↑ "LIS-MEDICAL Home Page". JISC. https://www.jiscmail.ac.uk/cgi-bin/webadmin?A0=lis-medical. Retrieved 05 March 2017.

- ↑ "CLIN-LIB Home Page". JISC. https://www.jiscmail.ac.uk/cgi-bin/webadmin?A0=CLIN-LIB. Retrieved 05 March 2017.

- ↑ "EVIDENCE-BASED-HEALTH Home Page". JISC. https://www.jiscmail.ac.uk/cgi-bin/webadmin?A0=EVIDENCE-BASED-HEALTH. Retrieved 05 March 2017.

- ↑ "expertsearching". Medical Library Association. http://pss.mlanet.org/mailman/listinfo/expertsearching_pss.mlanet.org. Retrieved 05 March 2017.

- ↑ "Information Retrieval Methods". The Cochrane Collaboration. http://methods.cochrane.org/irmg/welcome. Retrieved 05 March 2017.

- ↑ "Health Libraries Group". CILIP. https://www.cilip.org.uk/about/special-interest-groups/health-libraries-group. Retrieved 05 March 2017.

- ↑ "SurveyMonkey". SurveyMonkey Inc. https://www.surveymonkey.co.uk/. Retrieved 05 March 2017.

- ↑ "PubMed Tutorial". U.S. National Library of Medicine. https://www.nlm.nih.gov/bsd/disted/pubmedtutorial/cover.html. Retrieved 05 March 2017.

- ↑ Chapman, D. (2009). "Advanced search features of PubMed". Journal of the Canadian Academy of Child and Adolescent Psychiatry 18 (1): 58–9. PMC PMC2651214. PMID 19270851. https://www.ncbi.nlm.nih.gov/pmc/articles/PMC2651214.

- ↑ "Welcome to PubMed Mobile". U.S. National Library of Medicine. https://www.ncbi.nlm.nih.gov/m/pubmed/. Retrieved 05 March 2017.

- ↑ Mickan, S.; Tilson, J.K.; Atherton, H. et al. (2013). "Evidence of effectiveness of health care professionals using handheld computers: A scoping review of systematic reviews". Journal of Medical Internet Research 15 (10): e212. doi:10.2196/jmir.2530. PMC PMC3841346. PMID 24165786. https://www.ncbi.nlm.nih.gov/pmc/articles/PMC3841346.

- ↑ Russell-Rose, T.; Lamantia, J.; Burrell, M. (2011). "A taxonomy of enterprise search and discovery". Proceedings of HCIR 2011. https://isquared.wordpress.com/2011/11/02/a-taxonomy-of-enterprise-search-and-discovery/.

Notes

This presentation is faithful to the original, with only a few minor changes to formatting. In several cases the PubMed ID was missing and was added to make the reference more useful. Grammar and vocabulary were cleaned up to make the article easier to read.

Per the distribution agreement, the following copyright information is also being added:

©Tony Russell-Rose, Jon Chamberlain. Originally published in JMIR Medical Informatics (http://medinform.jmir.org), 02.10.2017.