Journal:A robust, format-agnostic scientific data transfer framework

| Full article title | A robust, format-agnostic scientific data transfer framework |
|---|---|
| Journal | Data Science Journal |
| Author(s) | Hester, James |
| Author affiliation(s) | Australian Nuclear Science and Technology Organisation |
| Primary contact | Email: jxh at ansto dot gov dot au |
| Year published | 2016 |
| Volume and issue | 15 |
| Page(s) | 12 |
| DOI | 10.5334/dsj-2016-012 |
| ISSN | 1683-1470 |
| Distribution license | Creative Commons Attribution 4.0 International |
| Website | http://datascience.codata.org/articles/10.5334/dsj-2016-012/ |
| Download | http://datascience.codata.org/articles/10.5334/dsj-2016-012/galley/605/download/ (PDF) |


Abstract

The olog approach of Spivak and Kent[1] is applied to the practical development of data transfer frameworks, yielding simple rules for construction and assessment of data transfer standards. The simplicity, extensibility and modularity of such descriptions allows discipline experts unfamiliar with complex ontological constructs or toolsets to synthesize multiple pre-existing standards, potentially including a variety of file formats, into a single overarching ontology. These ontologies nevertheless capture all scientifically-relevant prior knowledge, and when expressed in machine-readable form are sufficiently expressive to mediate translation between legacy and modern data formats. A format-independent programming interface informed by this ontology consists of six functions, of which only two handle data. Demonstration software implementing this interface is used to translate between two common diffraction image formats using such an ontology in place of an intermediate format.

Keywords: metadata, ontology, knowledge representation, data formats

Introduction

For most of scientific history, results and data were communicated using words and numbers on paper, with correct interpretation of this information reliant on the informal standards created by scholarly reference works, linguistic background, and educational traditions. Modern scientists increasingly rely on computers to perform such data transfer, and in this context the sender and receiver agree on the meaning of the data via a specification as interpreted by authors of the sending and receiving software. Recent calls to preserve raw data[2][3] and a growing awareness of a need to manage the explosion in the variety and quantity of data produced by modern large-scale experimental facilities (big data) have led to an increase in the number and coverage of these data transfer standards. Overlap in the areas of knowledge covered by each standard is increasingly common, either because the newer standards aim to replace older ad hoc or de facto standards, or because of natural expansion into the territory of ontologically “neighboring” standards. One example of such overlap is found in single-crystal diffraction: the newer NeXus standard for raw data[4] partly covers the same ontological space as the older imgCIF standard[5], and both aim to replace the multiplicity of ad hoc standards for diffraction images.

Authors of scientific software faced with multiple standards generally write custom input or output modules for each standard. For example, the HKL Research, Inc. suite of diffraction image processing programs accepts over 300 different formats.[6] In such software, broadly useful information on equivalences and transformations is crystallized in code that is specific to a programming language and software environment and is therefore difficult for other authors faced with the same problems to reuse, even if code is freely available. Such uniform processing and merging of disparate standards has been extensively studied by the knowledge representation community: it is one outcome of "ontological alignment" or "ontological mapping," which has been the subject of hundreds of publications over the last decade.[7] Despite the availability of ontological mapping tools, Otero-Cerdeira, Rodríguez-Martínez, & Gómez-Rodríguez note that relatively few ontology matching systems are put to practical use (see their section 4.5). One barrier to adoption is likely to be the need for the discipline experts driving standards development to learn ontological concepts and terminology in order to evaluate and use ontological tools: the effort required to master these tools may not be judged to yield commensurate benefits in situations where communities have historically been able to transfer data reliably without such formal approaches. Introduction of ontological ideas into data transfer would therefore stand more chance of success if those ideas are simple to understand and implement, as well as offering tangible benefits over the status quo. Indeed one of the challenges noted by Otero-Cerdeira et al. is to "define good tools that are easy to use for non-experts."

Much of the research listed by Otero-Cerdeira et al. has understandably been predicated on reducing human involvement in the mapping process, although expert human intervention is still currently required. In contrast to the thousands of terms found in ontologies tackled by ontological mapping projects, data files in the experimental sciences usually contain information relating to a few dozen well-defined scientific concepts, and so manual handling of ontologies is feasible. The present paper therefore adopts the practical position that, if involvement of discipline experts is unavoidable, then the method of representing the ontology should be as accessible as possible to those experts. An easily-applied framework for scientist-driven formalization, development and assessment of data transfer standards is presented, aimed at minimizing the complexity of the task, while promoting interoperability and minimizing duplication of programmer and domain expert effort.

After describing the framework in the next section, we demonstrate the utility of these concepts by discussing schemes for standards development and later semiautomatic data file translation.

A conceptual framework for data file standards

The framework described here covers systems for automated transfer and manipulation of scientific data. In other words, following creation of the reading and writing software in consultation with the data standard, no further human intervention is necessary in order to automatically create, ingest, and perform calculations on data from standards-conformant data files. Note that simple transfer of information found in the data file to a human reader — for example, presentation of text or graphics — is of minor significance in this context, as such operations, while useful, do not require any interpretation of the data by the computer and are in essence identical to traditional paper-based transfer of information from writer to reader.

Terminology used in this paper is defined in Table 1. The process of scientific data transfer is described using these terms as follows: in consultation with the ontology, authors of file output software determine the required or possible list of datanames for their particular application, then correlate concepts handled by their code to these datanames, arranging for the appropriate values to be linked to the datanames within the output data format according to the specifications within the format adapter. A file in this format is then transferred or archived. At some point, software written in consultation with the same format adapter and ontology extracts datavalues from the file and processes them correctly.

[Table 1: Terminology used in this paper]

Following Shvaiko & Euzenat[8], the word "ontology" as used in this paper refers to a system of interrelated terms and their meanings, regardless of the way in which those meanings are represented or described. Under this definition, Table 1 is itself an ontology for use solely by the human reader in understanding the present paper. An ontology may be encoded using a language such as OWL[9] to produce a human- and machine-readable document allowing some level of machine verification, deduction and manipulation.

This paper makes frequent reference to two established data transfer standards in the area of experimental science: the Crystallographic Information Framework (CIF)[10] and the NeXus standard.[11]

Constructing the ontology

In general, a complete data transfer ontology for some field would include all of the distinct concepts and relationships used by scientific software authors in the process of constructing software, including scientific, programming, and format-specific terminology. A clear dividing line may be drawn between the scientific components of the ontology and the remainder, by relying on the assertion that scientific concepts and their relationships are dictated by the real world, not by the particular arrangement in which the data appear; that is, a scientific ontology may be completely specified independent of a particular format.

Furthermore, the scheme presented below assumes that the scientific knowledge informing the ontology is already shared by the software authors implementing the standard. These software authors are one of the main consumers of the ontology, so we do not require the level of machine-readability offered by ontology description languages such as OWL; rather we seek the minimum level of sophistication necessary to describe to a human the correct interpretation of the data, while at the same time including properties that allow coherent expansion and curation of the ontology. Such ontologies should be maximally accessible to experts in the scientific field who are not necessarily programmers or familiar with ontological constructs, in order to allow broad-based contribution and review.

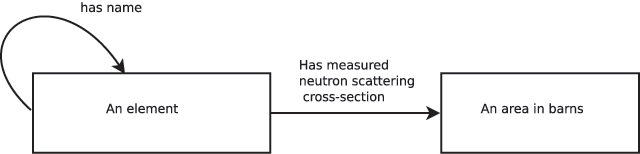

A suitably simple but powerful system for expressing ontologies has been presented by Spivak & Kent[1], who propose using category-theoretic box and arrow diagrams which they call ologs (from "ontology logs"). A concept in an ontology is drawn as an arrow ("aspect") between boxes ("types"): the arrow denotes a mapping between elements in the sets represented by the boxes. A simple ontology written using this approach is shown in Figure 1, which might be used to describe a data file containing the values of neutron cross-section. This olog shows that the concept "measured neutron scattering cross-section" maps every atomic element to a value in barns. We can therefore specify "measured neutron scattering cross-section" as a (domain, function, codomain)[a] triple of ({element names},‘cross-section measurement’,{(r,“barns”) : r ∈ ℝ}). Each of the datanames in our ontology is associated with such a triple, so that the values that the dataname takes are the results of applying the associated function to each of the elements of the domain. Given this formulation of an ontology, it follows that the scientifically useful content of a datafile consists solely of the values taken by the datanames in their codomains, and the matching domain values. In other words, a datafile documents an instance of the olog.
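As a minimal sketch of this formulation, the Python fragment below models a dataname as a (domain, function, codomain) triple and a data file as the values that the function takes on the domain. The class name `Dataname`, its fields, and the numbers are illustrative assumptions, not part of the olog formalism or of any standard.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass(frozen=True)
class Dataname:
    """A dataname as a (domain, function, codomain) triple (hypothetical model)."""
    name: str
    domain: frozenset                      # e.g. a set of element names
    in_codomain: Callable[[object], bool]  # membership test for the codomain

    def instance(self, function: Callable) -> Dict:
        """Apply `function` to every domain element, checking each result."""
        values = {}
        for x in sorted(self.domain):
            y = function(x)
            if not self.in_codomain(y):
                raise ValueError(f"{y!r} is outside the codomain of {self.name}")
            values[x] = y
        return values


def is_barns(value) -> bool:
    # Codomain {(r, "barns") : r real}
    return (isinstance(value, tuple) and len(value) == 2
            and isinstance(value[0], float) and value[1] == "barns")


cross_section = Dataname(
    name="measured neutron scattering cross-section",
    domain=frozenset({"H", "C", "O"}),
    in_codomain=is_barns,
)

# Placeholder numbers for illustration only, not reference data.
measured = {"H": 1.0, "C": 2.0, "O": 3.0}
datafile = cross_section.instance(lambda element: (measured[element], "barns"))
```

Here `datafile` holds exactly the domain values and their matching codomain values, which is all the scientifically useful content that the text attributes to a data file.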


Such a functional formulation of a scientific area is generally trivially available. For example, when the result of applying the function might not be known for all elements of the domain, we can augment the co-domain with a null value.[12] Where the function requires several parameters in order to determine uniquely an element of the co-domain, we simply define the domain to be a tuple of the appropriate length. Where a concept would map a single domain element to multiple values and we cannot invert the direction of the relation, the co-domain can be defined to consist of tuples. The identity function applied to a type also creates a dataname, which identifies objects of that type: for example "element name," "measurement number" or "detector bank identifier."
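The four constructions in this paragraph might be pictured, under the assumption that a mapping is stored as a plain Python dictionary, as follows. All names and values are hypothetical and serve only to show the shape of each case.

```python
from typing import Dict, Optional, Tuple

# Unknown results: the codomain is augmented with a null value (None).
cross_section: Dict[str, Optional[float]] = {"H": 1.0, "C": None}

# Several parameters: the domain is a tuple (measurement number, detector bank).
counts: Dict[Tuple[int, str], int] = {(1, "bank_a"): 120, (1, "bank_b"): 95}

# One domain element, several values: the codomain consists of tuples.
mass_numbers: Dict[str, Tuple[int, ...]] = {"H": (1, 2, 3)}

# Identity function on a type: an identifier dataname such as "element name".
element_name: Dict[str, str] = {element: element for element in cross_section}
```

In every case each domain element still maps to exactly one codomain element, so the functional character of a dataname is preserved.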

It is useful to visualize the collection of datanames that are functions of the same domain as forming a table; a row in this table describes the mappings of a single value from the domain into the co-domains of the other datanames. Indeed, an olog is trivially transformable to a relational database schema[13], in which case datanames are equivalent to database table column names. Note that this close link to relational databases in no way requires us to use a relational database format for data transfer, although a relational database may serve as a useful baseline against which to compare the chosen data format.
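The table view can be sketched in a few lines of Python. The dictionary layout and the dataname spellings below are assumptions made for the example: datanames sharing a domain are gathered into one table, and each domain element yields one row.

```python
from collections import defaultdict

# Hypothetical datanames: name -> (domain type, values keyed by domain element)
datanames = {
    "element:cross_section": ("element", {"H": 1.0, "C": 2.0}),
    "element:symbol":        ("element", {"H": "H", "C": "C"}),
    "bank:distance":         ("bank",    {"b1": 250.0}),
}


def as_tables(datanames):
    """Group datanames by domain; each domain element becomes one row."""
    tables = defaultdict(dict)
    for name, (domain, values) in datanames.items():
        for key, value in values.items():
            # The dataname plays the role of a column name.
            tables[domain].setdefault(key, {})[name] = value
    return dict(tables)


tables = as_tables(datanames)
```

The result has one table per domain type (`"element"` and `"bank"`), which mirrors the relational schema without committing the data file itself to a relational format.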

As the above description is potentially too technical to meet our stated aims of broad accessibility, we may instead describe an olog as a collection of definitions for datanames, where each definition meets the following requirements:

- description: a description of the concept sufficient for a human reader to unambiguously reconstruct a mapping between the one or more related items and the value type. Every unique collection of values for related items must map to a single value of value type.

- value type: the codomain of the function. While the particular discipline will often determine the types of values, a versatile and generally suitable set of core value types might consist of: numeric types (integer, real, complex), finite sets of alternatives (numerical or text), and homogeneous compound structures composed of such values (arrays, matrices). Units should be specified where relevant. Free-form text is useful when constructing an opaque identifier (e.g., sample name), although in other contexts it will not be machine interpretable.

- related items: dataname(s) that this item maps from (collectively forming the domain of the function).[b] This includes all names which participate in determining the behavior of the mapping; for example, it includes all parameters influencing a model calculation. If it is not possible to identify a unique value for the dataname given only the specific values of the datanames in this list, then the related items list is incomplete. In order to completely insulate users of the specification from expansions in the ontology, once defined this list of related items cannot change (see the next section).
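The three parts of a definition, and the completeness test on the related items list, could be captured as in the following sketch. The `Definition` record and the function `related_items_complete` are hypothetical names introduced for this example, and the sample rows contain placeholder values.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class Definition:
    name: str
    description: str
    value_type: str                 # e.g. "real, barns"
    related_items: Tuple[str, ...]  # datanames forming the domain


def related_items_complete(definition: Definition,
                           rows: List[Dict[str, object]]) -> bool:
    """True if every unique combination of related-item values maps to one value."""
    seen = {}
    for row in rows:
        key = tuple(row[item] for item in definition.related_items)
        value = row[definition.name]
        if seen.setdefault(key, value) != value:
            return False  # same domain element, two different values
    return True


rows = [
    {"element": "H", "mass_number": 1, "cross_section": 1.0},
    {"element": "H", "mass_number": 2, "cross_section": 2.0},
]
by_element = Definition("cross_section", "placeholder", "real, barns", ("element",))
by_isotope = Definition("cross_section", "placeholder", "real, barns",
                        ("element", "mass_number"))
```

With these rows, `related_items_complete(by_element, rows)` is false because the element alone does not determine the value, whereas the check succeeds for `by_isotope`.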

As expected, an ontology constructed according to the above prescription is completely decoupled from the file format. Any discipline specialist able to provide the above information can contribute to the ontology regardless of programming skill, thus broadening the base of contributors.

Although our objective is to provide an ontology that is easy for non-programmers to contribute to, there is some advantage to producing a computer-readable version of the ontology, which might contain additional validation information, e.g., range limits on values that are otherwise only provided in human-readable form (perhaps implicitly) in a plain-text ontology. An olog could, for example, be encoded in the popular machine-readable OWL formalism by specifying an OWL "class" for each olog "type" and creating OWL "functional object properties" for each olog aspect (that is, a dataname is an OWL functional object property linking two OWL classes). OWL terms for restricting value ranges would be used to further describe each OWL class. In general, a restricted and well-chosen vocabulary for the machine-readable ontology will also prompt the ontology author to include useful information that might otherwise be assumed (for example, restricting a range to positive non-zero numbers). An extended example later shows how information presented in a machine-readable ontology can be leveraged for automatic format conversion.
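One way to picture the OWL encoding described above is to emit Turtle text with one `owl:Class` per type and one functional object property per aspect. The generator below is only a sketch: the function name `olog_to_turtle`, the `http://example.org/olog#` namespace and the type and aspect names are assumptions, and value-range restrictions are omitted.

```python
PREFIXES = (
    "@prefix owl: <http://www.w3.org/2002/07/owl#> .\n"
    "@prefix rdfs: <http://www.w3.org/2000/01/rdf-schema#> .\n"
    "@prefix : <http://example.org/olog#> .\n"  # hypothetical namespace
)


def olog_to_turtle(types, aspects):
    """Render olog types and aspects as Turtle.

    `types` is an iterable of type names; `aspects` maps a dataname to a
    (domain type, codomain type) pair. Names must be valid Turtle local names.
    """
    lines = [PREFIXES]
    for type_name in types:
        lines.append(f":{type_name} a owl:Class .")
    for name, (domain, codomain) in aspects.items():
        lines.append(
            f":{name} a owl:ObjectProperty, owl:FunctionalProperty ;\n"
            f"    rdfs:domain :{domain} ;\n"
            f"    rdfs:range :{codomain} ."
        )
    return "\n".join(lines)


turtle = olog_to_turtle(
    types=["Element", "CrossSectionInBarns"],
    aspects={"measuredCrossSection": ("Element", "CrossSectionInBarns")},
)
```

Printing `turtle` gives a small document that an OWL-aware tool could load, while the plain-text definitions remain the primary form for human contributors.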

Note that a definition may also stipulate default values. As a reasonably large ontology may contain several alternative routes for reaching a given value (indeed, the notion of path equivalence is a defining feature of ologs and mathematical categories), some care should be taken when stating default values to ensure that they match up with those stated for dependent items.

Expanding ontologies

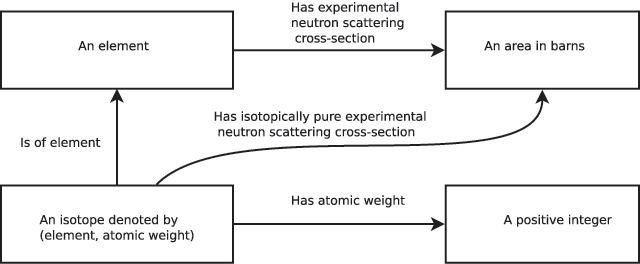

To be useful in the long term, a scientific ontology must be able to gracefully incorporate new scientific discoveries and concepts. In our simple ontological scheme, such expansion is accomplished by addition of new types and aspects. Addition of a new aspect between existing types is clearly not problematic. Addition of a new type (accompanied by an identifier dataname) may, however, include the assertion that a previously-defined dataname is additionally or instead dependent on the newly-defined type. This would potentially impact on all software produced prior to the change, as calculations in older software would not take into account the effect of the new type. As such an impact is almost always undesirable, a fundamental rule should be adopted that the list of dependencies of a dataname may never change. Instead, in this scenario a duplicate of the original dataname should be introduced that includes the new dependency, with the addition of any appropriate functional mappings involving the new and old datanames. For example, consider our simple ontology of Figure 1 following the "discovery" that neutron scattering cross-section depends both on element and atomic weight (Figure 2). Rather than redefining our "experimental neutron scattering cross-section" domain, which consisted solely of the element name, the new concept of "an isotope" (that is, element combined with atomic weight to form a pair) is introduced. A new dataname "isotopically pure experimental neutron scattering cross-section"[c] maps isotope to cross-sectional area. Note that while it is inadvisable to rename or change the dependencies of the old dataname, the descriptive part of the old dataname’s definition can certainly be updated to explain that this dataname refers to the scattering cross-section for natural isotope abundance (if that is indeed what was originally measured).
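The rule that dependencies never change, while descriptions may, can be enforced mechanically. The following sketch assumes a hypothetical `Ontology` class that stores, for each dataname, its related items and its description.

```python
class Ontology:
    """Toy ontology store enforcing immutable dependency lists (hypothetical)."""

    def __init__(self):
        self._related = {}
        self._descriptions = {}

    def define(self, name, related_items, description):
        related = tuple(related_items)
        if name in self._related and self._related[name] != related:
            raise ValueError(
                f"dependencies of {name!r} may never change; "
                "introduce a new dataname instead"
            )
        self._related[name] = related
        self._descriptions[name] = description  # descriptions may be updated

    def related_items(self, name):
        return self._related[name]


ontology = Ontology()
ontology.define("experimental neutron scattering cross-section",
                ["element name"], "cross-section of an element")

# Expansion: a new dataname carries the new dependency ...
ontology.define("isotopically pure experimental neutron scattering cross-section",
                ["isotope"], "cross-section of a single isotope")

# ... and only the description of the old dataname is refined.
ontology.define("experimental neutron scattering cross-section",
                ["element name"], "cross-section at natural isotope abundance")
```

An attempt to redefine the old dataname with `["element name", "atomic weight"]` raises `ValueError`, so software written against the earlier definition is never silently invalidated.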


Managing large ontologies

Any reasonably large ontology will benefit from human-friendly organizational tools. The following three suggestions may be useful:

- Adopting a naming convention <type><separator><name> (e.g., "measurement:intensity"), such that all datanames mapping from the same type have the same first component in the name. This makes it easier to find datanames, and is also useful for machine-readable ontologies.

- Defining type "groups," where all types of a given "group" are guaranteed to have the same minimum set of datanames mapping from them.[d] This is most useful in translation scenarios where the ontology writers cannot control the concepts found in foreign data files. For example, a legacy standard may separate "sample manipulation axis" and "detector axis"; an alternative ontology might simply define an "axis" type. A proper description of the contents of the legacy data file requires defining datanames for the two types of axis, in which case assigning an axis "group" to all "axis," "sample manipulation axis" and "detector axis" types saves redefining all of the common attributes of an axis for each type. Note that we should not use the format adapter to perform such legacy manipulations as, by design, we wish it to contain no scientific knowledge (see below).

- Separating ontologies into a "core" or "general" ontology, and subsidiary ontologies that define transformations of items in the core ontology. This allows datanames created for legacy data files whose values are subsets of values of datanames in the core ontology to be separated out, reducing clutter and repetition.
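The first two suggestions can be combined in a short sketch. The separator, the group contents (`vector`, `offset`) and the type spellings are assumptions chosen for the example, not taken from CIF or NeXus.

```python
SEPARATOR = ":"

# Hypothetical group: every type in the group has at least these datanames.
groups = {"axis": ["vector", "offset"]}

type_to_group = {
    "axis": "axis",
    "sample_manipulation_axis": "axis",
    "detector_axis": "axis",
}


def group_datanames(type_to_group, groups):
    """Expand group membership into <type><separator><name> datanames."""
    return sorted(
        f"{type_name}{SEPARATOR}{name}"
        for type_name, group in type_to_group.items()
        for name in groups[group]
    )


def datanames_for_type(datanames, type_name):
    """Find all datanames mapping from one type via the naming convention."""
    return [d for d in datanames if d.partition(SEPARATOR)[0] == type_name]


all_names = group_datanames(type_to_group, groups)
```

The common attributes of an axis are written once in `groups`, and the naming convention makes it straightforward to list every dataname that maps from a given type.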

Units

The ontology must specify units in order to avoid ambiguity. Not specifying units is equivalent to allowing the elements of a codomain to be represented as a pair: (real value, units), where units can have different values (e.g., "mm" or "cm"), breaking our basic rule that each element of the domain maps to a single element of the co-domain: for example, "1 cm" is the same as "0.01 m" and therefore any value that maps to "(1, cm)" maps also to "(0.01, m)". The units of measurement are therefore a characteristic of the type as a whole, that is, units should always be specified for olog types where units are defined. Where multiple units for a single concept are in common use and the community cannot agree on a single choice, separate types must be defined together with separate datanames mapping to those types.
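The ambiguity described here, and its resolution by fixing units per type, might be illustrated as follows. The conversion table, function names and values are assumptions for the sketch.

```python
# Without fixed units, one physical length has several (value, unit) pairs.
TO_METRES = {"m": 1.0, "cm": 0.01, "mm": 0.001}


def to_metres(value, unit):
    return value * TO_METRES[unit]


same_length = to_metres(1.0, "cm") == to_metres(0.01, "m")

# With units fixed per type, each dataname has exactly one value per domain
# element. Where two units are in common use, two types and two datanames
# are defined, linked by an explicit mapping.
detector_distance_mm = {"scan_1": 250.0}  # type: length in millimetres


def mm_to_cm(value_mm):
    return value_mm / 10.0


detector_distance_cm = {                   # type: length in centimetres
    scan: mm_to_cm(value) for scan, value in detector_distance_mm.items()
}
```

Because `same_length` is true, a codomain of (value, unit) pairs would let one domain element map to two distinct pairs, which is why the unit belongs to the type rather than to the individual value.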

Uncertainties

Standard uncertainties (su) are sometimes included as part of the data value (for example, in the CIF standard), resulting in a data value that is a pair of numbers. This may be convenient in situations where functions of the data value need to propagate errors. Alternatively, the su can easily be assigned a separate dataname as the mapping from measurement to su is unambiguous. In such a case, when the (measurement, su) pair is required, an additional dataname corresponding to this is defined.

The dataname list

A data bundle contains the values for some set of datanames. The list of datanames in the bundle, together with the ontology, completely specifies the semantic content of the transferred data. A data bundle may be further characterized in practice by indicating which datanames (if any) must be unvarying within the bundle. A single value for a constant-valued dataname can be supplied in the file, and then datanames that are dependent on such constant-valued datanames do not need to explicitly link their values with the corresponding constant-valued dataname value, leading to simplification in data file structure. In the following, for simplicity "data file" and "data bundle" are used interchangeably, although in general a data file may include multiple data bundles; in the CIF and NeXus contexts the bundles inside the data files are called "data blocks" and "entries" respectively.

The specification of such dataname lists for a bundle may evolve over time in three ways: (i) addition of single-valued datanames; (ii) addition of one or more multiple-valued datanames; (iii) change of a single-valued dataname to a multi-valued dataname. The considerable difficulty involved in distributing updated software in a timely manner requires that such evolution have no impact on already-existing software; that is, if the dataname list contained within a file produced according to the standard is updated, that file must remain compatible with software that reads older files. The first two cases meet this requirement given the stipulation on ontology expansion (discussed in a previous section). If, however, a single-valued dataname becomes multi-valued as in case (iii), software that expects a single value will almost certainly fail. This failure is usually due to the fact that, in addition to a dataname becoming multi-valued, a series of other values for dependent datanames must now be connected to the appropriate value of the newly multi-valued dataname using some format-specific mechanism. Note that a simple algorithmic transformation exists to transform the new-style files to old-style files: for every value of the newly multi-valued dataname, a data bundle with this single value for the dataname is produced. This means that file reading software could, in theory, automatically handle even this type of evolution in the dataname list. Software written in this way appears to be rare in practice, however, presumably because moving to a multi-valued dataname will often imply a different type of analysis and therefore significantly different or upgraded software, which is then written to handle older-style files internally as a special case.
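The transformation from new-style to old-style files can be sketched as below, assuming a bundle is represented as a dictionary of constant-valued datanames plus a list of rows. The function name `split_bundle`, this representation and the example datanames are all hypothetical.

```python
def split_bundle(constants, rows, dataname):
    """Produce one old-style bundle per value of the newly multi-valued dataname.

    `constants` holds single-valued datanames; each entry of `rows` carries a
    value for `dataname` together with the values that depend on it.
    """
    bundles = {}
    for row in rows:
        key = row[dataname]
        bundle = bundles.setdefault(
            key,
            # In each output bundle the dataname is single-valued again.
            {"constants": {**constants, dataname: key}, "rows": []},
        )
        bundle["rows"].append({k: v for k, v in row.items() if k != dataname})
    return list(bundles.values())


new_style_rows = [
    {"wavelength": 1.0, "frame": 1, "intensity": 10},
    {"wavelength": 1.0, "frame": 2, "intensity": 12},
    {"wavelength": 1.5, "frame": 3, "intensity": 7},
]
old_style = split_bundle({"sample": "s1"}, new_style_rows, "wavelength")
```

Each element of `old_style` can then be handed to software that expects a single wavelength per bundle, at the cost of repeating the unchanged constant-valued datanames.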

To successfully transfer data files automatically, authors of both file creation and file reading software must agree on the contents of the dataname list to the extent that the file reading software requires a subset of the list placed in the file by the file creation software. One simple but initially time-consuming strategy to achieve such coordination is for the file authoring software to place into output files as many data items from the ontology as are available; if this is insufficient for a given application, the problem lies with the experiment or ontology rather than with the authoring software. Another form of coordination arises based on the use case: a sufficiently well-understood use case will imply a set of concepts from the ontology on which both reader and writer will independently agree. For example, the use case "display a single-crystal atomic structure" immediately implies a list of crystal structure datanames, which can be encoded into an output file by structural databases and then read and used successfully by structural display programs, without any direct coordination between the parties. Similar scenarios determine the contents of dataname lists found in ad-hoc standards based around input and output files for particular software packages.
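The agreement condition amounts to a subset test on dataname lists, as in this sketch. The datanames are invented for the example and do not come from any published dictionary.

```python
def missing_datanames(required, provided):
    """Datanames the reading software needs but the file does not supply."""
    return sorted(set(required) - set(provided))


def can_read(required, provided):
    return not missing_datanames(required, provided)


# Hypothetical lists for the "display a crystal structure" use case.
written_by_database = {"cell:length_a", "cell:length_b", "cell:length_c",
                       "atom:label", "atom:fract_x"}
needed_by_viewer = {"cell:length_a", "atom:label", "atom:fract_x"}
needed_by_refinement = {"cell:length_a", "reflection:intensity"}
```

Here the viewer's requirements are met, while `missing_datanames(needed_by_refinement, written_by_database)` reports the one dataname that the file would need to carry for the second use case.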

Long-term archiving is an important special case, as the specific use to which the data retrieved from the archive will be put is not known. Archives therefore aim to include as much information as possible; database deposition software acts to enforce the corresponding dataname list (even if this requirement is not phrased in terms of datanames), and the feedback loop via database depositors to the authors of their software eventually creates a stable dataname list. The IUCr "CheckCIF" program[14] works in a similar way to enforce a dataname list for publication of crystallographic results.

Choosing a file format

Given that our data is simply an instance of our olog, a file format at minimum must allow (i) association of datanames with sets of co-domain values, and (ii) matching co-domain values to the domain values for each dataname. Any other capabilities provided by the format are not ontologically relevant, but may have important practical implications, for example efficient transfer or storage, or suitability for long-term archiving. Allowing for expansion and variety in dataname lists from the outset is important: as discussed above, this means allowing for the possible addition of new single- and multiple-valued datanames, where the multiple-valued datanames may be independent of the already-included datanames (in database terms, a new table might be required).[e] If such a format is not chosen initially, the alternative is to move to a new format when faced with a use case requiring, e.g., more columns than the format allows. Although integration of a new format into a data transfer specification is dramatically simplified by the present approach, the impact of adopting a new format on the distributed software ecosystem that would have developed around the previous format would still be considerable, meaning that the choice of a flexible format capable of containing multiple independently varying datanames (i.e., multiple tables in database terms) is strongly advisable when designing any new standard.
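The two minimum requirements can be made concrete with a deliberately simple layout, here serialized as JSON using Python's standard library. The layout (one group of equal-length columns per domain type, with rows matched by position) is an assumption for illustration and is not proposed as a transfer format.

```python
import json

# (i) Each dataname is associated with its list of values.
# (ii) Values are matched to domain values by position within a table.
bundle = {
    "element": {
        "element:name": ["H", "C"],
        "element:cross_section": [1.0, 2.0],
    },
    # A second, independently varying table can be added later.
    "bank": {
        "bank:id": ["b1"],
        "bank:distance": [250.0],
    },
}


def lookup(bundle, table, key_name, key, dataname):
    """Return the value of `dataname` for the row whose `key_name` equals `key`."""
    columns = bundle[table]
    row = columns[key_name].index(key)
    return columns[dataname][row]


text = json.dumps(bundle)      # what would be written to the file
restored = json.loads(text)    # what reading software would recover
value = lookup(restored, "element", "element:name", "C", "element:cross_section")
```

Because the layout already admits several tables, adding a new multiple-valued dataname that is independent of the existing ones does not require a change of format.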

Certain types of value found in the ontology may not be easily or efficiently represented by a given file format: the format may not provide a structure that can efficiently represent a matrix, for example. Just as for dataname location issues, the ontology is impervious to such data representation issues; rather, it is up to the authors of the transfer specification to choose a format which is the best fit to the dataname list which their use case requires.[f]

Notes

- [a] Note that "domain" and "codomain" are used throughout in the mathematical sense, as the set on which a function operates, and the set of resulting values, respectively.

- [b] More strictly, datanames, the codomains of which this dataname maps from.

- [c] A much shorter dataname such as iso.cross is, of course, also acceptable.

- [d] OWL class-subclass relations could be used for this purpose.

- [e] Among others, HDF5, the CIF format, relational databases and XML fulfill this requirement.

- [f] Interestingly, heterogeneous lists of values are unlikely to be needed in data files, as any processing of such a list will by definition require separate treatment for each element, and consequently a distinct and definable meaning is available for the individual components which can thus be included in their own right within the ontology. While the ontology itself will still contain such heterogeneous tuples (e.g., for a dataname that is a function of multiple other datanames with differing types), the data file itself does not need to contain this tuple so long as the tuple can be constructed from its individual components on input, if necessary, by the programmer.

References

1. Spivak, D.I.; Kent, R.E. (2012). "Ologs: A categorical framework for knowledge representation". PLoS One 7 (1): e24274. doi:10.1371/journal.pone.0024274. PMC3269434. PMID 22303434. https://www.ncbi.nlm.nih.gov/pmc/articles/PMC3269434.

2. Boulton, G. (2012). "Open your minds and share your results". Nature 486 (7404): 441. doi:10.1038/486441a. PMID 22739274.

3. Kroon-Batenburg, L.M.; Helliwell, J.R. (2014). "Experiences with making diffraction image data available: What metadata do we need to archive?". Acta Crystallographica Section D Biological Crystallography 70 (Pt. 10): 2502–9. doi:10.1107/S1399004713029817. PMC4187998. PMID 25286836. https://www.ncbi.nlm.nih.gov/pmc/articles/PMC4187998.

4. "NXmx – Nexus: Manual 3.1 documentation". NeXusformat.org. NIAC. 2015. http://download.nexusformat.org/doc/html/classes/applications/NXmx.html.

5. Bernstein, H.J. (2006). "Classification and use of image data". International Tables for Crystallography G (3.7): 199–205. doi:10.1107/97809553602060000739.

6. "Detectors & Formats recognized by the HKL/HKL-2000/HKL-3000 Software". HKL Research, Inc. 2016. http://www.hkl-xray.com/detectors-formats-recognized-hklhkl-2000hkl-3000-software.

7. Otero-Cerdeira, L.; Rodríguez-Martínez, F.J.; Gómez-Rodríguez, A. (2015). "Ontology matching: A literature review". Expert Systems with Applications 42 (2): 949–971. doi:10.1016/j.eswa.2014.08.032.

8. Shvaiko, P.; Euzenat, J. (2013). "Ontology matching: State of the art and future challenges". IEEE Transactions on Knowledge and Data Engineering 25 (1): 158–176. doi:10.1109/TKDE.2011.253.

9. Hitzler, P.; Krötzsch, M.; Parsia, B. et al. (11 December 2012). "OWL 2 Web Ontology Language Primer (Second Edition)". W3C Recommendations. W3C. https://www.w3.org/TR/2012/REC-owl2-primer-20121211/.

10. Hall, S.R.; McMahon, B., ed. (2005). International Tables for Crystallography Volume G: Definition and exchange of crystallographic data. Springer Netherlands. doi:10.1107/97809553602060000107. ISBN 9781402042904.

11. Könnecke, M.; Akeroyd, F.A.; Bernstein, H.J. et al. (2015). "The NeXus data format". Journal of Applied Crystallography 48 (Pt. 1): 301–305. doi:10.1107/S1600576714027575. PMC4453170. PMID 26089752. https://www.ncbi.nlm.nih.gov/pmc/articles/PMC4453170.

12. Henley, S. (2006). "The problem of missing data in geoscience databases". Computers & Geosciences 32 (9): 1368–1377. doi:10.1016/j.cageo.2005.12.008.

13. Spivak, D.I. (2012). "Functorial data migration". Information and Computation 217: 31–51. doi:10.1016/j.ic.2012.05.001.

14. Strickland, P.R.; Hoyland, M.A.; McMahon, B. (2005). "5.7 Small-molecule crystal structure publication using CIF". In Hall, S.R.; McMahon, B. International Tables for Crystallography Volume G: Definition and exchange of crystallographic data. Springer Netherlands. pp. 557–568. doi:10.1107/97809553602060000107. ISBN 9781402042904.

Notes

This presentation is faithful to the original, with only a few minor changes to presentation. In some cases important information was missing from the references, and that information was added. The original article lists references alphabetically, but this version — by design — lists them in order of appearance. Footnotes have been changed from numbers to letters as citations are currently using numbers.