Journal:A quality assurance discrimination tool for the evaluation of satellite laboratory practice excellence in the context of European regulatory meat inspection for Trichinella spp.

| Full article title | A quality assurance discrimination tool for the evaluation of satellite laboratory practice excellence in the context of European regulatory meat inspection for Trichinella spp. |
|---|---|
| Journal | Foods |
| Author(s) | Villegas-Pérez, José; Navas-González, Francisco J.; Serrano, Salud; García-Viejo, Fernando; Buffoni, Leandro |
| Author affiliation(s) | University of Cordoba, Junta de Andalucia |
| Primary contact | fjnavas at uco dot es |
| Year published | 2023 |
| Volume and issue | 12(22) |
| Article # | 4186 |
| DOI | 10.3390/foods12224186 |
| ISSN | 2304-8158 |
| Distribution license | Creative Commons Attribution 4.0 International |
| Website | https://www.mdpi.com/2304-8158/12/22/4186 |
| Download | https://www.mdpi.com/2304-8158/12/22/4186/pdf?version=1700532935 (PDF) |

This article should be considered a work in progress and incomplete. Consider this article incomplete until this notice is removed.


Abstract

Trichinellosis is a parasitic foodborne zoonotic disease transmitted by ingestion of raw or undercooked meat containing the first larval stage (L1) of the nematode. To ensure the quality and safety of food intended for human consumption, meat inspection for detection of Trichinella spp. larvae is a mandatory procedure per European Union (E.U.) regulations. The implementation of quality assurance (QA) practices in laboratories that are responsible for Trichinella spp. detection is essential given that this parasite is still a major threat to public health, and it is included in List A of Annex I, Directive 2003/99/EC, which determines the agents to be monitored on a mandatory basis.

A quality management system (QMS) was applied to slaughterhouses and game-handling establishments conducting Trichinella spp. testing without official accreditation but under the supervision of the relevant authority. This study aims to retrospectively analyze the outcomes of implementing the QMS in slaughterhouses and game-handling establishments involved in Trichinella testing in southern Spain. Canonical discriminant analyses (CDAs) were performed to design a tool enabling the classification of satellite laboratories (SLs) while determining whether linear combinations of measures of QA-related traits describe within- and between-SL clustering patterns. The participation of two or more auditors improves the homogeneity of the results deriving from audits. However, when training expertise ensures that such levels of inter-/intralaboratory homogeneity are reached, auditors can perform single audits and act as potential trainers for other auditors. Additionally, technical procedure issues were the primary risk factors identified during audits, which suggests that they should be considered a critical control point (CCP) (for purposes of Hazard Analysis and Critical Control Point [HACCP] evaluations) within the QMS.

Keywords: trichinellosis, Trichinella, laboratories, food safety, quality management system, canonical discriminant analysis

Introduction

Trichinellosis is a worldwide foodborne zoonotic disease caused by the ingestion of the helminth Trichinella spp. Pigs, both domestic and wild, are the main reservoirs, and human infection is primarily linked to the consumption of raw or undercooked meat from infected animals that have not undergone veterinary inspection. [1] Currently, this parasite continues to pose a significant threat to public health, and it is included in List A of Annex I, Directive 2003/99/EC of the European Union (E.U.) [2] on the surveillance of zoonoses and zoonotic agents, which determines the agents that have to be monitored on a mandatory basis. According to the E.U., 117 human cases were reported in 2020, with 99 of the 117 cases acquired within the E.U. [3]

The detection of Trichinella spp. larvae during meat inspection is performed using the magnetic stirrer method of the artificial digestion technique. The method is considered the "gold standard" for Trichinella spp. detection, being "capable of consistently detecting Trichinella larvae in meat at a level of sensitivity that is recognized to be effective for use in controlling animal infection and preventing human disease." [4] Also, it is a mandatory procedure according to regulation EU 2015/1375 [5], which lays down specific rules on official controls for Trichinella in meat. This regulation also specifies equivalent techniques for Trichinella spp. larvae detection in analyzed samples.

As the International Commission on Trichinellosis (ICT) states [4], the implementation of quality assurance (QA) practices in laboratories performing Trichinella spp. detection is of the utmost importance, and the probability of detecting a positive case, if present, must be high (>95% or >99%) in order to ensure that there is a low or negligible risk of transmission to humans through the food chain. [6] Laboratories accredited to ISO/IEC 17025 [6] for Trichinella digestion testing are required to use validated diagnostic methods to confirm that the methods are fit for the intended use. In 2019, the ICT recommended the adoption of system-wide practices for QA. [4] However, the minimum required standards for QA are determined and implemented by each local public health authority in E.U. member states. European regulation EU 625/2017 [7], which places controls on testing and other official activities performed to ensure the application of food and feed law, as well as rules on animal health and welfare, plant health, and plant protection products, states that the regulated control of Trichinella spp. larvae presence during meat inspection should be conducted in accredited laboratories designated by the competent authority. Furthermore, laboratories solely engaged in Trichinella spp. detection in meat using the methods outlined in EU 2015/1375 [5] may be exempt from accreditation if they operate under the supervision of the competent authority.

The activities performed in internal laboratories (e.g., facilities within slaughterhouses and game-handling establishments) require continuous analysis and evaluation by the competent authority. Assessing critical control points (CCPs), for example by using a hazard analysis and critical control points (HACCP) approach, involves various aspects such as personnel training, diagnostic procedure performance, and document registration, among others. Additionally, continuous monitoring of each CCP is crucial for effective Trichinella spp. detection in infected meat. [8]

In 2011, the relevant authorities of the Andalusia region in southern Spain developed a quality management system (QMS) based on ISO/IEC 17025. [6] This QMS was applied to slaughterhouses and game-handling establishments conducting Trichinella spp. testing without official accreditation but under the supervision of the relevant authority. The QMS not only enabled the implementation of high standards in Trichinella spp. analysis but also facilitated the identification and correction of practices that did not meet the minimum required standards.

Canonical discriminant analysis (CDA) has been proposed as a statistical alternative to tailor HACCP plans that are to be applied to other substances and products for human consumption, such as bottled water, and allows for a comprehensive comparison of practices implemented across different facilities or brands. It provides valuable insights into the specific impact of combinations of factors, which can serve as pivotal points in discerning variations in practice application among these facilities or brands. Additionally, CDA facilitates the exploration of similarities and dissimilarities in the practices adopted by these facilities, unveiling clustering patterns that aid in effectively addressing potential issues. [9] To enhance the reliability of these statistical tools, CDA methods are often complemented by cross-validation techniques. This approach not only helps in pinpointing potential problems along the operational chain but also aids in characterizing the nature and associated risks of these issues. [9]

Therefore, the objective of this study is to retrospectively analyze the outcomes of implementing the QMS in slaughterhouses and game-handling establishments involved in Trichinella testing in southern Spain. This translatable discriminant tool permits us to assess the development of QA practices across internal laboratories using CDA and follow-up cross-validation methods. In turn, the outcomes of the present paper may provide insight into which items or issues in the implementation of the QMS act as critical discriminant points across laboratories, thus assisting in the determination of the Trichinella risk that their occurrence and frequency may imply for the human food supply chain.

Material and methods

Study units and period: Satellite laboratories in Southern Spain

The present study evaluates the implementation of a QMS based on UNE-EN ISO/IEC 17025 [6] in a total of 18 satellite laboratories (SLs), seven of which were in the province of Cordoba (four in slaughterhouses and three in game-handling establishments) and 11 in the province of Seville (nine in slaughterhouses and two in game-handling establishments). A description of the particular activity carried out in each SL can be found in Table 1.


These SLs were chosen upon a selection criterion for the laboratory services (LSs) based on the information provided, either quantitatively (e.g., number of audits conducted over a period of time) or qualitatively, which refers to the interest that the LS may have based on factors such as their activity (e.g., pig slaughter, game meat processing related to wild boars, or equine slaughter) or ownership (i.e., public or private). With this approach as a starting point, SLs were selected for which data from at least four internal audits were available from the period of 2012 to 2018.

The SLs, being facilities within slaughterhouses and game-handling establishments, function as internal laboratories of the establishment, equipped with the necessary materials and resources to perform the analytical determination in question. Ensuring the reliability of the result is an essential requirement for accepting its validity, and according to E.U.'s regulatory requirements found in EU 2015/1375 [5], it must be supported by a QMS as a guarantee of that reliability.

The establishments opted for the alternative of tutelage, and under the supervision of the Official Veterinary Service (SVO), they initiated a process to adapt the facilities where the trichinellosis investigation was carried out and acquire the necessary materials and resources to adapt to a new approach to research on Trichinella spp. This was carried out under a QA system based on the UNE-EN ISO/IEC 17025 standard [6] and under the supervision of an accredited laboratory, designated as the reference laboratory (i.e., public health laboratory or PHL), which depended on the competent authority. The designed system relied on the necessary participation of the economic operator (EO), the SVO, and the PHL, with the collaboration of all three being essential for the effective and proper functioning of the QMS.

Study sample

A total of 3,023 data points were considered, corresponding to each deviation type and subtype found for each SL per year from 2012 to 2018.

Audits

The criteria considered were established in Commission Regulation No. 2075/2005 of 5 December 2005 [10], which established specific rules for official controls (audits) for the presence of Trichinella in meat, and its amendments, in force at the beginning of this initiative, and which was repealed by Regulation 2015/1375. [5]

A checklist of 36 questions or issues was used to obtain the basic information from each SL and assess their initial conditions before the implementation of the QMS.

The scope of the assessment consisted of evaluating aspects related to:

- Facilities requirements: Premises where the investigation of Trichinella larvae is carried out.

- Technique requirements (assay): Investigation of Trichinella larvae via hydrochloric-peptic digestion in fresh meat samples from controlling housing pigs (except for animals coming from a holding or a compartment officially recognized as applying controlled housing conditions in accordance with Annex IV—Article 3, (EU) 2015/1375) [5] and wild boars, using the method of collective sample digestion with a magnetic stirrer (reference method).

- Activity data and records: Existing records on the assay and its results, quality, and traceability.

For the QMS investigated here, exclusive documents (i.e., procedures) were developed, and others were designed for information recording purposes (i.e., formats), which had an individual coding but allowed for differentiation of each participating establishment. There was a necessary correlation between the aspects covered by the UNE-EN ISO/IEC 17025 standard [6] and the developed documentation.

The audits were carried out using a checklist of requirements and a questionnaire regarding the dependencies and technical requirements. Subsequently, a report on the audit was prepared, documenting the observed findings. The findings could be categorized as deviations (if they represented non-compliance or potential non-compliance in the future, for example), and findings that could contribute to strengthening the QMS and be considered improvement actions could also be identified. Deviations could be classified as "observations" (OBS) or "non-conformities" (NC), with NCs having greater negative relevance in terms of the commitment to implementing the QMS. Both types required corrective actions, the effectiveness of which was subsequently verified, and all actions taken were documented.

The provided data inform about the situation of the SLs at the time of the audit and allow for an assessment of the degree of implementation and commitment to the QMS, as well as the reliability that should support the test result.

Deviation classification and coding

The classification of deviations was carried out to prioritize them based on importance (OBS vs. NC), aspect (technical requirement vs. management requirement), and affected content (execution of the technique; equipment, material, and reagents; qualification; quality assurance; records, formats, and other documents and their control; and others). This classification aims to establish a system of grouping findings that facilitates their identification and possible significance regarding the reliability of the result, which is the main objective of the analysis, and coincides with Gajadhar et al. [4], who affirm that the quality and accuracy of Trichinella spp. testing is dependent on the proper performance of the digestion method, the appropriate sample collection based on the target species, adequate facilities, equipment and consumables, accurate verification of findings, and proper documentation of the results. Thus, the goal is to identify findings as potential deviations and classify them based on their importance within the affected area.

Out of all the recorded deviations, a classification into two main groups was performed to create a set of criteria that helps classify the findings. These two groups are based on whether they affect technical aspects (related to the technical execution and its immediate environment of influence) or management and documentation aspects (related to records and other documents, whose existence is necessary but does not compromise the actual execution of the test).

The documented findings may be related to technical requirements or management requirements and may have different intensities, measured by the degree to which they affect the validity of the activity results (whether they question their validity or not), reveal serious non-compliance with management requirements, or occur in isolated or sporadic instances without affecting the activity results or questioning the consistency in the provision of activities. Depending on the degree of impact, these deviations from regulatory requirements can be classified as OBSs or NCs. The decision to consider a finding an OBS or NC, regardless of the affected scope (technical requirement or management requirement), depends on the significance or severity that the auditor believes it has in compromising the outcome.

After this initial classification, a classification was carried out based on the affected scope (technical requirement vs. management requirement), and within these scopes, different components can be affected. Six components were identified, which are linked to technique and/or test information; equipment, materials, reagents; qualification; quality assurance; records, forms, and other documents; and other components. This categorization of components allows for the establishment of the same number of types of findings (six types, identified by numbers "1" through "6"), which are further broken down into subtypes (up to a maximum of seven, identified by letters from "a" through "g"; type 3 does not have subtypes). The defined types include the following content, which provides guidance on the findings included within their scope. This classification allows for the differentiation of 12 possible OBSs and 12 possible NCs, each with their respective subtypes, which reflect the degree of compliance with the QMS and the intensity or scope of the described deviation.

Grouping the findings into categories based on areas and types facilitates their classification, ensuring a regulated criterion and improving uniformity in the evaluation of findings. This classification of deviations based on areas and types should be complemented by considering the importance of deviations from the perspective of issuing a valid and reliable result. It expands the dimension of the QMS beyond the analytical result itself, as not all types of findings equally affect the concepts of validity and reliability.

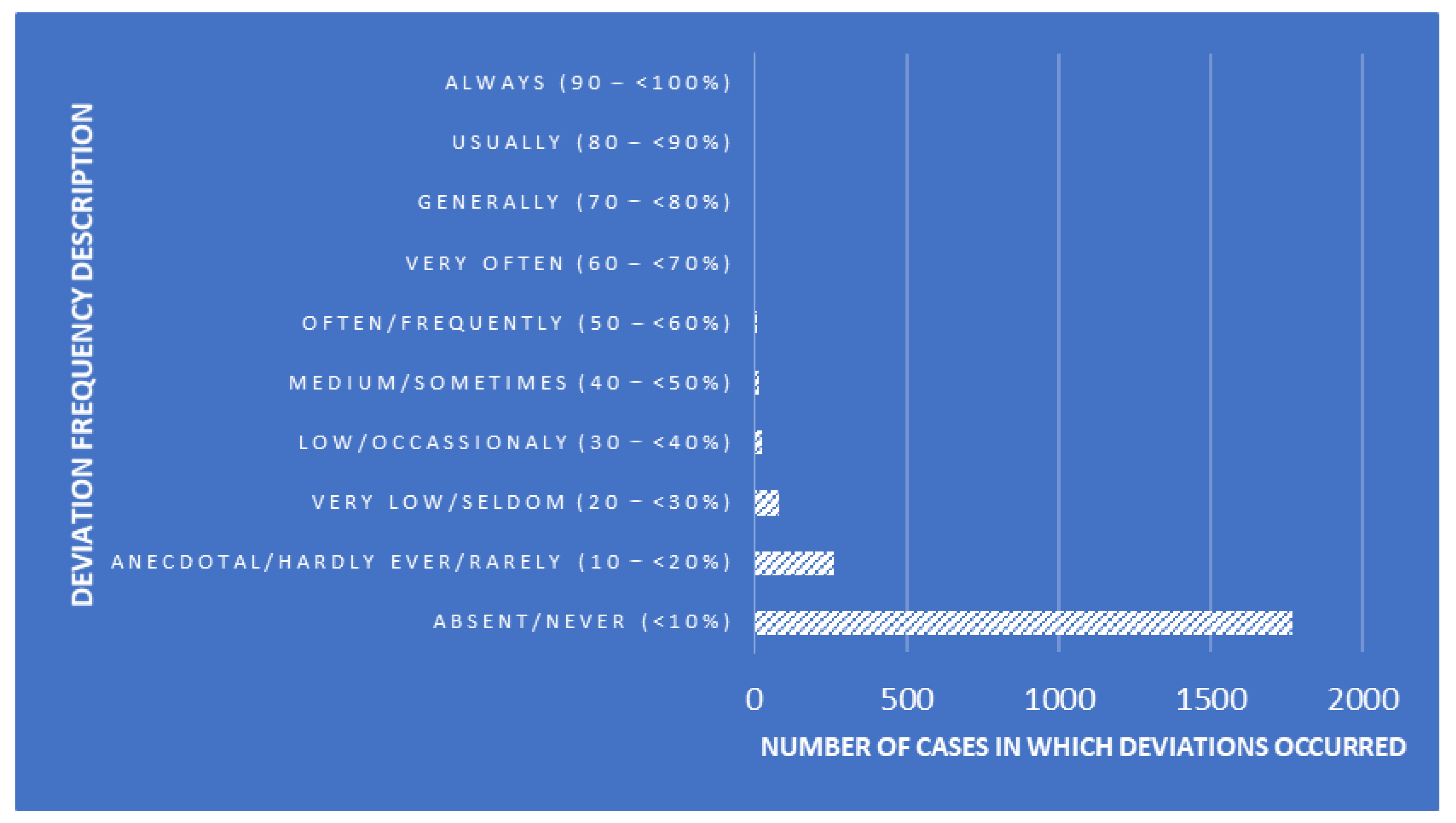

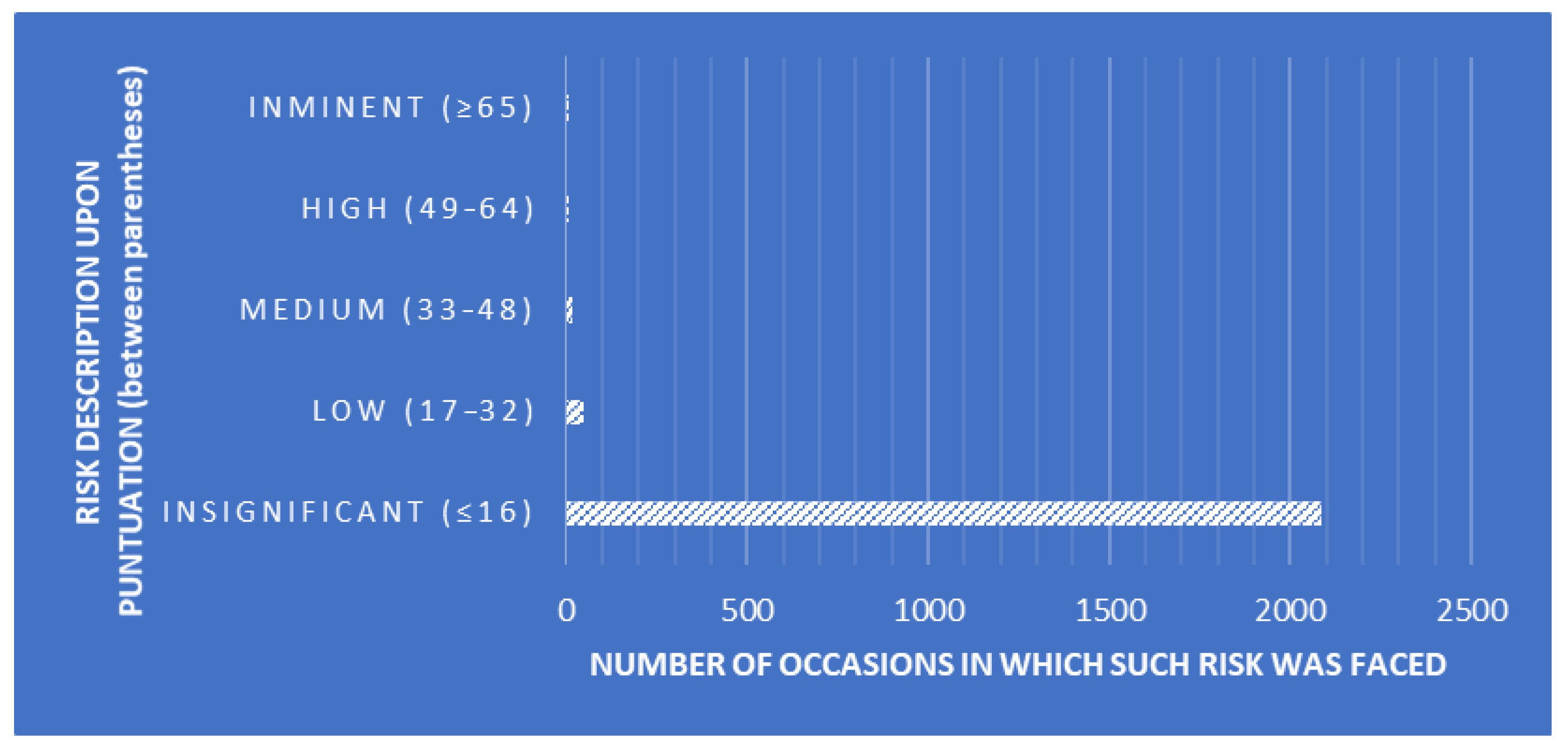

Canonical discriminant analysis

CDAs were performed to design a tool that enables the classification of SLs while determining whether linear combinations of measures of QA-related traits describe within- and between-SL clustering patterns. The explanatory variables used for the present analyses were the assay number (number of assays seeking the detection of Trichinella larvae), positive levels (number of Trichinella larvae cases found), auditor combinations (random combinations of the three auditors performing the audits), deviation frequency description (description of the frequency of occurrence of each deviation, Figure 1), risk description (description of the risk depending on the score obtained after each audit, Figure 2), and risk score on a scale from insignificant (≤16) to imminent (≥65) (Figure 2). Additionally, the number of deviations classified per scope (either technical or management requirement), type (either OBS or NC), and each subtype (a to f) were considered as well (Table 2). Each SL was considered as one level within the laboratory clustering criterion.


To create a territorial map that could be easily understood, we utilized canonical relationships based on traits to visualize the differences between groups. To select the most relevant variables, we employed regularized forward stepwise multinomial logistic regression algorithms. To prevent varying sample sizes from affecting the classification accuracy, we regularized the priors based on the group sizes, using the prior probability settings of commercial software (SPSS Version 26.0 for Windows, SPSS, Inc., Chicago, IL, USA). This approach aimed to avoid any bias caused by unequal group sizes and to enhance the overall quality of the classification results. [11]

The same sample size contexts as those used in this study across groups have been reported to be robust. In this regard, some authors have reported a minimum sample size of at least 20 observations for every four or five predictors, and the maximum number of independent variables should be n−2, where n is the sample size, to mitigate possible distortion effects. [12,13]

Consequently, the present study used a four- or five-times higher ratio between observations and independent variables than those described above, which renders discriminant approaches efficient. Multicollinearity analysis was run to ensure independence and to rule out strong linear relationships across predictors. Variables chosen by the forward or backward stepwise selection methods were the same. Finally, the progressive forward selection method was performed, since it requires less time than the backward selection method.

The discriminant routine of the Classify package of SPSS version 26.0 software and the CDA routine of the Analyzing Data package of XLSTAT software (Addinsoft Pearson Edition 2014, Addinsoft, Paris, France) were used to perform CDA.

Multicollinearity preliminary testing

Multicollinearity refers to the linear relationship among two or more variables, which also means there is a lack of orthogonality among them. Multicollinearity analysis is crucial in improving the reliability of HACCP evaluations. It involves assessing the relationships between different variables or factors studied in HACCP. Detecting and quantifying multicollinearity helps in identifying highly correlated variables, which can lead to inaccurate or unstable results. By addressing multicollinearity, analysts can isolate the independent effects of each factor, resulting in a more accurate understanding of CCPs in the HACCP system. This, in turn, enhances the precision of risk assessments and strategies for ensuring food safety and quality. Different methods are available to detect multicollinearity, and the most widely used are variance inflation factor (VIF) and tolerance. [14] VIF is a ratio of variance in a regression model with multiple attributes divided by the variance of a model with only one attribute. [15]

Explained more technically and exactly, multicollinearity occurs when k vectors lie in a subspace of dimension less than k. Multicollinearity can explain a data-poor condition, which is frequently found in observational studies in which the researchers do not interfere with the study. Thus, many investigators often confuse multicollinearity with correlation. Whereas correlation is the linear relationship between just two variables, multicollinearity can exist between two variables, or between one variable and the linear combination of the others. Therefore, correlation is considered a special case of multicollinearity. A high correlation implies multicollinearity, but not the other way around.

Before performing the statistical analyses per se, a multicollinearity analysis was run to discard potential strong linear relationships across explanatory variables and ensure data independence. In this way, before data manipulation, redundancy problems can be detected, which limits the effects of data noise and reduces the error term of discriminant models. The multicollinearity preliminary test helps identify unnecessary variables which should be excluded, preventing the overinflation of variance explanatory potential and type II error increase. [16] The variance inflation factor (VIF) was used to determine the occurrence of multicollinearity issues. The literature reports a recommended maximum VIF value of 5. [17] On the other hand, tolerance ($1 - R^2$) concerns the amount of variability in a certain independent variable that is not explained by the rest of the independent variables considered (tolerance > 0.20). [18] The multicollinearity statistics routine of the describing data package of XLSTAT software (Addinsoft Pearson Edition 2021, Addinsoft, Paris, France) was used. The following formula was used to calculate the VIF:

$$\mathrm{VIF} = \frac{1}{1 - R^2},$$

where $R^2$ is the coefficient of determination of the regression equation.

In the present study, four rounds were needed to rule out all the potential factors involved in the occurrence of problems of multicollinearity, discarding one of the factors at each round.
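The relationship between VIF, tolerance, and the two cut-offs quoted above can be illustrated with a minimal Python sketch. The variable names and the $R^2$ values below are hypothetical and are not taken from the study; the function names are likewise illustrative and do not correspond to any XLSTAT routine.

```python
def vif_from_r2(r2: float) -> float:
    """VIF of a predictor whose regression on the other predictors has R^2 = r2."""
    if not 0.0 <= r2 < 1.0:
        raise ValueError("r2 must lie in [0, 1)")
    return 1.0 / (1.0 - r2)


def tolerance_from_r2(r2: float) -> float:
    """Tolerance is the share of variance not explained by the other predictors."""
    return 1.0 - r2


def flag_collinear(r2_by_variable: dict, max_vif: float = 5.0) -> list:
    """Return the variables whose VIF reaches the cut-off (VIF >= max_vif)."""
    return [name for name, r2 in r2_by_variable.items()
            if vif_from_r2(r2) >= max_vif]


# Hypothetical R^2 values (assumption, for illustration only).
r2_values = {"x1": 0.35, "x2": 0.92, "x3": 0.10}

for name, r2 in r2_values.items():
    print(name, round(vif_from_r2(r2), 2), round(tolerance_from_r2(r2), 2))
print("flagged:", flag_collinear(r2_values))
```

Because tolerance is the reciprocal of VIF, a VIF cut-off of 5 and a tolerance cut-off of 0.20 describe the same boundary ($R^2 = 0.80$), so either statistic flags the same variables.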

Canonical correlation dimension determination

The maximum number of canonical correlations between two sets of variables is the number of variables in the smaller set. The first canonical correlation usually explains most of the relationships between different sets. In any case, attention should be given to all canonical correlations, despite reporting of only the first dimension being common in previous research. When canonical correlation values are 0.30 or higher, they correspond to approximately 10% of the variance explained.
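The 10% figure follows from squaring the canonical correlation, as the short sketch below shows. The conversion from a discriminant-function eigenvalue to a canonical correlation, $r = \sqrt{\lambda / (1 + \lambda)}$, is the standard relationship in discriminant analysis and is added here as an assumption about how the reported eigenvalues relate to canonical correlations; the function names are illustrative.

```python
import math


def variance_explained(canonical_correlation: float) -> float:
    """Proportion of variance shared between the two sets of variables."""
    return canonical_correlation ** 2


def canonical_correlation_from_eigenvalue(eigenvalue: float) -> float:
    """Standard conversion r = sqrt(lambda / (1 + lambda))."""
    return math.sqrt(eigenvalue / (1.0 + eigenvalue))


# A canonical correlation of 0.30 corresponds to roughly 10% of variance.
print(round(variance_explained(0.30), 2))

# Eigenvalue of 0.5356 is the value reported for F1 in the Results.
r_f1 = canonical_correlation_from_eigenvalue(0.5356)
print(round(r_f1, 4), round(variance_explained(r_f1), 4))
```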

CDA efficiency

Wilks’ lambda test evaluates which variables may significantly contribute to the discriminant function. The closer Wilks’ lambda is to 0, the greater the contribution of that variable to the discriminant function. A χ² test evaluates the significance of Wilks’ lambda. If significance is below 0.05, the function can be concluded to explain group membership well. [19]
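As a rough illustration of how Wilks' lambda and its χ² statistic relate to the eigenvalues of the discriminant functions, the following sketch uses the textbook definitions. Bartlett's χ² approximation is assumed here; the source does not state which approximation the software applied, and the eigenvalues, sample size, and group counts below are hypothetical.

```python
import math


def wilks_lambda(eigenvalues) -> float:
    """Wilks' lambda as the product of 1 / (1 + eigenvalue) over all functions."""
    return math.prod(1.0 / (1.0 + ev) for ev in eigenvalues)


def bartlett_chi_square(lam: float, n_obs: int, n_predictors: int, n_groups: int):
    """Bartlett's chi-square approximation (an assumption) and its degrees of freedom."""
    statistic = -(n_obs - 1 - (n_predictors + n_groups) / 2.0) * math.log(lam)
    degrees_of_freedom = n_predictors * (n_groups - 1)
    return statistic, degrees_of_freedom


# Hypothetical inputs, for illustration only.
lam = wilks_lambda([0.5, 0.25])
chi2, df = bartlett_chi_square(lam, n_obs=100, n_predictors=2, n_groups=3)
print(round(lam, 4), round(chi2, 2), df)
```

Larger eigenvalues push lambda toward 0 and the χ² statistic upward, which matches the interpretation given above.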

CDA model reliability

Pillai’s trace criterion, as the only acceptable test to be used in cases of unequal sample sizes, was used to test the assumption of equal covariance matrices in the discriminant function analysis. [20] Pillai’s trace criterion was computed as a subroutine of the CDA routine of the Analyzing Data package of XLSTAT software (Addinsoft Pearson Edition 2014, Addinsoft, Paris, France). A significance of ≤0.05 is indicative of the set of predictors considered in the discriminant model being statistically significant. Pillai’s trace criterion is argued to be the most robust statistic for general protection against departures from the multivariate residuals’ normality and homogeneity of variance. The higher the observed value for Pillai’s trace is, the stronger the evidence is that the set of predictors has a statistically significant effect on the values of the response variable. That is, the Pillai trace criterion shows potential linear differences in the combined quality-assurance-related traits across SL clustering groups. [21]
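For orientation, Pillai's trace can be written in terms of the eigenvalues of the discriminant functions using its textbook definition, as in the minimal sketch below. The eigenvalues are hypothetical, and the function is illustrative rather than a reproduction of the XLSTAT subroutine.

```python
def pillai_trace(eigenvalues) -> float:
    """Pillai's trace: sum of eigenvalue / (1 + eigenvalue) over all functions."""
    return sum(ev / (1.0 + ev) for ev in eigenvalues)


# Hypothetical eigenvalues, for illustration only.
example_eigenvalues = [1.0, 0.25]
print(round(pillai_trace(example_eigenvalues), 4))
```

Each term is a squared canonical correlation, so the statistic is bounded above by the number of discriminant functions, and larger values indicate stronger separation between groups.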

Canonical coefficients and loading interpretation and spatial representation

When CDA is implemented, a preliminary principal component analysis is used to reduce the overall variables into a few meaningful variables that contribute most to the variation between SLs. The CDA determined the percentage of audits assigned to their own SL group. Variables with an absolute discriminant loading of ≥0.40 were considered substantive discriminating variables. Using the stepwise procedure technique, non-significant variables were prevented from entering the function. Coefficients with large absolute values correspond to variables with greater discriminating ability.
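The loading criterion amounts to a simple filter on absolute values, sketched below. The variable names and loadings are hypothetical placeholders, not values from the study.

```python
def substantive_variables(loadings: dict, threshold: float = 0.40) -> list:
    """Keep variables whose absolute discriminant loading reaches the threshold."""
    return [name for name, value in loadings.items() if abs(value) >= threshold]


# Hypothetical discriminant loadings, for illustration only.
example_loadings = {"x1": 0.62, "x2": -0.45, "x3": 0.12}
print(substantive_variables(example_loadings))
```

Taking the absolute value matters because a strongly negative loading discriminates as well as a strongly positive one.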

Canonical loadings represent the relationships between the original variables and the canonical variables derived from the data. They help identify which original variables contribute the most to the canonical correlation between datasets, shedding light on the most influential factors in content personalization. Canonical coefficients, on the other hand, provide a numerical representation of the strength and direction of these relationships, offering valuable insights into how specific variables impact content creation. By analyzing canonical loadings and coefficients in the HACCP model, content creators and data scientists can optimize the personalization process, ensuring that content is finely tuned to meet the diverse needs and preferences of users.

Data were standardized following procedures reported by Manly and Alberto. [22] Then, squared Mahalanobis distances and principal component analysis were computed using the following formula:

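$$D^2_{ij} = (\bar{Y}_i - \bar{Y}_j)^{\top}\, \mathrm{COV}^{-1}\, (\bar{Y}_i - \bar{Y}_j),$$

where $D^2_{ij}$ is the distance between population i and j; $\mathrm{COV}^{-1}$ is the inverse of the covariance matrix of measured variable x; and $\bar{Y}_i$ and $\bar{Y}_j$ are the means of variable x in the ith and jth populations, respectively.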
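The squared Mahalanobis distance between two group centroids can be sketched in a few lines of Python with NumPy. The means and covariance matrix below are hypothetical, and the function name is illustrative; this is not the routine used by the authors' software.

```python
import numpy as np


def squared_mahalanobis(mean_i, mean_j, cov) -> float:
    """Squared Mahalanobis distance between two group mean vectors."""
    diff = np.asarray(mean_i, dtype=float) - np.asarray(mean_j, dtype=float)
    # Solving the linear system avoids forming the explicit inverse of cov.
    return float(diff @ np.linalg.solve(np.asarray(cov, dtype=float), diff))


# Hypothetical centroids and covariance matrix, for illustration only.
centroid_a = [2.0, 1.0]
centroid_b = [0.0, 1.0]
covariance = [[4.0, 0.0],
              [0.0, 1.0]]
print(squared_mahalanobis(centroid_a, centroid_b, covariance))
```

With an identity covariance matrix the result reduces to the squared Euclidean distance, which is a convenient sanity check.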

In our study, the results were spatially represented through the creation of a territorial map, which served as a visual representation of the geographical distribution of key variables. The squared Mahalanobis distance matrix was converted into a Euclidean distance matrix, and a dendrogram was built using the unweighted pair-group method with arithmetic averages (UPGMA; Rovira i Virgili University, Tarragona, Spain) and the Phylogeny procedure of MEGA X 10.0.5 (Institute of Molecular Evolutionary Genetics, The Pennsylvania State University, State College, PA, USA).

The practical implications of the classification accuracy and Press’ Q statistic are profound. A high classification accuracy signifies the reliability of our model in assigning each audit to the appropriate satellite laboratory, which is crucial in decision making processes. In contrast, a strong Press’ Q statistic indicates that the model is capable of accurately predicting new, unseen data, thus enhancing its real-world applicability. These statistical metrics are invaluable for policy makers, urban planners, and other stakeholders, as they inform data-driven decisions that can have a lasting impact on territorial management and resource allocation. It is important to note that all relevant references to regulations, standards, and best practices were meticulously cited throughout the study to ensure the credibility and replicability of our findings.

Discriminant function cross-validation

To assess the accuracy of our discriminant functions, we employed a cross-validation procedure. This involved splitting the dataset into training and testing subsets, ensuring that the model’s performance was evaluated on unseen data. Cross-validation helps mitigate overfitting and provides a robust estimation of the model’s predictive power. Furthermore, we calculated Press’ Q statistic, which compares the number of correctly classified observations with the number expected by chance. Q values above the critical value indicate that classification is significantly better than chance.

Afterwards, to determine the probability that an audit of a SL of an unknown background belongs to a particular SL [23], the hit ratio parameter was computed. For this, the relative distance of each audit to the centroid of its closest SL was used. The hit ratio is the percentage of audits that are correctly ascribed to the SL in which they were performed. The leave-one-out cross-validation procedure was used to assess whether the discriminant functions could be validated. Classification accuracy is achieved when the classification rate is at least 25% higher than that obtained by chance.
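A minimal sketch of the hit ratio and of the "25% above chance" criterion follows. It assumes equal prior probabilities across groups, so that chance accuracy is 1/K; the study regularized priors by group size, so the actual chance level may differ. The labels and function names are hypothetical.

```python
def hit_ratio(actual, predicted) -> float:
    """Proportion of audits assigned to the SL in which they were performed."""
    if len(actual) != len(predicted) or not actual:
        raise ValueError("inputs must be non-empty and of equal length")
    correct = sum(a == p for a, p in zip(actual, predicted))
    return correct / len(actual)


def chance_threshold(n_groups: int, margin: float = 0.25) -> float:
    """Minimum accuracy: chance (1 / n_groups, equal priors assumed) plus 25%."""
    return (1.0 + margin) / n_groups


# Hypothetical cross-validated assignments, for illustration only.
actual_sl = ["A", "A", "B", "C"]
predicted_sl = ["A", "B", "B", "C"]

ratio = hit_ratio(actual_sl, predicted_sl)
print(ratio, ratio >= chance_threshold(n_groups=3))

# With the 18 SLs of the study and equal priors, the threshold would be:
print(round(chance_threshold(18), 4))
```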

Press’ Q statistic can support these results, since it can be used to compare the discriminating power of the cross-validated function, as follows:

$$\text{Press' } Q = \frac{\left[\,n - (n' K)\,\right]^2}{n\,(K - 1)},$$

where n is the number of observations in the sample; n’ is the number of observations correctly classified; and K is the number of groups.

The value of Press’ Q statistic must be compared with the critical value of 6.63 for χ2 with one degree of freedom at a significance level of 0.01. When Press’ Q exceeds the critical value of χ2 = 6.63, the cross-validated classification can be regarded as significantly better than chance.
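The comparison against the critical value can be sketched as follows, using the definitions of n, n’, and K given above. The counts in the example are hypothetical and are not results from the study.

```python
CRITICAL_CHI2_1DF_P01 = 6.63  # critical value quoted in the text


def press_q(n_obs: int, n_correct: int, n_groups: int) -> float:
    """Press' Q = [n - n' * K]^2 / [n * (K - 1)]."""
    if n_groups < 2:
        raise ValueError("at least two groups are required")
    return (n_obs - n_correct * n_groups) ** 2 / (n_obs * (n_groups - 1))


def better_than_chance(n_obs: int, n_correct: int, n_groups: int) -> bool:
    """True when Press' Q exceeds the critical chi-square value."""
    return press_q(n_obs, n_correct, n_groups) > CRITICAL_CHI2_1DF_P01


# Hypothetical counts: 100 observations, 60 correctly classified, 4 groups.
q = press_q(n_obs=100, n_correct=60, n_groups=4)
print(round(q, 2), better_than_chance(100, 60, 4))
```

When the number of correct classifications equals the chance expectation (n / K), the numerator vanishes and Q is 0, which is why larger values indicate classification beyond chance.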

Results

Multicollinearity prevention: Preliminary testing

A summary of values for VIF and tolerance is reported in Table S1 of the Supplementary materials. Variables whose VIF values were ≥5 were discarded from further analyses. Thus, all traits were removed for the following statistical analyses except for auditor combination and risk description.

CDA

CDA model reliability

A significant Pillai’s trace criterion determined that discriminant canonical analysis was feasible (Pillai’s trace criterion = 0.9830; F (Observed value) = 17.3469; F (Critical value) = 1.1766; df1 = 187; df2 = 33,055; p-value < 0.0001). As reported in Table 3, seven out of the 11 discriminant functions designed after the analyses presented a significant discriminant ability. The discriminatory power of the F1 function was high (eigenvalue of 0.5356; Figure 2), with ≈64% of the variance being explained by F1 and F2.


Canonical coefficients, loading interpretation, and spatial representation

Variables were ranked depending on their discriminating properties. For this, a test of equality of group means across SLs was used (Table 4). Lower values of Wilks’ lambda and greater values of F indicate a better discriminating power, which translates into a better position in the rank. The analyses revealed that either auditor combination or risk description significantly contributes (p < 0.05) to the discriminant ability of significant discriminant functions.


References

Notes

This presentation is faithful to the original, with only a few minor changes to presentation and updates to spelling and grammar. In some cases important information was missing from the references, and that information was added. The URL to the Deloitte paper was broken; an archived version of the document was used for this version.