Difference between revisions of "Journal:An extract-transform-load process design for the incremental loading of German real-world data based on FHIR and OMOP CDM: Algorithm development and validation"

Shawndouglas (talk | contribs) (Saving and adding more.) |

Shawndouglas (talk | contribs) (Saving and adding more.) |

||

| Line 60: | Line 60: | ||

==Methods== | ==Methods== | ||

===Analysis of the Existing ETL FHIR-to-OMOP process=== | ===Analysis of the Existing ETL FHIR-to-OMOP process=== | ||

To determine the requirements for integrating incremental loading into our existing ETL process design, we performed an impact analysis focusing on the whole ETL process, as well as, in more detail, the three main components of it, namely, Reader, Processor, and Writer, as presented by Peng ''et al.'' [2] Regarding the whole ETL process, the following three requirements were needed: | |||

* Requirement A: It is necessary to provide the user with the ability to distinguish between bulk loading and incremental loading. | |||

* Requirement B: For incremental loading, it is further essential that the Reader of the ETL process is able to detect changes in the source system and reads only CUD-FHIR resources on a daily basis. | |||

* Requirement C: During the processing of updated and deleted FHIR resources, duplicates and obsolete data should be avoided in OMOP CDM to guarantee data correctness. | |||

Considering the semantic mapping from FHIR MI-I Core Data Set (CDS) to OMOP CDM and the Writer of the ETL process, as described by Peng ''et al.'' [2], incremental loading has no impact on both. In summary, incremental loading requires an adjustment of the implementation of the Reader and Processor. | |||

===Literature review=== | |||

To identify approaches that might be adaptable to our existing ETL design and fulfill the three requirements in the previous section, we conducted a first literature review on July 14, 2021; a second one on November 28, 2022; and a third one on February 22, 2023 (Multimedia Appendices 1, 2, and 3). Table 1 includes the search strings and the number of results for three literature databases. | |||

{| | |||

| style="vertical-align:top;" | | |||

{| class="wikitable" border="1" cellpadding="5" cellspacing="0" width="100%" | |||

|- | |||

| colspan="3" style="background-color:white; padding-left:10px; padding-right:10px;" |'''Table 1.''' Literature review: database, search string, and number of results. | |||

|- | |||

! style="background-color:#e2e2e2; padding-left:10px; padding-right:10px;" |Database | |||

! style="background-color:#e2e2e2; padding-left:10px; padding-right:10px;" |Search string | |||

! style="background-color:#e2e2e2; padding-left:10px; padding-right:10px;" |Results (''n'') | |||

|- | |||

| style="background-color:white; padding-left:10px; padding-right:10px;" |PubMed | |||

| style="background-color:white; padding-left:10px; padding-right:10px;" |All fields: (incremental) AND ((etl) OR (extract transform load)) | |||

| style="background-color:white; padding-left:10px; padding-right:10px;" |7 | |||

|- | |||

| style="background-color:white; padding-left:10px; padding-right:10px;" |IEEE Xplore | |||

| style="background-color:white; padding-left:10px; padding-right:10px;" |((“All Metadata”: incremental) AND (“All Metadata”: etl OR “All Metadata”: extract transform load)) | |||

| style="background-color:white; padding-left:10px; padding-right:10px;" |15 | |||

|- | |||

| style="background-color:white; padding-left:10px; padding-right:10px;" |Web of Science | |||

| style="background-color:white; padding-left:10px; padding-right:10px;" |ALL=(incremental) AND (ALL=(etl) OR ALL=(extract transform load)) | |||

| style="background-color:white; padding-left:10px; padding-right:10px;" |46 | |||

|- | |||

|} | |||

|} | |||

We included only articles from 2011 to 2022 in English. After removing duplicates, 51 items were left. These were screened independently by two authors (EH and MZ). Through the title and abstract screening, we identified 12 relevant articles. After the screening of the full texts, we included eight articles within our research. Reasons for excluding the other articles were other meanings of the abbreviation “ETL,” ETL tools without regard to theoretical approaches of incremental loads, focus on application instead of ETL process and theoretical approach, and quality and error handling without focus on a theoretical approach. | |||

Only two of the eight articles addressed ETL processes for loading patient data into OMOP CDM. Lynch ''et al.'' [16] introduced an approach for incremental transformation from the data warehouse to OMOP CDM to prevent incremental load errors. They suggest basing the development on a [[quality assurance]] (QA) process dependent on the [[data quality]] framework by Kahn ''et al.'' [17] Furthermore, they generated ETL batch tracking ids for each record of data during the transformation to OMOP CDM. For 1:1 mappings, they created custom columns in the standardized OMOP CDM tables, and for 1:''n'' or ''n'':1 mappings, they used a parallel mapping table to store the ETL batch id and a link to the corresponding record in OMOP CDM. Secondly, Lenert ''et al.'' [18] describe an automated transformation of clinical data into two CDMs (OMOP and PCORnet database) by using FHIR. Therefore, they use the so-called subscriptions of FHIR resources. These subscriptions trigger a function to create a copy of the FHIR resource and its transmission into another system whenever a FHIR resource is created or updated. | |||

Despite OMOP CDM being the target database, the literature search revealed different concepts for incremental ETL itself. Kathiravelu ''et al.'' [19] described the caching of new or updated data in a temporary table. Of the eight articles, seven described various methods for incremental updates, particularly focusing on change data capture (CDC). All describe different categories of CDC, like timestamp-based, audit column–based, trigger-based, log-based, [[application programming interface]]–based, and data-based snapshots [16,18,20-24]: (1) Lynch ''et al.'' [16] and (2) Lenert ''et al.'' [18] focused on triggers; (3) Wen [20] focused on timestamps and triggers; (4) Thulasiram and Ramaiah [21] and (5) Sun [22] focused on timestamps; (6) Hu and Dessloch [23] focused on timestamps, audit columns, logs, triggers, and snapshots; and (7) Wei Du and Zou [24] focused on snapshots and MapReduce. | |||

In summary, the literature review revealed adaptable approaches, which can be applied for the implementation of requirements B and C. However, no approaches could be found in the literature for requirement A. For this reason, we have to define a new method to enable both bulk and incremental loading in one ETL process. The concrete integration of the approaches into our existing ETL design is described in more detail in the following sections. | |||

===Incremental ETL process design=== | |||

====Enabling both bulk and incremental loading==== | |||

For the specification, if the ETL process should be executed as bulk or incremental load, we added a new Boolean parameter in the configuration file of the ETL process called <tt>APP_BULKLOAD_ENABLED</tt>. According to the desired loading option, the parameter has to be adjusted before executing the ETL process, with “true” results in a bulk load and “false” results in an incremental load. During the execution of the ETL process, this parameter is further taken into account for the Reader and Processor of the ETL process [2] to distinguish between the needs of bulk and incremental load (e.g., to ensure that the OMOP CDM database is not emptied at the beginning of the ETL process execution during an incremental load). | |||

====Focusing on CUD-FHIR resources since the last ETL execution==== | |||

Our purpose of incremental loading was to focus only on CUD-FHIR resources since the last time the ETL process was executed (whether as bulk or incremental load). Consequently, the ETL process for incremental load has to filter only CUD-FHIR resources from the source. The literature research showed that there are various CDC approaches to detect changes in the source. In our case, FHIR resources in the FHIR Gateway and FHIR Server contain [[metadata]], such as a timestamp indicating when an FHIR resource was created, updated, or deleted in the source (FHIR Gateway: <tt>column last_updated_at</tt>; FHIR Server: <tt>meta.lastUpdated</tt>). That is why we used the timestamp-based CDC approach to filter FHIR resources, which have a timestamp specification after the last ETL execution time. | |||

To ensure filtering for the incremental load, we added two new parameters in the configuration file of the ETL process: <tt>DATA_BEGINDATE</tt> and <tt>DATA_ENDDATE</tt>. Both parameters have to be adjusted before executing the ETL process as incremental load. During the execution, the ETL process takes these two parameters into account and only reads FHIR resources from the source that has a metadata timestamp specification that is in [<tt>DATA_BEGINDATE</tt>, <tt>DATA_ENDDATE</tt>]. | |||

====Guarantee data correctness in OMOP CDM==== | |||

To avoid duplicates in OMOP CDM when processing updated and deleted FHIR resources, their existence in OMOP CDM has to be checked during processing. The FHIR resources themselves do not have a flag that indicates whether they are new or have been changed. Only deleted FHIR resources can be identified by a specific flag in the metadata. To assess the existence of FHIR resources in OMOP CDM, a comparison of the data of the read FHIR resources with the data already available in OMOP CDM has to be done. | |||

The literature research showed an approach to generate a unique tracking id per source data during the transformation process and its storage in OMOP CDM. [16] We decided against the approach of generating an additional id because FHIR resources already contain two identifying FHIR elements themselves: id and identifier. The id represents the logical id of the resource per resource type, while the identifier specifies an identifier that is part of the source data. Both FHIR elements allow the unique identification of an FHIR resource per resource type. However, the standardized OMOP CDM tables do not provide the possibility to store this information from FHIR. Furthermore, OMOP CDM has its own primary keys for each record in a table independent of the id and identifier used in FHIR. Consequently, after transforming FHIR resources to OMOP CDM, the identifying data from FHIR resources will be lost. | |||

To solve this problem, we need to store the mapping between the id and identifier used in FHIR with the id used in OMOP CDM. Due to the fact that the id of an FHIR resource is only unique per resource type and one FHIR resource can be stored in OMOP CDM in multiple tables, we additionally have to specify the resource type. As mentioned above, Lynch ''et al.'' [16] presented an approach to store the mapping between tracking ids for source records and ids used in OMOP CDM by using a mapping table and custom columns in OMOP CDM. We have slightly customized this approach and adapted it into our ETL design. Contrary to the use of both mapping tables and custom columns, we considered each approach separately. | |||

Our first approach uses mapping tables for each FHIR resource type in a separate schema in OMOP CDM. With this approach, the Writer of the ETL process has to fill additional mapping tables beside the standardized tables in OMOP CDM. Our second approach focuses on two new columns in the standardized tables in OMOP CDM called “fhir_logical_id” and “fhir_identifier.” These columns store the id and identifier of the FHIR resource. Furthermore, we appended an abbreviation of the resource type as a prefix to the id and identifier of FHIR (eg, “med-” for Medication, “mea-” for MedicationAdministration, or “mes-” for MedicationStatement FHIR resources). In consequence, the combination of the prefix with the id and identifier and its storage in OMOP CDM enables the unique identification of FHIR resources in OMOP CDM. Since the mapping tables and two new columns are required exclusively for the ETL process, the analysis of data across multiple OMOP CDM databases is not affected. | |||

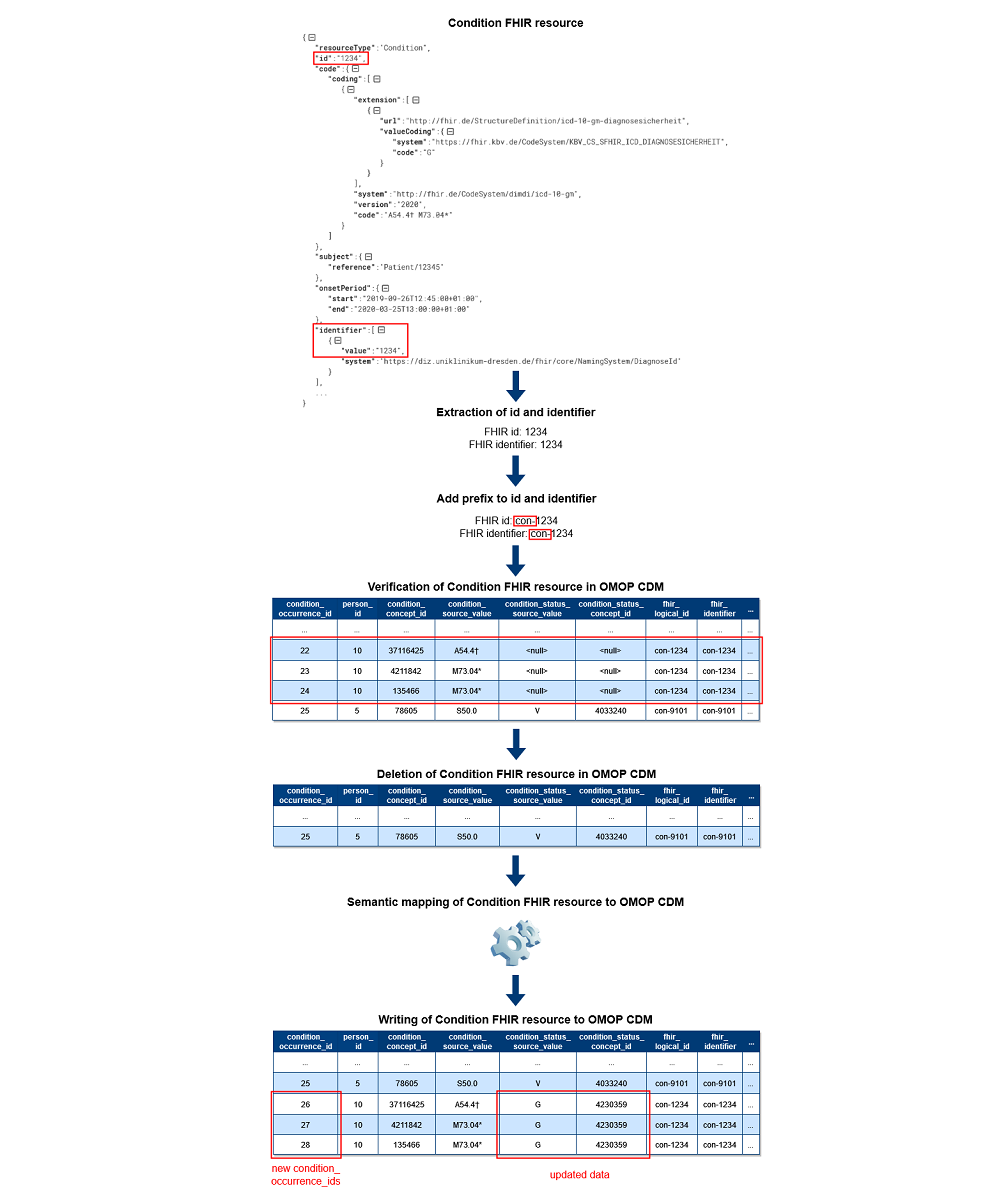

Based on the unique identification of FHIR resources in OMOP CDM, it is now possible to guarantee data correctness in OMOP CDM during incremental loading. Figure 1 shows the exemplary data flow for Condition FHIR resources for the second approach with two new columns. First, the Processor extracts the id and identifier used in FHIR. After that, the prefix is added to both values. Regardless of whether the data was created, updated, or deleted in the source, the ETL process next verifies each processed FHIR resource’s existence in OMOP CDM using the mapping tables or two new columns. During the verification, records are deleted in OMOP CDM if they were found. This approach is also used for updated FHIR resources to avoid incomplete updates for cross-domain mappings in OMOP CDM. Consequently, we do not perform updates on the existing records in OMOP CDM except Patient and Encounter FHIR resources to ensure referential integrity in OMOP CDM. In case FHIR resources are marked as deleted in the source, the processing is completed. Otherwise, the same semantic mapping logic as for bulk loading [2] applies afterward, and the data of the FHIR resources are written to OMOP CDM as new records with new OMOP ids. | |||

[[File:Fig1 Henke JMIRMedInfo2023 11.png|1000px]] | |||

{{clear}} | |||

{| | |||

| style="vertical-align:top;" | | |||

{| border="0" cellpadding="5" cellspacing="0" width="1000px" | |||

|- | |||

| style="background-color:white; padding-left:10px; padding-right:10px;" |<blockquote>'''Figure 1.''' Excerpt of the data flow of the Condition Processor. CDM: Common Data Model; FHIR: Fast Healthcare Interoperability Resources; OMOP: Observational Medical Outcomes Partnership.</blockquote> | |||

|- | |||

|} | |||

|} | |||

===Evaluation of the incremental load process=== | |||

For the evaluation of the incremental load process, we defined and executed two ETL test designs. First, we tested which approach to store the mapping between id and identifier used in FHIR with the id used in OMOP CDM was the most performant. For this purpose, we implemented a separate ETL process version for each approach. Afterward, we executed the ETL process as bulk load first and as incremental load afterward, and compared the execution times between the mapping table approach and the column approach. For further evaluation of the incremental load process, we have chosen the most performant approach, resulting in a new optimized ETL process version for the second ETL test design. | |||

To test the achievement of the three requirements identified during the initial analysis of our ETL process, we defined and executed a second ETL test design (Table 2) that compares the results of bulk loading with those of incremental loading regarding performance and data correctness. Our hypotheses here are that the execution time of incremental loading alone is less than bulk loading, including daily updates, and that the amount of data per table in OMOP CDM is identical after incremental loading and bulk loading, including daily updates. | |||

{| | |||

| style="vertical-align:top;" | | |||

{| class="wikitable" border="1" cellpadding="5" cellspacing="0" width="100%" | |||

|- | |||

| colspan="2" style="background-color:white; padding-left:10px; padding-right:10px;" |'''Table 2.''' Extract-transform-load test design regarding performance and data correctness. | |||

|- | |||

! style="background-color:#e2e2e2; padding-left:10px; padding-right:10px;" |Test focus | |||

! style="background-color:#e2e2e2; padding-left:10px; padding-right:10px;" |Hypothesis | |||

|- | |||

| style="background-color:white; padding-left:10px; padding-right:10px;" |Performance | |||

| style="background-color:white; padding-left:10px; padding-right:10px;" |t(bulk loading (3 mon)) + t(incremental loading (1 d)) < t(bulk loading (3 mon)) + t(bulk loading (3 mon + 1 d)) | |||

|- | |||

| style="background-color:white; padding-left:10px; padding-right:10px;" |Data correctness | |||

| style="background-color:white; padding-left:10px; padding-right:10px;" |#((bulk loading (3 mon)) + (incremental loading (1 d))) = #(bulk loading (3 mon + 1 d)) | |||

|- | |||

|} | |||

|} | |||

For both ETL test designs, we used a total of 3,802,121 synthetic FHIR resources version R4 based on the MI-I CDS version 1.0, which were generated using random values. Furthermore, we simulated CUD-FHIR resources for testing incremental loading for one day. For the simulation, we checked the frequency distribution of CUD data per domain in our source system with real-world data for eight days and calculated the average value (see Multimedia Appendix 4). In addition, we set up one OMOP CDM v5.3.1 database as the target and executed the ETL process according to our test designs. For both ETL tests, we tracked the execution times based on the time stamps in the logging file of the ETL process until the corresponding job finished successfully. In a second step, we recorded the data quantity for each filled table in OMOP CDM and compared the results between the two ETL loading options for the second ETL test. | |||

==Results== | |||

===Architecture of the ETL process=== | |||

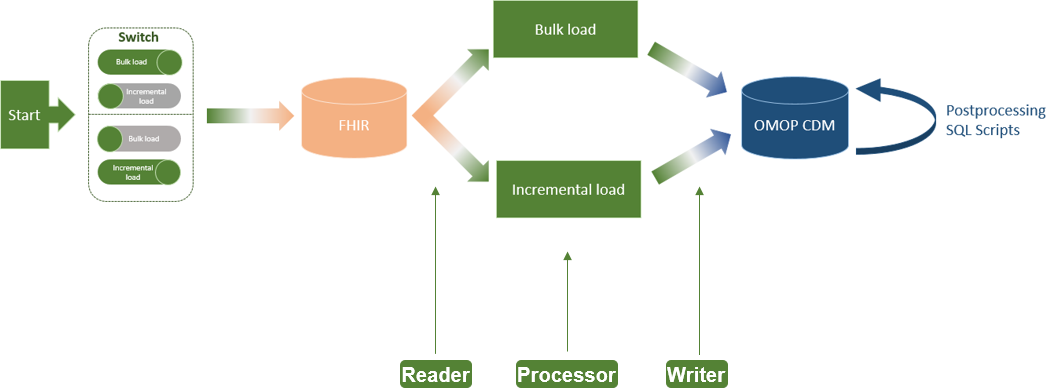

The implemented ETL process extension for incremental loading of FHIR resources to OMOP CDM has not changed the basic architecture of the ETL process, as proposed by Peng ''et al.'' [2], consisting of Reader, Processor, and Writer (Figure 2). The only addition is a switch at the beginning of the ETL process, which allows the user to select between bulk load and incremental load (requirement A). Moreover, we configured the Reader for incremental loading of CUD-FHIR resources on a daily basis (requirement B). In the Processor, we added the logic of the verification of CUD-FHIR resources and their deletion from OMOP CDM if they already exist (requirement C). | |||

[[File:Fig2 Henke JMIRMedInfo2023 11.png|900px]] | |||

{{clear}} | |||

{| | |||

| style="vertical-align:top;" | | |||

{| border="0" cellpadding="5" cellspacing="0" width="900px" | |||

|- | |||

| style="background-color:white; padding-left:10px; padding-right:10px;" |<blockquote>'''Figure 2.''' Architecture of the FHIR-to-OMOP extract-transform-load process including incremental load. CDM: Common Data Model; FHIR: Fast Healthcare Interoperability Resources; OMOP: Observational Medical Outcomes Partnership.</blockquote> | |||

|- | |||

|} | |||

|} | |||

The ETL process covering bulk and incremental load is available in the OHDSI repository ETL-German-FHIR-Core. [25] | |||

===Findings of the first ETL test=== | |||

The first ETL test focused on the performance measurement of the mapping table approach versus the column approach. First, we executed both ETL approaches as a bulk load. The column approach took about 30 minutes to transform FHIR resources to OMOP CDM. In contrast, the mapping table approach was still not finished after four hours. Therefore, we stopped the ETL execution and did not test the incremental loading anymore. Consequently, for the incremental ETL design, we decided to use the column approach due to its better performance and executed the subsequent performance evaluations with it. | |||

===Findings of the second ETL test=== | |||

The second ETL test dealt with testing our two hypotheses in Table 2. First, we compared the execution times between a bulk load (three months plus one day) and an initial bulk load (three months) followed by an incremental load (one day). For this, each loading option was executed three times. Based on the results, we calculated the average execution times. The performance results (Multimedia Appendix 5) show that an initial bulk load (13.31 minutes) followed by a daily incremental load (2.12 minutes) is more efficient than an everyday full load (17.07 minutes). Looking at the percentage improvement in performance, it can be shown that incremental loading had 87.5% less execution time than a daily full load (2.12 minutes compared to 17.07 minutes). Referring to our first hypothesis, we were able to prove our initial assumption. | |||

After the execution of both loading options, we further checked the data quantity for each filled table in OMOP CDM and compared the results of it. As shown in Table 3, both loading options resulted in the same amount of data (Multimedia Appendix 5). Consequently, we were also able to confirm our second hypothesis regarding data correctness in OMOP CDM. | |||

{| | |||

| style="vertical-align:top;" | | |||

{| class="wikitable" border="1" cellpadding="5" cellspacing="0" width="100%" | |||

|- | |||

| colspan="3" style="background-color:white; padding-left:10px; padding-right:10px;" |'''Table 3.''' Results of the data quantity comparison in the Observational Medical Outcomes Partnership Common Data Model (OMOP CDM) between bulk and incremental load. | |||

|- | |||

! style="background-color:#e2e2e2; padding-left:10px; padding-right:10px;" |Data field | |||

! style="background-color:#e2e2e2; padding-left:10px; padding-right:10px;" |Bulk load (three months + one day; ''n'') | |||

! style="background-color:#e2e2e2; padding-left:10px; padding-right:10px;" |Bulk load (three months) + incremental load (one day) (''n'') | |||

|- | |||

| style="background-color:white; padding-left:10px; padding-right:10px;" |Care_site | |||

| style="background-color:white; padding-left:10px; padding-right:10px;" |152 | |||

| style="background-color:white; padding-left:10px; padding-right:10px;" |152 | |||

|- | |||

| style="background-color:white; padding-left:10px; padding-right:10px;" |Condition_occurrence | |||

| style="background-color:white; padding-left:10px; padding-right:10px;" |800,640 | |||

| style="background-color:white; padding-left:10px; padding-right:10px;" |800,640 | |||

|- | |||

| style="background-color:white; padding-left:10px; padding-right:10px;" |Death | |||

| style="background-color:white; padding-left:10px; padding-right:10px;" |857 | |||

| style="background-color:white; padding-left:10px; padding-right:10px;" |857 | |||

|- | |||

| style="background-color:white; padding-left:10px; padding-right:10px;" |Drug_exposure | |||

| style="background-color:white; padding-left:10px; padding-right:10px;" |1,171,521 | |||

| style="background-color:white; padding-left:10px; padding-right:10px;" |1,171,521 | |||

|- | |||

| style="background-color:white; padding-left:10px; padding-right:10px;" |Fact_relationship | |||

| style="background-color:white; padding-left:10px; padding-right:10px;" |2,323,894 | |||

| style="background-color:white; padding-left:10px; padding-right:10px;" |2,323,894 | |||

|- | |||

| style="background-color:white; padding-left:10px; padding-right:10px;" |Measurement | |||

| style="background-color:white; padding-left:10px; padding-right:10px;" |231,369 | |||

| style="background-color:white; padding-left:10px; padding-right:10px;" |231,369 | |||

|- | |||

| style="background-color:white; padding-left:10px; padding-right:10px;" |Observation | |||

| style="background-color:white; padding-left:10px; padding-right:10px;" |511,844 | |||

| style="background-color:white; padding-left:10px; padding-right:10px;" |511,844 | |||

|- | |||

| style="background-color:white; padding-left:10px; padding-right:10px;" |Observation_period | |||

| style="background-color:white; padding-left:10px; padding-right:10px;" |15,037 | |||

| style="background-color:white; padding-left:10px; padding-right:10px;" |15,037 | |||

|- | |||

| style="background-color:white; padding-left:10px; padding-right:10px;" |Person | |||

| style="background-color:white; padding-left:10px; padding-right:10px;" |15,037 | |||

| style="background-color:white; padding-left:10px; padding-right:10px;" |15,037 | |||

|- | |||

| style="background-color:white; padding-left:10px; padding-right:10px;" |Procedure_occurrence | |||

| style="background-color:white; padding-left:10px; padding-right:10px;" |168,384 | |||

| style="background-color:white; padding-left:10px; padding-right:10px;" |168,384 | |||

|- | |||

| style="background-color:white; padding-left:10px; padding-right:10px;" |Source_to_concept_map | |||

| style="background-color:white; padding-left:10px; padding-right:10px;" |251 | |||

| style="background-color:white; padding-left:10px; padding-right:10px;" |251 | |||

|- | |||

| style="background-color:white; padding-left:10px; padding-right:10px;" |Visit_detail | |||

| style="background-color:white; padding-left:10px; padding-right:10px;" |43,929 | |||

| style="background-color:white; padding-left:10px; padding-right:10px;" |43,929 | |||

|- | |||

| style="background-color:white; padding-left:10px; padding-right:10px;" |Visit_occurrence | |||

| style="background-color:white; padding-left:10px; padding-right:10px;" |29,898 | |||

| style="background-color:white; padding-left:10px; padding-right:10px;" |29,898 | |||

|- | |||

|} | |||

|} | |||

==Discussion== | |||

Revision as of 21:10, 4 December 2023

| Full article title | An extract-transform-load process design for the incremental loading of German real-world data based on FHIR and OMOP CDM: Algorithm development and validation |

|---|---|

| Journal | JMIR Medical Informatics |

| Author(s) | Henke, Elisa; Peng, Yuan; Reinecke, Ines; Zoch, Michéle; Sedlmayr, Martin; Bathelt, Franziska |

| Author affiliation(s) | Technische Universität Dresden |

| Primary contact | Email: elisa dot henke at tu dash dresden dot de |

| Editors | Lovis, Christian |

| Year published | 2023 |

| Volume and issue | 11 |

| Article # | e47310 |

| DOI | 10.2196/47310 |

| ISSN | 2291-9694 |

| Distribution license | Creative Commons Attribution 4.0 International |

| Website | https://medinform.jmir.org/2023/1/e47310 |

| Download | https://medinform.jmir.org/2023/1/e47310/PDF (PDF) |

|

|

This article should be considered a work in progress and incomplete. Consider this article incomplete until this notice is removed. |

Abstract

Background: In the Medical Informatics in Research and Care in University Medicine (MIRACUM) consortium, an IT-based clinical trial recruitment support system was developed based on the Observational Medical Outcomes Partnership (OMOP) Common Data Model (CDM). Currently, OMOP CDM is populated with German Fast Healthcare Interoperability Resources (FHIR) data using an extract-transform-load (ETL) process, which was designed as a bulk load. However, the computational effort that comes with an everyday full load is not efficient for daily recruitment.

Objective: The aim of this study is to extend our existing ETL process with the option of incremental loading to efficiently support daily updated data.

Methods: Based on our existing bulk ETL process, we performed an analysis to determine the requirements of incremental loading. Furthermore, a literature review was conducted to identify adaptable approaches. Based on this, we implemented three methods to integrate incremental loading into our ETL process. Lastly, a test suite was defined to evaluate the incremental loading for data correctness and performance compared to bulk loading.

Results: The resulting ETL process supports bulk and incremental loading. Performance tests show that the incremental load took 87.5% less execution time than the bulk load (2.12 minutes compared to 17.07 minutes) related to changes of one day, while no data differences occurred in OMOP CDM.

Conclusions: Since incremental loading is more efficient than a daily bulk load, and both loading options result in the same amount of data, we recommend using bulk load for an initial load and switching to incremental load for daily updates. The resulting incremental ETL logic can be applied internationally since it is not restricted to German FHIR profiles.

Keywords: extract-transform-load, ETL, incremental loading, OMOP CDM, FHIR, interoperability, Observational Medical Outcomes Partnership Common Data Model; Fast Healthcare Interoperability Resources

Introduction

Background and significance

Randomized controlled clinical trials are the gold standard to “measure the effectiveness of a new intervention or treatment.” [1] However, randomized controlled clinical trials are limited regarding the representative number of persons included and, therefore, are restricted in their external generalizability. To gain more unbiased evidence, observational studies focus on real-world data from large heterogeneous populations.

To support observational research, we at the Institute for Medical Informatics and Biometry at Technische Universität Dresden already provide a transferable extract-transform-load (ETL) process [2] to transform German real-world data to the Observational Medical Outcomes Partnership (OMOP) Common Data Model (CDM) [3] provided by Observational Health Data Sciences and Informatics (OHDSI). [4] This transformation effort supports the possibilities for multicentric and even international studies. Due to the heterogeneity of the structure and content of the data from the data integration centers within the Medical Informatics Initiative Germany (MI-I) [5], the Health Level 7 (HL7) [6] Fast Healthcare Interoperability Resources (FHIR) communication standard was specified among all German university hospitals. Consequently, we used FHIR as the source for our ETL process. The FHIR specification is given by the core data set of the MI-I. [7] FHIR resources can be read from an FHIR Gateway [8] (PostgreSQL database) or FHIR Server (e.g., HAPI [9] or Blaze [10]). As the target of our ETL process, we used OMOP CDM v5.3.1. [11] The implementation of the ETL process was done using the open-source framework Java SpringBatch. [12] Our ETL process has been implemented in accordance with the default assumption as described in The Book of OHDSI [13], where the OHDSI community defines the ETL process as a full load to transfer data from source to target systems.

This approach is efficient for a dedicated study where data gets loaded once without any update afterward; however, it is inefficient when it comes to the need for updated data on a daily basis. The latter is the case for the developments around the improvement and support of the recruitment process for clinical trials, which the Medical Informatics in Research and Care in University Medicine (MIRACUM) [14] consortium, as part of the MI-I funded by the German Federal Ministry of Education and Research, is working on. In this context, an IT-based clinical trial recruitment support system (CTRSS) based on OMOP CDM was implemented. [15] The CTRSS consists of a screening list for recruitment teams that provides potential candidates for clinical trials updated on a daily base. To enable the CTRSS to provide recruitment proposals, it is necessary to transform the data in FHIR format at each site from the 10 MIRACUM data integration centers into the standardized format of OMOP CDM. The procession of FHIR resources to OMOP CDM through our ETL process has already been successfully tested and integrated at all 10 German university hospitals of the MIRACUM consortium.

So far, our ETL process is restricted to a bulk load of FHIR resources to OMOP CDM. This implied that all FHIR resources are read from the source. To enable the CTRSS to provide daily recruitment proposals, our ETL process has to be executed every day as a full load. However, an everyday full load is not efficient because often only a small amount of source data has changed during loading periods, which results in unnecessary long execution times considering a full load for daily executions. Consequently, the computational effort that comes with the daily execution of the bulk load is not efficient in the context of the CTRSS.

Thus, a new approach is needed to only process FHIR resources that were created, updated, or deleted (CUD) since the last execution of the ETL process once an initial load has been executed. This loading option is known as "incremental loading."

Objective

To keep the bulk load option for dedicated studies and still be performant toward daily changes in the source data, a combination of bulk load and incremental load is needed. To reduce the additional effort in implementing a second independent ETL process for incremental loading, it is our aim to extend our existing ETL process with the option of incremental loading. During our research, we focused on the following four research questions:

- What requirements need to be considered when integrating incremental loading into our existing ETL process design?

- What approaches already exist for incremental ETL processes?

- How can the identified requirements from research question one be implemented in our existing ETL process design?

- Does incremental loading provide an advantage over daily bulk loading?

Methods

Analysis of the Existing ETL FHIR-to-OMOP process

To determine the requirements for integrating incremental loading into our existing ETL process design, we performed an impact analysis focusing on the whole ETL process, as well as, in more detail, the three main components of it, namely, Reader, Processor, and Writer, as presented by Peng et al. [2] Regarding the whole ETL process, the following three requirements were needed:

- Requirement A: It is necessary to provide the user with the ability to distinguish between bulk loading and incremental loading.

- Requirement B: For incremental loading, it is further essential that the Reader of the ETL process is able to detect changes in the source system and reads only CUD-FHIR resources on a daily basis.

- Requirement C: During the processing of updated and deleted FHIR resources, duplicates and obsolete data should be avoided in OMOP CDM to guarantee data correctness.

Considering the semantic mapping from FHIR MI-I Core Data Set (CDS) to OMOP CDM and the Writer of the ETL process, as described by Peng et al. [2], incremental loading has no impact on both. In summary, incremental loading requires an adjustment of the implementation of the Reader and Processor.

Literature review

To identify approaches that might be adaptable to our existing ETL design and fulfill the three requirements in the previous section, we conducted a first literature review on July 14, 2021; a second one on November 28, 2022; and a third one on February 22, 2023 (Multimedia Appendices 1, 2, and 3). Table 1 includes the search strings and the number of results for three literature databases.

| |||||||||||||||

We included only articles from 2011 to 2022 in English. After removing duplicates, 51 items were left. These were screened independently by two authors (EH and MZ). Through the title and abstract screening, we identified 12 relevant articles. After the screening of the full texts, we included eight articles within our research. Reasons for excluding the other articles were other meanings of the abbreviation “ETL,” ETL tools without regard to theoretical approaches of incremental loads, focus on application instead of ETL process and theoretical approach, and quality and error handling without focus on a theoretical approach.

Only two of the eight articles addressed ETL processes for loading patient data into OMOP CDM. Lynch et al. [16] introduced an approach for incremental transformation from the data warehouse to OMOP CDM to prevent incremental load errors. They suggest basing the development on a quality assurance (QA) process dependent on the data quality framework by Kahn et al. [17] Furthermore, they generated ETL batch tracking ids for each record of data during the transformation to OMOP CDM. For 1:1 mappings, they created custom columns in the standardized OMOP CDM tables, and for 1:n or n:1 mappings, they used a parallel mapping table to store the ETL batch id and a link to the corresponding record in OMOP CDM. Secondly, Lenert et al. [18] describe an automated transformation of clinical data into two CDMs (OMOP and PCORnet database) by using FHIR. Therefore, they use the so-called subscriptions of FHIR resources. These subscriptions trigger a function to create a copy of the FHIR resource and its transmission into another system whenever a FHIR resource is created or updated.

Despite OMOP CDM being the target database, the literature search revealed different concepts for incremental ETL itself. Kathiravelu et al. [19] described the caching of new or updated data in a temporary table. Of the eight articles, seven described various methods for incremental updates, particularly focusing on change data capture (CDC). All describe different categories of CDC, like timestamp-based, audit column–based, trigger-based, log-based, application programming interface–based, and data-based snapshots [16,18,20-24]: (1) Lynch et al. [16] and (2) Lenert et al. [18] focused on triggers; (3) Wen [20] focused on timestamps and triggers; (4) Thulasiram and Ramaiah [21] and (5) Sun [22] focused on timestamps; (6) Hu and Dessloch [23] focused on timestamps, audit columns, logs, triggers, and snapshots; and (7) Wei Du and Zou [24] focused on snapshots and MapReduce.

In summary, the literature review revealed adaptable approaches, which can be applied for the implementation of requirements B and C. However, no approaches could be found in the literature for requirement A. For this reason, we have to define a new method to enable both bulk and incremental loading in one ETL process. The concrete integration of the approaches into our existing ETL design is described in more detail in the following sections.

Incremental ETL process design

Enabling both bulk and incremental loading

For the specification, if the ETL process should be executed as bulk or incremental load, we added a new Boolean parameter in the configuration file of the ETL process called APP_BULKLOAD_ENABLED. According to the desired loading option, the parameter has to be adjusted before executing the ETL process, with “true” results in a bulk load and “false” results in an incremental load. During the execution of the ETL process, this parameter is further taken into account for the Reader and Processor of the ETL process [2] to distinguish between the needs of bulk and incremental load (e.g., to ensure that the OMOP CDM database is not emptied at the beginning of the ETL process execution during an incremental load).

Focusing on CUD-FHIR resources since the last ETL execution

Our purpose of incremental loading was to focus only on CUD-FHIR resources since the last time the ETL process was executed (whether as bulk or incremental load). Consequently, the ETL process for incremental load has to filter only CUD-FHIR resources from the source. The literature research showed that there are various CDC approaches to detect changes in the source. In our case, FHIR resources in the FHIR Gateway and FHIR Server contain metadata, such as a timestamp indicating when an FHIR resource was created, updated, or deleted in the source (FHIR Gateway: column last_updated_at; FHIR Server: meta.lastUpdated). That is why we used the timestamp-based CDC approach to filter FHIR resources, which have a timestamp specification after the last ETL execution time.

To ensure filtering for the incremental load, we added two new parameters in the configuration file of the ETL process: DATA_BEGINDATE and DATA_ENDDATE. Both parameters have to be adjusted before executing the ETL process as incremental load. During the execution, the ETL process takes these two parameters into account and only reads FHIR resources from the source that has a metadata timestamp specification that is in [DATA_BEGINDATE, DATA_ENDDATE].

Guarantee data correctness in OMOP CDM

To avoid duplicates in OMOP CDM when processing updated and deleted FHIR resources, their existence in OMOP CDM has to be checked during processing. The FHIR resources themselves do not have a flag that indicates whether they are new or have been changed. Only deleted FHIR resources can be identified by a specific flag in the metadata. To assess the existence of FHIR resources in OMOP CDM, a comparison of the data of the read FHIR resources with the data already available in OMOP CDM has to be done.

The literature research showed an approach to generate a unique tracking id per source data during the transformation process and its storage in OMOP CDM. [16] We decided against the approach of generating an additional id because FHIR resources already contain two identifying FHIR elements themselves: id and identifier. The id represents the logical id of the resource per resource type, while the identifier specifies an identifier that is part of the source data. Both FHIR elements allow the unique identification of an FHIR resource per resource type. However, the standardized OMOP CDM tables do not provide the possibility to store this information from FHIR. Furthermore, OMOP CDM has its own primary keys for each record in a table independent of the id and identifier used in FHIR. Consequently, after transforming FHIR resources to OMOP CDM, the identifying data from FHIR resources will be lost.

To solve this problem, we need to store the mapping between the id and identifier used in FHIR with the id used in OMOP CDM. Due to the fact that the id of an FHIR resource is only unique per resource type and one FHIR resource can be stored in OMOP CDM in multiple tables, we additionally have to specify the resource type. As mentioned above, Lynch et al. [16] presented an approach to store the mapping between tracking ids for source records and ids used in OMOP CDM by using a mapping table and custom columns in OMOP CDM. We have slightly customized this approach and adapted it into our ETL design. Contrary to the use of both mapping tables and custom columns, we considered each approach separately.

Our first approach uses mapping tables for each FHIR resource type in a separate schema in OMOP CDM. With this approach, the Writer of the ETL process has to fill additional mapping tables beside the standardized tables in OMOP CDM. Our second approach focuses on two new columns in the standardized tables in OMOP CDM called “fhir_logical_id” and “fhir_identifier.” These columns store the id and identifier of the FHIR resource. Furthermore, we appended an abbreviation of the resource type as a prefix to the id and identifier of FHIR (eg, “med-” for Medication, “mea-” for MedicationAdministration, or “mes-” for MedicationStatement FHIR resources). In consequence, the combination of the prefix with the id and identifier and its storage in OMOP CDM enables the unique identification of FHIR resources in OMOP CDM. Since the mapping tables and two new columns are required exclusively for the ETL process, the analysis of data across multiple OMOP CDM databases is not affected.

Based on the unique identification of FHIR resources in OMOP CDM, it is now possible to guarantee data correctness in OMOP CDM during incremental loading. Figure 1 shows the exemplary data flow for Condition FHIR resources for the second approach with two new columns. First, the Processor extracts the id and identifier used in FHIR. After that, the prefix is added to both values. Regardless of whether the data was created, updated, or deleted in the source, the ETL process next verifies each processed FHIR resource’s existence in OMOP CDM using the mapping tables or two new columns. During the verification, records are deleted in OMOP CDM if they were found. This approach is also used for updated FHIR resources to avoid incomplete updates for cross-domain mappings in OMOP CDM. Consequently, we do not perform updates on the existing records in OMOP CDM except Patient and Encounter FHIR resources to ensure referential integrity in OMOP CDM. In case FHIR resources are marked as deleted in the source, the processing is completed. Otherwise, the same semantic mapping logic as for bulk loading [2] applies afterward, and the data of the FHIR resources are written to OMOP CDM as new records with new OMOP ids.

|

Evaluation of the incremental load process

For the evaluation of the incremental load process, we defined and executed two ETL test designs. First, we tested which approach to store the mapping between id and identifier used in FHIR with the id used in OMOP CDM was the most performant. For this purpose, we implemented a separate ETL process version for each approach. Afterward, we executed the ETL process as bulk load first and as incremental load afterward, and compared the execution times between the mapping table approach and the column approach. For further evaluation of the incremental load process, we have chosen the most performant approach, resulting in a new optimized ETL process version for the second ETL test design.

To test the achievement of the three requirements identified during the initial analysis of our ETL process, we defined and executed a second ETL test design (Table 2) that compares the results of bulk loading with those of incremental loading regarding performance and data correctness. Our hypotheses here are that the execution time of incremental loading alone is less than bulk loading, including daily updates, and that the amount of data per table in OMOP CDM is identical after incremental loading and bulk loading, including daily updates.

| ||||||||

For both ETL test designs, we used a total of 3,802,121 synthetic FHIR resources version R4 based on the MI-I CDS version 1.0, which were generated using random values. Furthermore, we simulated CUD-FHIR resources for testing incremental loading for one day. For the simulation, we checked the frequency distribution of CUD data per domain in our source system with real-world data for eight days and calculated the average value (see Multimedia Appendix 4). In addition, we set up one OMOP CDM v5.3.1 database as the target and executed the ETL process according to our test designs. For both ETL tests, we tracked the execution times based on the time stamps in the logging file of the ETL process until the corresponding job finished successfully. In a second step, we recorded the data quantity for each filled table in OMOP CDM and compared the results between the two ETL loading options for the second ETL test.

Results

Architecture of the ETL process

The implemented ETL process extension for incremental loading of FHIR resources to OMOP CDM has not changed the basic architecture of the ETL process, as proposed by Peng et al. [2], consisting of Reader, Processor, and Writer (Figure 2). The only addition is a switch at the beginning of the ETL process, which allows the user to select between bulk load and incremental load (requirement A). Moreover, we configured the Reader for incremental loading of CUD-FHIR resources on a daily basis (requirement B). In the Processor, we added the logic of the verification of CUD-FHIR resources and their deletion from OMOP CDM if they already exist (requirement C).

|

The ETL process covering bulk and incremental load is available in the OHDSI repository ETL-German-FHIR-Core. [25]

Findings of the first ETL test

The first ETL test focused on the performance measurement of the mapping table approach versus the column approach. First, we executed both ETL approaches as a bulk load. The column approach took about 30 minutes to transform FHIR resources to OMOP CDM. In contrast, the mapping table approach was still not finished after four hours. Therefore, we stopped the ETL execution and did not test the incremental loading anymore. Consequently, for the incremental ETL design, we decided to use the column approach due to its better performance and executed the subsequent performance evaluations with it.

Findings of the second ETL test

The second ETL test dealt with testing our two hypotheses in Table 2. First, we compared the execution times between a bulk load (three months plus one day) and an initial bulk load (three months) followed by an incremental load (one day). For this, each loading option was executed three times. Based on the results, we calculated the average execution times. The performance results (Multimedia Appendix 5) show that an initial bulk load (13.31 minutes) followed by a daily incremental load (2.12 minutes) is more efficient than an everyday full load (17.07 minutes). Looking at the percentage improvement in performance, it can be shown that incremental loading had 87.5% less execution time than a daily full load (2.12 minutes compared to 17.07 minutes). Referring to our first hypothesis, we were able to prove our initial assumption.

After the execution of both loading options, we further checked the data quantity for each filled table in OMOP CDM and compared the results of it. As shown in Table 3, both loading options resulted in the same amount of data (Multimedia Appendix 5). Consequently, we were also able to confirm our second hypothesis regarding data correctness in OMOP CDM.

| |||||||||||||||||||||||||||||||||||||||||||||

Discussion

References

Notes

This presentation is faithful to the original, with only a few minor changes to presentation, though grammar and word usage was substantially updated for improved readability. In some cases important information was missing from the references, and that information was added.