Difference between revisions of "Journal:Critical analysis of the impact of AI on the patient–physician relationship: A multi-stakeholder qualitative study"


One of the themes that emerged in the results was the potential transformation of patient–physician relationships, a concern which has already been noted in the literature [23] and other qualitative studies. [11,12,17] All of the participants emphasized the importance of the patient–physician relationship and the need to preserve its core values, which are based on trust and honesty, achieved through open and sincere communication. In the realm of mental health, several studies [30–32] have endorsed the use of AI in an assistive role as a means of improving openness and communication while avoiding potential complications in interpersonal relationships, since patients in a mental health context may be more willing to disclose personal information to AI than to physicians.

Although the majority of the participants in this study had a positive outlook on the potential for AI to improve the patient–physician relationship, the physicians feared that implementing such technology could reduce physician–patient interactions and erode the established trust in the patient–physician relationship. [33] In this relationship, as perceived by the respondents, the AI cannot replace the physician's skills, visual observations, and emotional recognition of the patients, nor the experience and intuition that guide the physician's decision-making. Moreover, substituting humans with AI in healthcare would not be possible because healthcare relies on relationships, empathy, and human warmth. [34] Therefore, patients, physicians, and hospital managers view the implementation of AI in healthcare as a necessary synergy between physicians and AI, with AI providing technical support, and physicians providing a human-specific approach in the form of a partnership. [35] Thus, the AI can become the physician's right hand [29], augmenting the physician's existing capabilities. [36] Ideally, this supportive and augmenting role of AI-based tools within the patient–physician relationship would be followed up using models of shared decision-making. [14]

It is important to note that AI-based tools were perceived only as potentially empowering physicians [37] in particular. Hospital managers argued that future development would need to be geared towards assisting physicians rather than providing a tool for patients’ (self-)diagnosis, which might have a negative effect on younger generations in particular, who are more inclined towards the use of new technology. Some parallels can be drawn with the rise of ChatGPT and its potentially risky contribution to the "AI-driven infodemic." [5]

===Alleviating workload and reducing the administrative burden by saving time and bringing the patient to the center of the caring process===

Moreover, all stakeholder groups recognized the potential of AI to improve the existing patient–physician relationship, considering the current state of medicine and healthcare, which is confronted with excessive workload and administrative burden. In particular, physicians view AI as an opportunity to replace or automate certain tasks [13], such as the manual input of data, which often reduces the patient consultation to a meeting with the screen/monitor, involving limited physical contact with the patient. Furthermore, the patients anticipated that the implementation of AI might minimize waiting times and administration in healthcare. However, the perception of AI-based tools being a solution to the persisting issues in healthcare reveals that, among our respondents, AI is seen as a panacea. Specific tasks being taken over by AI-based tools will allow more time for physicians to spend with patients or for professional growth. [29] However, it could be argued that this extra time might also be used to see more patients each day, which in the end might have the opposite effect to our respondents’ expectations. Recent reports have implied that, with the implementation of AI, productivity might increase, and greater efficiency of care delivery will allow healthcare systems to provide more and better care to more people. [38] The recent AI economic model for diagnosis and treatment [39] demonstrated that AI-related reductions in time for diagnosis and treatment could potentially lead to a threefold increase in patients per day in the next 10 years.

The respondents did not perceive that the delegation of certain tasks to AI would result in AI replacing physicians, nor in the physicians’ role being threatened, because their role is not only to provide a diagnosis but to fully engage with the patients, offering consolation, consultations, and more. Sezgin [40] provides concrete instances of the ways in which AI enhances healthcare without taking the place of physicians. For example, AI-assisted [[Clinical decision support system|decision support systems]] work with [[magnetic resonance imaging]] and ultrasound equipment to help physicians with diagnosis, and AI enhances speech recognition in dictation devices that record radiologists’ notes. Nagy and Sisk [34] argue that although AI-based tools might reduce the burden of administrative tasks, they might also increase the interpersonal demands of patient care. Therefore, AI-based tools have the potential to place the patient at the center of the caring process, safeguarding the patients’ autonomy and assisting them in making informed decisions that align with their values. [41]

===The potential loss of a holistic approach by neglecting humanness in healthcare===

The essential aspects of the patient–physician relationship, such as effective communication and personal interaction, are already under threat due to excessive administrative tasks, physician workload, and technology that hinders direct patient interaction. Indeed, physicians endorse the need for synergy between AI and physicians; they consider the physical contact between physicians and patients as something that must be preserved, and which could be threatened by the implementation of AI. This is due to the perception that interaction between patients and physicians will likely decrease, leading to alienation, and excessive digitalization could further reduce communication and connection within patient–physician relationships. Sparrow and Hatherley [42] emphasize the paradox of the expectations of AI, which predict that, on the one hand, it will help improve the physician's efficiency but, on the other, taking into consideration the economics of healthcare, it might erode the empathic and compassionate nature of the relationship between patients and physicians as a result of increased numbers of patient consultations each day, due to the physician's increased efficiency.

In contrast, some studies [10,14] have reported that AI-based tools represent an opportunity to enhance the humanistic aspects of the patient–physician relationship by requiring enhanced communication skills in explaining to patients the outputs of AI-based tools that might influence their care. Explaining how a particular decision has been made is the first step in building a trusting relationship between the physician, patient, and AI [24], which might lead towards a more productive patient–physician relationship by increasing the transparency of decision-making. [25] A lack of explainability might make it problematic for physicians [26] to take responsibility for decisions involving AI systems, as the participants in this study emphasized that physicians should retain ultimate responsibility. The ability of a human expert to explain and reverse-engineer AI decision-making processes is still necessary, even in situations in which AI tools can supplement physicians and, in certain circumstances, even significantly influence the decision-making process in the future. [27] Therefore, keeping humans in the loop with regard to the inclusion of AI in healthcare will contribute to preserving the humane aspects of healthcare. Otherwise, the holistic elements of care might be lost and the dehumanization of the healthcare system [29] would render AI a severe disadvantage, rather than a significant benefit.

==Strengths and limitations==

Caution should be taken when interpreting the results of the study, as there are some limitations. The sample size of the participants was not calculated using power analysis; instead, the data saturation approach was taken within each specific category of key stakeholders. Therefore, it is impossible to make generalizations for the entire stakeholder groups. As participants were recruited using purposive sampling and the snowballing method, a degree of sample bias is acknowledged because some of the individuals who were keen on and familiar with AI technology expressed their willingness to participate in the study; these people might have had higher medical and digital literacy levels compared to the average members of the included stakeholder groups in Croatia. Another limitation is the timeframe of the research, because it was conducted during the [[COVID-19]] [[pandemic]], when meetings between patients and physicians were reduced to a minimum. As a result, participants may have been more aware of the impact of AI on the patient–physician relationship.

==Conclusions==

This multi-stakeholder qualitative study, focusing on the micro-level of healthcare decision-making, sheds new light on the impact of AI on healthcare and the future of patient–physician relationships. Following the recent introduction of ChatGPT and its impact on public awareness of the potential benefits of AI in healthcare, it would be interesting for future research endeavors to conduct a follow-up study to determine how the participants’ aspirations and expectations of AI in healthcare have changed. The results of the current study highlight the need to use a critical awareness approach to the implementation of AI in healthcare by applying critical thinking and reasoning, rather than simply relying upon the recommendation of the algorithm. It is important not to neglect clinical reasoning and consideration of best clinical practices, while avoiding a negative impact on the existing patient–physician relationship by preserving its core values, such as trust and honesty situated in open and sincere communication. The implementation of AI should not be allowed to cause the dehumanization and deterioration of healthcare but should help to bring the patient to the center of the healthcare focus. Therefore, preservation of the trust-based relationship between patient and physician is urged, by emphasizing a human-centric model involving both the patient and the public even at the design stage of AI-based tool development.

==Acknowledgements==

We would like to thank our former research group member Ana Tomičić, PhD, who also participated in conducting and transcribing the interviews, as well as our former student research group members Josipa Blašković and Ana Posarić, for their assistance with the transcription of interviews. Furthermore, we want to express our immense gratitude to two anonymous reviewers who provided us with detailed, valuable, and constructive feedback for improving the manuscript. We also wish to acknowledge that the credit for the proposed future research avenue of a follow-up study following the introduction of ChatGPT goes to one of our two reviewers.

===Funding===

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Hrvatska Zaklada za Znanost HrZZ (Croatian Science Foundation) (grant number UIP-2019-04-3212).

===Ethical approval===

The ethics committee of the Catholic University of Croatia approved this study (number: 498-03-01-04/2-19-02).

===Conflict of interest===

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

==Supplementary material==

* [https://journals.sagepub.com/doi/suppl/10.1177/20552076231220833/suppl_file/sj-docx-1-dhj-10.1177_20552076231220833.docx Supplementary material 1] (.docx)

==Footnotes==

Revision as of 23:25, 7 March 2024

| Full article title | Critical analysis of the impact of AI on the patient–physician relationship: A multi-stakeholder qualitative study |
|---|---|
| Journal | Digital Health |
| Author(s) | Čartolovni, Anto; Malešević, Anamaria; Poslon, Luka |
| Author affiliation(s) | Catholic University of Croatia |
| Primary contact | Email: anto dot cartolovni at unicath dot hr |
| Year published | 2023 |
| Volume and issue | 9 |
| Article # | 231220833 |
| DOI | 10.1177/20552076231220833 |
| ISSN | 2055-2076 |
| Distribution license | Creative Commons Attribution-NonCommercial-NoDerivs 4.0 International |
| Website | https://journals.sagepub.com/doi/10.1177/20552076231220833 |
| Download | https://journals.sagepub.com/doi/reader/10.1177/20552076231220833 (PDF) |

This article should be considered a work in progress and incomplete. Consider this article incomplete until this notice is removed.

Abstract

Objective: This qualitative study aims to present the aspirations, expectations, and critical analysis of the potential for artificial intelligence (AI) to transform the patient–physician relationship, according to multi-stakeholder insight.

Methods: This study was conducted from June to December 2021, using an anticipatory ethics approach and sociology of expectations as the theoretical frameworks. It focused mainly on three groups of stakeholders, namely physicians (n = 12), patients (n = 15), and healthcare managers (n = 11), all of whom are directly related to the adoption of AI in medicine (n = 38).

Results: Interviews were conducted with patients (40% of the sample; 15/38), physicians (31% of the sample; 12/38), and health managers (29% of the sample; 11/38). The findings highlight the following: (1) the impact of AI on fundamental aspects of the patient–physician relationship and the underlying importance of a synergistic relationship between the physician and AI; (2) the potential for AI to alleviate workload and reduce administrative burden by saving time and putting the patient at the center of the caring process; and (3) the potential risk to the holistic approach by neglecting humanness in healthcare.

Conclusions: This multi-stakeholder qualitative study, which focused on the micro-level of healthcare decision-making, sheds new light on the impact of AI on healthcare and the potential transformation of the patient–physician relationship. The results of the current study highlight the need to adopt a critical awareness approach to the implementation of AI in healthcare by applying critical thinking and reasoning. It is important not to rely solely upon the recommendations of AI while neglecting clinical reasoning and physicians’ knowledge of best clinical practices. Instead, it is vital that the core values of the existing patient–physician relationship—such as trust and honesty, conveyed through open and sincere communication—are preserved.

Keywords: artificial intelligence, patient-physician relationship, ethics, bioethics, qualitative research, multi-stakeholder approach

Introduction

Recent developments in large language models (LLMs) have attracted public attention in regard to artificial intelligence (AI) development, raising many hopes among the wider public as well as healthcare professionals. After ChatGPT was launched in November 2022, producing human-like responses, it reached 100 million users in the following two months. [1] Many suggestions for its potential applications in healthcare have appeared on social media. These have ranged from using AI to write outpatient clinic letters to insurance companies, thereby saving time for the practicing physician, to offering advice to physicians on how to diagnose a patient. [2] An AI-enabled, chatbot-based symptom checker can be used as a self-triaging and patient monitoring tool, or AI can be used for translating and explaining medical notes or making diagnoses in a patient-friendly way. [3] Therefore, the introduction of ChatGPT represented a potential benefit not only for healthcare professionals but also for patients themselves, particularly with the improved version of GPT-4. In addition to ChatGPT, various other LLMs are at different stages of development, for example, BioGPT (Massachusetts Institute of Technology, Boston, MA, USA), LaMDA (Google, Mountain View, CA, USA), Sparrow (DeepMind AI, London, UK), Pangu Alpha (Huawei, Shenzhen, China), OPT-IML (Meta, Menlo Park, CA, USA), and Megatron-Turing NLG (Nvidia, Santa Clara, CA, USA). [4]

However, despite the wealth of potential applications for LLMs, including cost-saving and time-saving benefits that can be used to increase productivity, there has been widespread acknowledgement that they must be used wisely. [3] Therefore, the critical awareness approach mostly relates to underlying ethical issues such as transparency, accountability, and fairness. [5] Critical thinking is essential for physicians to avoid blindly relying only on the recommendations of AI algorithms, without applying clinical reasoning or reviewing current best practices, which could lead to compromising the ethical principles of beneficence and non-maleficence. [6] Moreover, when using LLMs in the healthcare context, the provision of sensitive health information by feeding the algorithmic black box might be met with a lack of transparency in terms of the ways in which the commercial companies will use or store such information. In other words, such information might be made available to company employees or potential hackers. [4] In addition, from a public health perspective, using ChatGPT could potentially lead to an "AI-driven infodemic," producing a vast number of scientific articles, fake news, and misinformation. [5] Therefore, all of these challenges [7] necessitate further regulation of LLMs in healthcare in order to minimize the potential harms and foster trust in AI among patients and healthcare providers. [1]

Interestingly, healthcare professionals have demonstrated openness and readiness to adopt generative AI, mostly because they are excessively burdened by administrative tasks [8] and are desperately seeking a practical solution. Several medical specializations have been identified as benefiting from the use of medical AI, including general practitioners [9], nephrologists [10], nuclear medicine practitioners [11], and pathologists [12], with the technology reportedly having a direct impact on physicians’ roles, responsibilities, and competencies. [12–14] Although the above-mentioned potential has been recognized, various studies have noted that the implementation of medical AI would bring about certain challenges [15] and barriers [16], such as physicians’ trust in the AI, user-friendliness [17], or tensions between the human-centric model and technology-centric model, that is, upskilling and deskilling [18], which will further affect the (non-)acceptance of AI-based tools. [17]

Aims

This study seeks to present the aspirations, fears, expectations, and critical analysis of the ability of AI to transform healthcare. Therefore, this qualitative study aims to provide multi-stakeholder insights, with a particular focus on the perspectives of patients, healthcare professionals, and managers regarding the current state of healthcare, the ways in which AI should be implemented, the expectations of AI, the synergistic effect between physicians and AI, and its impact on the patient–physician relationship. These results will provide some clarification regarding questions that have been raised about openness towards embracing AI, and critical awareness of AI's potential limitations in clinical practice.

Methods

This study was conducted from June to December 2021 as a multi-stakeholder (n = 75) qualitative study. It employs an anticipatory ethics approach, an innovative form of ethical reasoning that is applied to the analysis of potential mid-term to long-term implications and outcomes of technological innovation [19], and the sociology of expectations, which focuses on the role of expectations in shaping scientific and technological change. [20,21] These are the theoretical frameworks underpinning the design of the qualitative study, in which the questions were followed by two scenarios set in 2030 and 2023 to stimulate discussions. Although both referred to the digital health context, the first scenario focused on the use of an AI-based virtual assistant, while the second focused on self-monitoring devices. This article focuses only on the first scenario (see Appendix I) as it was embedded in the clinical setting and depicts the future care provision and transformation of healthcare. The study follows the consolidated criteria for reporting qualitative research (COREQ) guidelines [22] (see Appendix II). Furthermore, it was approved by the Catholic University of Croatia Ethics Committee (number: 498-03-01-04/2-19-02).

Participants and recruitment

A purposeful random sampling method was employed. Participants were included if they belonged to specific key stakeholder groups (physicians, patients, or hospital managers), while people who were under 18 years of age or who did not fall into any of the specified key stakeholder groups were excluded. The participants were recruited using the snowballing technique until data saturation was reached, respecting and ensuring data heterogeneity and aiming for maximum variation in variables such as stakeholder category, age, gender, and location. Participants (n = 75) were identified as stakeholders in the healthcare context: patients, physicians, IT engineers, jurists, hospital managers, and policymakers (Figure 1). Initially, an email was sent to invite participation in the research. Some of the invitees did not respond to the email, though no one provided reasons for declining. Of those who agreed to participate, some opted to postpone the interviews due to other commitments, and some interviews were ultimately not conducted.


Considering the context, with the recent introduction of ChatGPT and the outlined aim, it was decided to focus mainly on three groups that were directly affected by the adoption of AI in medicine (n = 38), which were physicians (n = 12), patients (n = 15), and healthcare managers (n = 11). All participation was voluntary and, prior to the interview, participants received all of the information they needed to provide informed consent.

Data collection and analysis

Semi-structured interviews were conducted both in-person (at locations convenient for the participants or at the research group's work office) and online, using the Zoom platform, by researchers experienced in qualitative research. Only the participant and the researcher attended the interviews. The initial interview guide was based on the authors’ previous desk research on recognized ethical, legal, and social issues in the development and deployment of AI in medicine. [23] It was inspired by similar studies [24–26] and was pilot-tested on a group of 23 stakeholders. Later, the interview guide was adapted as the study continued to take account of emerging themes until data saturation was reached. All interviews were recorded using a portable audio recorder and later transcribed; the average length of interviews was 47 minutes. Transcripts were not provided to participants for comments or corrections. The transcribed interviews were entered into the NVivo qualitative data analysis software. Researchers familiarized themselves with the material by reading the transcripts and taking notes to gain deeper insights into the data. Next, a thematic analysis was conducted. [27] Following that, an open coding process was initiated for the interviews (n = 11). Based on the initial codes, the researchers agreed on thematic categories [28], leading to the development of the final codebook, which was then used to code the remaining interviews. Finally, the researchers combined and discussed themes for comparison and reached a consensus on how to define and use them. All interviews were analyzed in the original language (Croatian), and the quotes presented in this article have been translated into English.

Results

Participant demographics

This study focuses on 38 interviews conducted with patients (comprising 40% of the sample; 15/38), physicians (31% of the sample; 12/38), and health managers (29% of the sample; 11/38). In terms of gender, 53% of the participants were female (20/38), while 47% (18/38) were male. The participants’ ages ranged from the 18 to 24 age group to the 65 and older category. Regarding the geographical distribution, most respondents (74%; 28/38) hailed from urban centers, nine participants (23%; 9/38) were from the urban periphery, and one participant resided in the rural periphery (Table 1). A minority of patients (33%; 5/15) regularly used technology for health monitoring, such as applications and smart devices (e.g., smartwatches), in their daily routines.

| |||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||

Thematic analysis

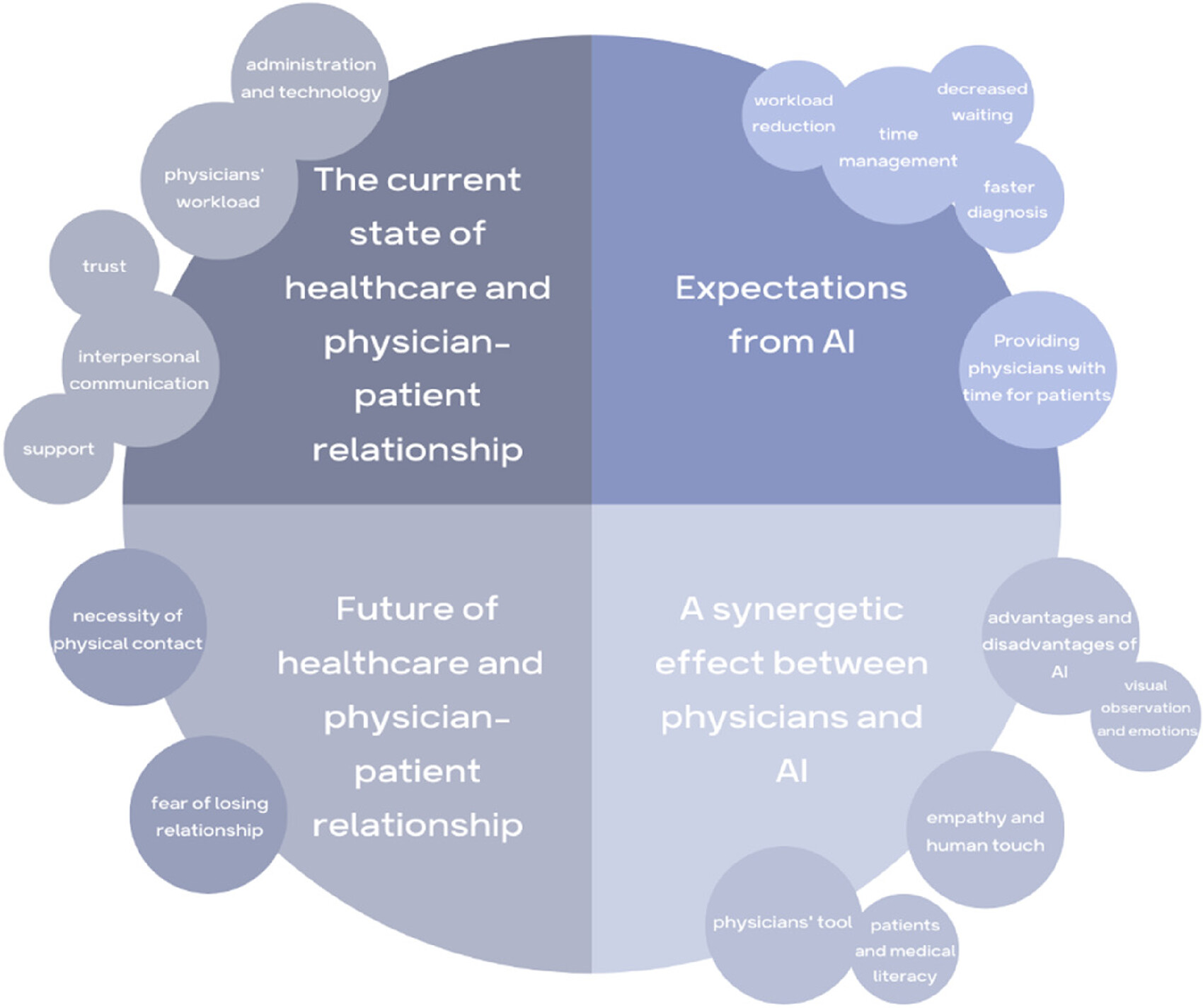

Four themes and subthemes (Figure 2) were identified: (1) the current state of healthcare and the patient–physician relationship; (2) expectations of AI; (3) a synergetic effect between physicians and AI; and (4) the future of healthcare and the patient–physician relationship.


The current state of healthcare and the patient–physician relationship

Research participants reflected on the current state of healthcare, discussing both positive and negative aspects. Their responses encompassed a wide range of topics related to healthcare, including health policies, infrastructure, physicians’ qualifications, patients’ medical literacy, weak hospital management, and financial losses. While each of these themes is intriguing for analysis, this section will focus on the current state of the patient–physician relationship, and specifically those aspects highlighted by the participants as being the essential, positive, or negative aspects of this relationship.

Many participants emphasized trust, honesty, and effective interpersonal communication as fundamental aspects of the patient–physician relationship. Patients stressed the importance of being heard by their physicians and actively engaging in a shared decision-making process.

I think the most important thing for me is that we communicate about everything. To talk. To trust what he tells me. (Patient 5)

It's important that he[a] listens to me and doesn't jump to conclusions before I fully explain the issue. (Patient 9)

It is crucial that he listen to me and that I listen to him. That's really the most important thing because if he does not listen to me, if it only gets heard in one ear, coming here is a waste of time. (Patient 12)

Physicians also emphasized the importance of patients having trust in them, identifying openness and honesty as directly enabling the identification of the fastest and optimal treatment approach. Furthermore, they highlighted the significance of patients’ ability to articulate their condition effectively, enabling them to convey their symptoms clearly to the physician.

When they have trust in me, it becomes much easier for me to treat them. (Physician 3)

I have always strived, both in direct and indirect interactions at work, to listen to the patient, guide them, and provide advice in good faith, with the simple aim of resolving any issues. (Physician 4)

The most crucial aspect is establishing easy communication and trust in what we do. (Physician 12)

Actually, it's always about honesty. Knowing that, when patients speak, they tell the exact truth and don't deceive. That's an essential factor for me. Another important factor is the patient's capacity to communicate clearly. To correctly interpret their symptoms and condition of health. To convey a comprehensive picture to me. (Physician 11)

Some physicians emphasized that patients often come to them specifically to have a conversation. In other words, patients sometimes approach physicians not only for health issues but also for support and simply to be heard.

Many people come to the physician just to vent. They come to vent, and then we find some psychological component in a good percentage of them. I believe it's important for them to sit down with the physician, have a chat. (Physician 11)

Some patients reported that they have developed a friendly relationship with their physician due to long-term treatment.

The friendship that is built over the years is essential because I have been with this physician for twenty years, and I simply feel like… When I come to her, it's like talking to a friend – we chat a little, laugh, and then I start talking about my [health] problems. (Patient 11)

A friendly patient–physician relationship must be developed. (Patient 5)

Patients also expressed dissatisfaction that they sometimes do not receive enough care and attention from physicians. However, they were aware that the reason for this is often the physicians’ overwhelming workload and the limited time they have available.

It feels selfish to ask because I know they have a lot of work to do, but I don't like being just a number and a piece of paper in a drawer. (Patient 10)

You feel like they want to get rid of you. To get it done as quickly as possible, and that's it. (Patient 1)

Some participants pointed out that the paperwork is a problem. According to them, physicians are more focused on paperwork than on the individuals sitting in front of them.

I'd prefer if the physician didn't just look at the papers but lifted their head, talked to me, gave me a look, and conducted an examination if needed, which is equally important, because lately, it often seems to be reduced to just paperwork. (Patient 15)

Physicians also expressed dissatisfaction with the fact that a part of their consultation time with patients is spent on tasks that could be done differently or replaced by other methods.

Suppose I spend time measuring their blood pressure at every consultation, explaining to them that I'm referring them for certain tests, and prescribing medications every time. In that case, I have less time for purposeful [laughs] procedures that could really impact treatment outcomes, right? (Physician 9)

One aspect that physicians highlighted as being time-consuming was the administrative tasks undertaken during patient consultations. Similarly, manual data collection and data entry often mean that the physician spends the consultation looking at a screen, with limited actual contact with the patient in front of them.

Because, honestly, all that typing, printing, and confirming of test results and such, I waste a lot of time on it, and the actual examination of the patient and talking to them, I believe, should be retained, but in a way that avoids the need for me to look at the screen constantly. Personally, when I gather data, sometimes I forget that, while I'm typing and looking at the screen, I'm not really looking at the patient themselves, and I end up missing information I could gather just by observing them. (Physician 3)

Today, they are mostly on the computer, and they have to type everything, so they have very little time to dedicate to us patients. (Patient 1)

Somehow, since computers were introduced in healthcare, a visit to one physician, a neurologist, for example, would be like this: I spent 5 minutes with him. Out of those 5 minutes, he would talk to me for a whole minute, and then spend 4 minutes typing on his computer. (Patient 13)

I want to be polite, look at him, and talk to him, but on the other hand, you see this “click, click”, and if I don't click, I can't proceed. And then he says, “Why are you constantly looking at that [computer]?” – I mean, it's impolite, right? But I can't continue working because I have to type this and move on to the next… (Hospital manager 4)

Furthermore, hospital manager 4 also expressed concern about the current state of healthcare by pointing out that physicians have already become too focused on technology, neglecting personal contact with patients.

We forget that psychosomatics is 80–90%. The placebo effect, communication, positive thinking, and interaction are crucial. It's highly important, but we have neglected it and focused too much on technology. (Hospital manager 4)

Hospital managers felt that this issue might particularly affect young physicians who have not yet developed the skills that older physicians possess, due to their limited experience of patient interaction, and were further concerned that such physicians may not develop these skills because of excessive reliance on technology. This primarily pertains to patient communication, observation, and support.

For instance, when the electricity goes out, younger physicians will call me to ask whether they should examine patients or wait for the computer to start up. (Hospital manager 9)

Participants pointed out that communication and trust between patients and physicians are the keys to a good relationship. On the other hand, they reported often feeling that relationship difficulties are due to insufficient time caused by administrative tasks and the workload burden on physicians. Interestingly, existing non-AI-based technology, to the extent that it is now present, was perceived as being a negative factor and an obstacle to direct interaction between physicians and patients.

Expectations of AI

Regarding AI, there was no consensus among the participants. Their perceptions ranged from highly positive to entirely negative. This might be because most participants (especially patients) had no prior exposure to AI, and existing AI solutions have not had a comparable impact on public awareness to that of ChatGPT. However, by considering hypothetical scenarios, participants were able to reflect on their perspectives and attitudes towards the implementation of AI. In particular, physicians emphasized that AI could help to alleviate their workload by relieving them of certain tasks.

But it will dramatically ease our workload, won't it? Considering that there is currently a shortage of personnel and increased workload, it would be a very good solution. (Physician 12)

It can be an excellent tool for speeding up the diagnostic process, increasing accuracy, and making work easier. (Physician 2)

Many of our routine tasks, which consume our daily time, can be replaced. (Physician 5)

Physicians don't have time, and the routine tasks that require lower levels of care could be performed by artificial intelligence as well… (Physician 6)

Patients also anticipated that AI could reduce waiting times and administration tasks in healthcare.

It would cut down on paperwork and waiting times for patients. (Patient 7)

It would make things much easier. There are patients who come to the physician just for a prescription and have to wait for two hours because there are 15 people ahead of them. (Patient 14)

Accelerated diagnoses and reduced waiting times for diagnosis or treatment were also notable expectations of AI that were expressed by the participants.

It can be an excellent tool for speeding up diagnosis, increasing diagnostic accuracy, and making work easier. (Physician 2)

If we had artificial intelligence that could quickly direct all of that towards a diagnosis, many unnecessary tests would be eliminated. Unnecessary tests are one of the key reasons for waiting lists. (Physician 6)

AI can also serve as a tool to bring healthcare closer to people. Specifically, facilitating speedy diagnosis can aid in preventive care and raising awareness.

In fact, it can ease and reduce barriers, making entry into the healthcare system more accessible. It can diagnose more people who wouldn't even know they have a certain condition and provide them with basic information, motivation, and a reason why they should take action in that regard. (Physician 9)

Reducing overcrowding, simplifying access to care, and faster diagnosis in the healthcare system would result in better time management. Therefore, this would free up time that could then be utilized more effectively.

Consequently, it would give me time that is currently being wasted. (Hospital manager 4)

According to the participants, the current healthcare system allows for insufficient direct interaction between patients and physicians, and the limited time allotted for communication often falls short of creating a quality foundation for further care. Hence, the biggest expectation regarding AI is that its adoption of certain tasks could create more time for physicians to spend with their patients.

Firstly, medicine would become significantly more humane, as people who truly need medical help would receive it in its full scope. Physicians don't have time, and routine tasks that require lower levels of care could be performed by artificial intelligence, so I think everyone would be satisfied; there would be no queues, everyone would receive services quickly, and, as I mentioned, what is essential, and what we can do less and less, is the time spent with the physicians, which would then truly be dedicated to the patient in a way that we cannot do now. (Physician 6)

For example, one of the most advantageous aspects is that it frees up more time for doctors to spend with patients and reduces the basically pointless standing around time. (Physician 1)

So, suppose you already have an algorithm that, for example, saves you maybe five minutes per patient, and you are not overwhelmed with work. In that case, you can spend those five minutes on the relationship between you and the patient, which is greatly mutually beneficial. (Physician 2)

For some physicians, the extra time freed up by AI would represent more time to devote to professional growth and other activities that are presently unachievable.

I could focus more on learning, organization, people, delivering news, conversations, personal development, and various other things. So, in that sense, I see only positive aspects. (Physician 7)

Hospital managers also emphasized that faster diagnosis would allow physicians to dedicate more time to patients, highlighting that physicians are not just there to provide a diagnosis but to fully engage with the patient, offering consolation, consultations, and more.

We'll reach a diagnosis more quickly [using AI], so then [physician and patient] will spend an hour and a half discussing it, and I'll encourage you, I'll comfort you, I'll provide various possibilities [to patient], and so on because I've gained some time. (Hospital manager 4)

The most significant expectations of AI that were highlighted by the participants included accelerated diagnostics, streamlined triage processes, reduction of the administrative burden, decreased waiting times and overcrowding, and improved access to healthcare services. Although it may appear that the realization of these expectations would encompass the majority of the physician's current tasks, allowing AI to fully take over the physician's job, our participants viewed it as a useful tool to free up time for physicians to focus on the core of their profession: working with patients.

A synergetic effect between physicians and AI

Although physicians would like AI to provide them with more time to dedicate to patients, there is still a fear that the implementation of such technology could actually have the opposite effect. Some participants predicted that AI could ultimately reduce the interaction between patients and physicians.

There would be fewer visits to the physician, and I think the AI system could eventually prevail. (Patient 3)

The physicians reported that AI alone cannot perform the job sufficiently well and that collaborative cooperation will always be essential. According to them, AI will never have the skills that a physician possesses, particularly when it comes to visual observations and recognizing emotions that are inherent to humans. In addition to medical knowledge, experience and intuition often play a crucial role in guiding a physician's thinking.

When you see a patient, when they walk through the door, you know what's wrong with them. Not because you've seen many like them and so on. By their appearance, by their behaviour, you know. You ask them two questions, and that's it. You understand. But artificial intelligence would have to go step by step, excluding similar things. So what might be straightforward for us, would require AI to ask 24 questions before arriving at the same conclusion we can get from two questions. (Physician 12)

Sometimes the entire field of medicine is on one side, but your gut feeling tells you something else, and in the end it turns out you are right. (Hospital manager 4)

Therefore, many physicians envision this relationship as a synergy between themselves and AI. They believe that AI could provide technical support, while they would remain responsible for the human-specific approach.

Technology should provide that technical, engineering aspect, while we physicians, as humans, should provide the empathetic part, everything that machines cannot do, but we as humans can. So, it would be an ideal combination. (Physician 6)

Physician 9 asserted that AI is better able to recognize diseases compared to physicians and that medicine should utilize this capability.

It is known that digital technologies, such as algorithms using artificial intelligence, machine learning methods, and so on, can much better recognize diseases than physicians, and this is something that we certainly need to use. (Physician 9)

Furthermore, physician 9 added that machines lack creativity and emotions, which are crucial for interpersonal relationships. Therefore, it appears that AI is unable to completely take over the diagnosis process without human assistance.

People have a role in creativity, in developing interpersonal relationships over time, which machines do not have, and in medicine, we can do a lot in this field… (Physician 9)

Patients also noted that AI cannot provide emotional support or empathy for the patient's condition.

I think that humans can express emotions, empathy, help, and give hope for a better tomorrow better than any machine. (Patient 4)

The majority of participants, including patients and physicians, viewed this new AI–physician relationship as a synergy between physicians and AI. It was generally agreed that AI can provide diagnoses but cannot offer assistance that includes empathy or compassion.

I wouldn't only rely on Cronko [AI]; I would use Cronko alongside a physician, a specialist, and so on. Why? Because Cronko doesn't feel, it lacks empathy and all those essential things that should be present when someone is feeling unwell. Cronko can't react, it can't provide help; it can only give a diagnosis. But when you visit a physician you need assistance. So, I think the whole system makes sense, but it can't function independently. (Patient 13)

Furthermore, hospital managers also saw the use of AI in healthcare as being inextricably linked to physicians. Indeed, the physician remains ultimately responsible for controlling and validating any results. Although AI may be superior in some respects, its limitations allow for physicians’ roles to be more clearly defined.

And for this reason, the employment of artificial intelligence is regarded as additional intelligence since, in the end, a person must have sufficient knowledge of it for the response to be relevant and logical. (Hospital manager 11)

AI helps us understand our limitations, but also encourages us to think about the simple way of doing things, as everything has its limitations. Our naked eye and technology provide us with certain indications, highlighting the irreplaceable aspects of our roles. (Hospital manager 2)

Regardless of technological innovations, the managers felt that AI should never be allowed to limit face-to-face human contact. They highlighted the need for human interaction to comprehend emotions, feelings, and cognitive processes.

Regardless of technological innovations, it should never, I mean, technology should never surpass us. It can serve us excellently, and that's great, I'm very supportive of that, but that human contact and the “eye-to-eye” aspect, I believe we should never lose that. (Hospital manager 4)

This technology cannot perceive and understand other people's emotions, feelings, cognitive functions, and reasoning. You simply have to be a living being for that. (Hospital manager 3)

Additionally, some participants expressed concerns that AI could replace physicians in patients’ eyes. This was viewed as a negative aspect because patients have insufficient medical knowledge to independently understand their diagnoses or fully comprehend their own conditions.

For example, patients may not comprehend their health reports… And this is where I see a significant role for physicians, nurses, and healthcare professionals to actually make healthcare more accessible to people, right? (Physician 9)

Therefore, participants asserted that AI should be geared towards assisting physicians rather than being available to patients as a tool for (self-)diagnosis. A hospital manager expressed strong opposition to the use of AI as an aid to patients’ self-diagnosis.

Artificial intelligence should aid physicians, not advise patients to self-diagnose. I am absolutely against that. (Hospital manager 9)

Moreover, hospital manager 9 also pointed out that this approach could have negative effects, especially among younger generations who are more inclined to use technology.

The younger ones probably wouldn't go to physicians anymore because they are into these new technologies. They would diagnose themselves, and that would be a much bigger horror. (Hospital manager 9)

Interestingly, participants from all three stakeholder groups reported being open to the implementation of AI in healthcare but felt that a critical thinking approach was necessary. They considered it important to bear in mind that the implementation of AI should not herald the replacement of physicians, by expelling them from the patient–physician relationship or neglecting the importance of empathy and compassion. Moreover, implementing AI within the current patient–physician dichotomic relationship could lead to a synergy between physicians and AI, whereby AI could provide technical support, while physicians remain responsible for the human-specific approach. Therefore, this synergy can be interpreted as the patient–physician relationship of the future, depicted through several main subthemes in our interviews.

The future of healthcare and the patient–physician relationship

According to the results of this study, the participants considered effective communication and personal interaction to be the most essential aspects of the patient–physician relationship. Moreover, they felt that this relationship was already under threat due to excessive administrative tasks, physician workload, and the presence of technology that hinders direct interaction with patients. It is not surprising, therefore, that their expectations of AI involve the reduction of tasks that unnecessarily burden physicians, allowing them to dedicate more quality time to patients. Additionally, participants emphasized the need for synergy between AI and physicians. Physicians concluded that, despite technological advancements, physical contact with patients will remain crucial in the future.

Nonetheless, I wouldn't give up on, let's say, examining the patient and occasionally providing some words of comfort because that's also a very important aspect of treatment. (Physician 3)

I believe the patient-physician contact is irreplaceable. (Hospital manager 9)

One of the hospital managers predicted that physical contact would decrease, but that this would be compensated by the expertise of physicians.

In the future, there will undoubtedly be less physical contact between physicians and patients, but the future lies in the expertise of physicians. (Hospital manager 5)

Fear of the loss of human contact has proven significant in the research. Many participants expressed concerns that implementing AI could lead to the loss of human touch. Physicians felt that the interaction between patients and physicians would decrease, leading to alienation. Some patients also expressed concerns that excessive digitalization could reduce communication and connection.

I think the interaction between physician and patients would definitely decrease, leading to some sort of alienation between them. (Physician 1)

What I absolutely dislike is losing this contact with patients, and I believe we must fight against it, no matter how accurate any system might be. (Physician 5)

Excessive digitalization reduces personal contact between people, and that, in turn, reduces communication and connection, and so on. (Patient 4)

Physicians like to have a conversation with patients. Not just give a diagnosis and be done with it. They prefer to talk to patients and explain exactly what they need to do to maintain such a health condition. So, I believe that through digitalization and all those things, they won't be able to do that. (Patient 14)

Well, probably, the relationship with the physician on a personal level will become less frequent. Currently, many patients can connect with a physician and develop a personal approach over time… I think that will be less and less, you know, colder… (Patient 9)

Therefore, the implementation of AI solutions, according to some, could lead to a loss of the holistic approach to patients. Any absence of direct contact and immediate communication due to AI usage would represent the main disadvantage of using AI in healthcare.

However, I think that the holistic approach to people and human-to-human communication is lost here. So, there are advantages to this, but certainly, there will be some drawbacks. Warm words and communication between healthcare professionals that convey a sense of trust and security are often crucial to patients. I believe that will be a drawback. (Hospital manager 1)

There is a potential for AI to be used in the future for preventive measures and to encourage people to adopt healthy lifestyle habits while monitoring their health conditions. One of the physicians asserted that AI could motivate people to consult with a physician.

From another perspective, people who think they are healthy but are actually sick might be encouraged by the algorithm to consult a physician, so I would still benefit in that way. (Physician 9)

One of the problems that could arise is the lack of medical literacy. Inaccurate data, patients’ limited understanding of the diagnosis they receive, or an overreliance on data could lead to incorrect decisions being made.

When we receive a diagnosis, it usually contains many Latin terms that we don't understand. (Patient 13)

It's so easy to get lost in all that data nowadays. And you can often come across incorrect information and so on. (Physician 12)

There's a study that actually proved that people who have access to such solutions tend to think they are smart, and they believe they are right, even if the application provides them with completely wrong answers. So, they don't even engage their brains to see that it's utter nonsense. As soon as they see it came from a smartphone or someone said it, they think it must be true. So, that's also a danger – false authority, knowledge and, consequently, wrong decisions. (Hospital manager 11)

In this study, participants’ expectations regarding the future of the patient–physician relationship mainly focus on their hopes and fears about the way in which AI will transform it, for better or worse. On the one hand, there was optimism in terms of the potential for AI to be used in preventive measures to encourage people to adopt healthy lifestyle habits or even consult their physicians. On the other hand, fears were raised about decreased communication, which would impact on the bond between physicians and patients, and the loss of the human touch, eroding the possibility of employing a holistic approach to patients.

Discussion

This is the first qualitative study to combine a multi-stakeholder approach and an anticipatory ethics approach to analyze the ethical, legal, and social issues surrounding the implementation of AI-based tools in healthcare by focusing on the micro-level of healthcare decision-making and not only on groups such as healthcare professionals/workers [9,10,12,13,16,17], healthcare leaders [15] or patients, family members, and healthcare professionals. [29] Interestingly, the findings have highlighted the impact of AI on: (1) the fundamental aspects of the patient–physician relationship and its underlying core values as well as the need for a synergistic dynamic between the physician and AI; (2) alleviating workload and reducing the administrative burden by saving time and bringing the patient to the center of the caring process; and (3) the potential loss of a holistic approach by neglecting humanness in healthcare.

The fundamental aspects of the patient–physician relationship and its underlying core values require a synergistic dynamic between physicians and AI

One of the themes that emerged in the results was the potential transformation of patient–physician relationships, a concern which has already been noted in the literature [23] and other qualitative studies. [11,12,17] All of the participants emphasized the importance of the patient–physician relationship and the need to preserve its core values, which are based on trust and honesty, achieved through open and sincere communication. In the realm of mental health, several studies [30–32] have endorsed the use of AI in an assistive role as a way of improving openness and communication while avoiding potential complications in interpersonal relationships, since patients in a mental health context may be more willing to disclose personal information to AI than to physicians.

Although the majority of the participants in this study had a positive outlook on the potential for AI to improve the patient–physician relationship, the physicians feared that implementing such technology could reduce physician–patient interactions and erode the established trust in the patient–physician relationship. [33] In this relationship, as perceived by the respondents, the AI cannot replace the physician's skills, visual observations, and emotional recognition of the patients, nor the experience and intuition that guides the physician's decision-making. Moreover, substituting humans with AI in healthcare would not be possible because healthcare relies on relationships, empathy, and human warmth. [34] Therefore, patients, physicians, and hospital managers view the implementation of AI in healthcare as a necessary synergy between physicians and AI, with AI providing technical support, and physicians providing a human-specific approach in the form of a partnership. [35] Thus, the AI can become the physician's right hand [29], augmenting the physician's existing capabilities. [36] Ideally, this supportive and augmenting role of AI-based tools within the patient–physician relationship would be followed up using models of shared decision-making. [14]

It is important to note that AI-based tools were perceived only as potentially empowering physicians [37] in particular. Hospital managers argued that future development would need to be geared towards assisting physicians rather than providing a tool for patients’ (self-)diagnosis, which might have a negative effect on younger generations in particular, who are more inclined towards the use of new technology. Some parallels can be drawn with the rise of ChatGPT and its potentially risky contribution to the "AI-driven infodemic." [5]

Alleviating workload and reducing the administrative burden by saving time and bringing the patient to the center of the caring process

Moreover, all stakeholder groups recognized the potential of AI to improve the existing patient–physician relationship, considering the current state of medicine and healthcare, which is confronted with excessive workload and administrative burden. In particular, physicians view AI as an opportunity to replace or automate certain tasks [13], such as the manual input of data, which often reduces the patient consultation to a meeting with the screen/monitor, involving limited physical contact with the patient. Furthermore, the patients anticipated that the implementation of AI might minimize waiting times and administration in healthcare. However, the perception of AI-based tools being a solution to the persisting issues in healthcare reveals that, among our respondents, AI is seen as a panacea. Specific tasks being taken over by AI-based tools will allow more time for physicians to spend with patients or for professional growth. [29] However, it could be argued that this extra time might also be used to see more patients each day, which in the end might produce the opposite of what our respondents expect. Recent reports have implied that, with the implementation of AI, productivity might increase, and greater efficiency of care delivery will allow healthcare systems to provide more and better care to more people. [38] The recent AI economic model for diagnosis and treatment [39] demonstrated that AI-related reductions in time for diagnosis and treatment could potentially lead to a threefold increase in patients per day in the next 10 years.

The respondents did not perceive that the delegation of certain tasks to AI would result in AI replacing physicians, or in the physicians’ role being threatened, because their role is not only to provide a diagnosis but to fully engage with the patients, offering consolation, consultations, and more. Sezgin [40] provides concrete instances of the ways in which AI enhances healthcare without taking the place of physicians. For example, AI-assisted decision support systems work with magnetic resonance imaging and ultrasound equipment to help physicians with diagnosis, and AI enhances speech recognition in dictation devices that record radiologists’ notes. Nagy and Sisk [34] argue that although AI-based tools might reduce the burden of administrative tasks, they might also increase the interpersonal demands of patient care. Therefore, AI-based tools have the potential to place the patient at the center of the caring process, safeguarding the patients’ autonomy and assisting them in making informed decisions that align with their values. [41]

The potential loss of a holistic approach by neglecting humanness in healthcare

The essential aspects of the patient–physician relationship, such as effective communication and personal interaction, are already under threat due to excessive administrative tasks, physician workload, and technology that hinders direct patient interaction. Indeed, physicians endorse the need for synergy between AI and physicians; they consider the physical contact between physicians and patients as something that must be preserved, and which could be threatened by the implementation of AI. This is due to the perception that interaction between patients and physicians will likely decrease, leading to alienation, and excessive digitalization could further reduce communication and connection within patient–physician relationships. Sparrow and Hatherley [42] emphasize the paradox of the expectations of AI, which predict that, on the one hand, it will help improve the physician's efficiency but, on the other—taking into consideration the economics of healthcare—it might erode the empathic and compassionate nature of the relationship between patients and physicians as a result of increased numbers of patient consultations each day, due to the physician's increased efficiency.

On the contrary, some studies [10,14] have reported that AI-based tools represent an opportunity to enhance the humanistic aspects of the patient–physician relationship by requiring enhanced communication skills in explaining to patients the outputs of AI-based tools that might influence their care. Explaining how a particular decision has been made is the first step in building a trusting relationship between the physician, patient, and AI [24], which might lead towards a more productive patient–physician relationship by increasing the transparency of decision-making. [25] The lack of explainability might make it difficult for physicians [26] to take responsibility for decisions involving AI systems, as the participants in this study emphasized that physicians should retain ultimate responsibility. The ability of a human expert to explain and reverse-engineer AI decision-making processes is still necessary, even in situations in which AI tools can supplement physicians and, in certain circumstances, even significantly influence the decision-making process in the future. [27] Therefore, keeping humans in the loop with regard to the inclusion of AI in healthcare will contribute to preserving the humane aspects of healthcare. Otherwise, the holistic elements of care might be lost and the dehumanization of the healthcare system [29] would render AI a severe disadvantage, rather than a significant benefit.

Strengths and limitations

Caution should be taken when interpreting the results of the study, as there are some limitations. The sample size was not calculated using power analysis; instead, the data saturation approach was taken within each specific category of key stakeholders. Therefore, the findings cannot be generalized to the stakeholder groups as a whole. As participants were recruited using purposive sampling and the snowballing method, a degree of sample bias is acknowledged: some of the individuals who expressed their willingness to participate were keen on and familiar with AI technology, and they might have had higher medical and digital literacy levels than the average members of the included stakeholder groups in Croatia. Another limitation is the timeframe of the research, which was conducted during the COVID-19 pandemic, when meetings between patients and physicians were reduced to a minimum. As a result, participants may have been more aware of the impact of AI on the patient–physician relationship.

Conclusions

This multi-stakeholder qualitative study, focusing on the micro-level of healthcare decision-making, sheds new light on the impact of AI on healthcare and the future of patient–physician relationships. Following the recent introduction of ChatGPT and its impact on public awareness of the potential benefits of AI in healthcare, it would be interesting for future research endeavors to conduct a follow-up study to determine how the participants’ aspirations and expectations of AI in healthcare have changed. The results of the current study highlight the need to use a critical awareness approach to the implementation of AI in healthcare by applying critical thinking and reasoning, rather than simply relying upon the recommendation of the algorithm. It is important not to neglect clinical reasoning and consideration of best clinical practices, while avoiding a negative impact on the existing patient–physician relationship by preserving its core values, such as trust and honesty situated in open and sincere communication. The implementation of AI should not be allowed to cause the dehumanization and deterioration of healthcare but should help to bring the patient to the center of the healthcare focus. Therefore, preservation of the trust-based relationship between patient and physician is urged, by emphasizing a human-centric model involving both the patient and the public even at the design stage of AI-based tool development.

Acknowledgements

We would like to thank our former research group member Ana Tomičić, PhD, who also participated in conducting and transcribing the interviews, as well as our former student research group members Josipa Blašković and Ana Posarić, for their assistance with the transcription of interviews. Furthermore, we want to express our immense gratitude to two anonymous reviewers who provided us with detailed, valuable, and constructive feedback for improving the manuscript. We also wish to acknowledge that the credit for the proposed future research avenue of a follow-up study following the introduction of ChatGPT goes to one of our two reviewers.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Hrvatska Zaklada za Znanost HrZZ (Croatian Science Foundation) (grant number UIP-2019-04-3212).

Ethical approval

The ethics committee of the Catholic University of Croatia approved this study (number: 498-03-01-04/2-19-02).

Conflict of interest

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Supplementary material

- Supplementary material 1 (.docx)

Footnotes

- In Croatian, the noun physician (liječnik) is masculine; therefore, the patient refers to the physician as a male.

References

Notes

This presentation is faithful to the original, with only a few minor changes to presentation, grammar, and punctuation. In some cases important information was missing from the references, and that information was added. The original lists references in alphabetical order; this version lists them in order of appearance, by design.